关于Ubuntu18.04/20.04安装后的一系列环境配置过程的总结

关于Ubuntu18.04/20.04安装后的一系列环境配置过程的总结

ZZU-RoboCup环境配置

Updating(最速更新链接【支持一些扩展语法,观感良好】):博客)…

[!IMPORTANT]

ZZU-SR的童鞋配置环境前可以给鄙人发邮件:E-mail,另外:

如果装了

Anaconda3/Miniconda3,最好设置auto_activate_base: false,或者需要编译时conda deactivate,否则会影响编译。本文根据重要程度对各个步骤进行分类:

- ESSENTIAL (必需)

- RECOMMENDED (推荐)

- OPTIONAL (可选)

- NOT RECOMMENDED (不宜)

- NOT REQUIRED (无需)

- EOL (停更)

目录

本文用到的部分文件打包供无法访问部分网站的童鞋下载 (EOL):

链接:

https://pan.baidu.com/s/1PgmWHKl8oyX_cWYx_uZJrg?pwd=zwz4

提取码:

zwz4

ESSENTIAL

刚进入系统一段时间,系统会通知是否更新到新版本的系统(比如Ubuntu20.04 → Ubuntu22.04 or Later),选择否,之后会询问是否更新系统组件,选择否。

阻止软件更新弹窗:

打开终端输入:

sudo chmod a-x /usr/bin/update-notifier

将关机时间从90秒换为5秒:

sudo gedit /etc/systemd/system.conf

将:

#DefaultTimeoutStopSec=90s

改为:

DefaultTimeoutStopSec=5s

保存退出,打开终端输入:

sudo systemctl daemon-reload

更换国内源(可替换为自己中意的镜像源)

sudo gedit /etc/apt/sources.list

将原本的注释掉,在最下方加入:

# 华科源(Ubuntu 18.04)【默认注释了源码仓库,如有需要可自行取消注释】

deb https://mirrors.hust.edu.cn/ubuntu bionic main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu bionic main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu bionic-updates main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu bionic-updates main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu bionic-backports main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu bionic-backports main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu bionic-security main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu bionic-security main restricted universe multiverse

# 预发布软件源,不建议启用

# deb https://mirrors.hust.edu.cn/ubuntu bionic-proposed main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu bionic-proposed main restricted universe multiverse

# 华科源(Ubuntu 20.04)【默认注释了源码仓库,如有需要可自行取消注释】

deb https://mirrors.hust.edu.cn/ubuntu focal main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu focal main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu focal-updates main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu focal-updates main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu focal-backports main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu focal-backports main restricted universe multiverse

deb https://mirrors.hust.edu.cn/ubuntu focal-security main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu focal-security main restricted universe multiverse

# 预发布软件源,不建议启用

# deb https://mirrors.hust.edu.cn/ubuntu focal-proposed main restricted universe multiverse

# deb-src https://mirrors.hust.edu.cn/ubuntu focal-proposed main restricted universe multiverse

sudo apt update -y && sudo apt upgrade -y

anaconda镜像源(~/.condarc):

[!TIP]

注意替换

envs_dirs中的绝对路径!

custom_channels中鄙人只填了自己可能用到的,其它第三方源列表可参考清华源的文档自行添加。

channels:

- defaults

show_channel_urls: true

default_channels:

- https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/r

- https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/pro

- https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main

- https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free

- https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/msys2

custom_channels:

msys2: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

menpo: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

numba: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

pyviz: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

omnia: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

ohmeta: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

plotly: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

fastai: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

caffe2: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

Paddle: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

dglteam: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

pytorch: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

rapidsai: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

MindSpore: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

pytorch3d: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

pytorch-lts: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

conda-forge: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

pytorch-test: https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud

nvidia: https://mirrors.sustech.edu.cn/anaconda-extra/cloud

envs_dirs:

- /home/m0rtzz/Programs/anaconda3/envs

auto_activate_base: false

pip设置镜像源:

mkdir -p ${HOME}/.config/pip/ && cd ${HOME}/.config/pip/ && \

tee ${HOME}/.config/pip/pip.conf > /dev/null << EOF

[global]

index-url = https://mirrors.hust.edu.cn/pypi/web/simple

extra-index-url =

https://mirrors.tuna.tsinghua.edu.cn/pypi/web/simple

https://mirrors.bfsu.edu.cn/pypi/web/simple

# https://pypi.nvidia.com

# https://pypi.ngc.nvidia.com

trusted-host =

mirrors.hust.edu.cn

mirrors.tuna.tsinghua.edu.cn

mirrors.bfsu.edu.cn

pypi.nvidia.com

pypi.ngc.nvidia.com

no-cache-dir = true

EOF

禁用Nouveau驱动

sudo tee -a /etc/modprobe.d/blacklist.conf > /dev/null << EOF

# 禁用Nouveau驱动

blacklist nouveau

options nouveau modeset=0

EOF

sudo update-initramfs -u

reboot

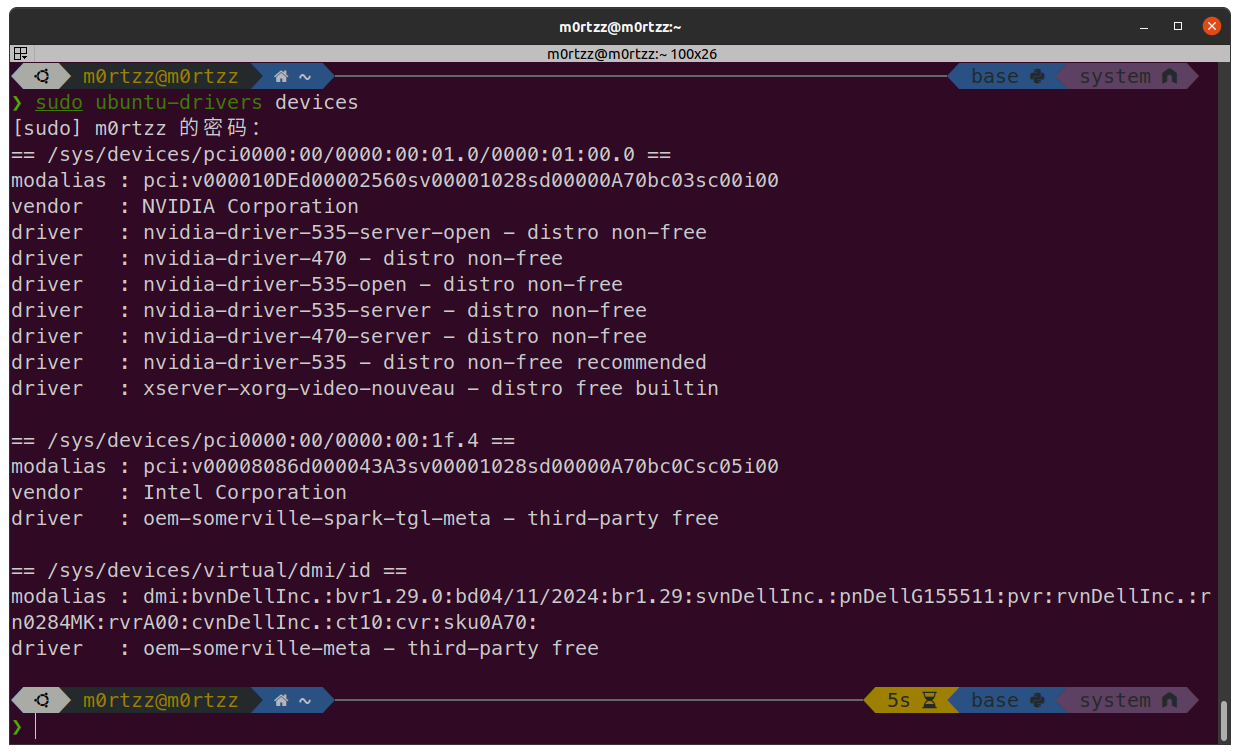

NVIDIA驱动

[!CAUTION]

由于众所周知的原因,安装

NVIDIA显卡驱动有可能会损坏系统,如果损坏可以重装并看看网上的其他教程,鄙人曾经尝试过多种方法,认定这种方法最快捷且最不容易损坏系统。

打开终端,输入:

sudo apt install -y gcc g++ make zlib1g

sudo ubuntu-drivers devices

寻找带有recommended的版本,输入:

# `your_version`是你的版本号

sudo apt install -y nvidia-driver-your_version nvidia-settings nvidia-prime

sudo apt update -y

sudo apt upgrade -y

reboot

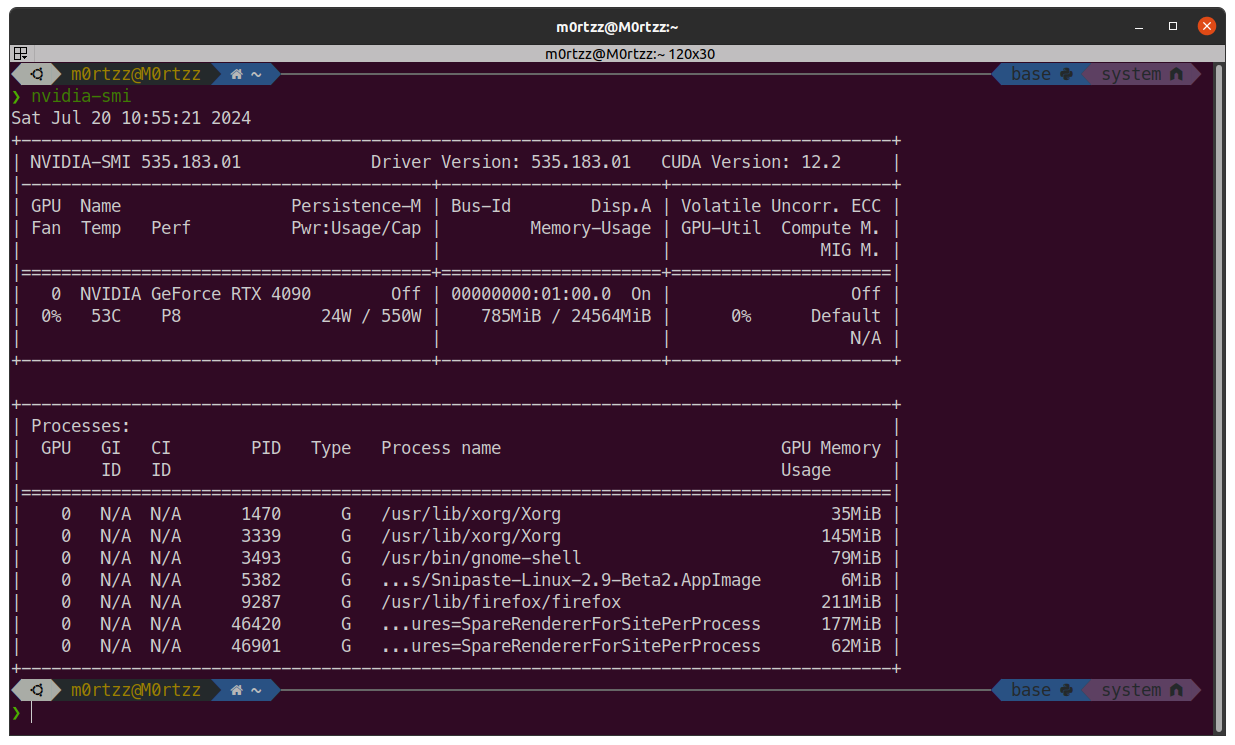

验证版本:

nvidia-smi

CUDA

https://developer.nvidia.com/cuda-toolkit-archive

选择≤上一步nvidia-smi显示的CUDA Version进行安装,官方有教程。

安装好之后打开终端输入:

sudo tee -a /etc/profile > /dev/null << 'EOF'

# CUDA

export PATH=${PATH}:/usr/local/cuda/bin

export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:/usr/local/cuda/lib64

export CUDA_HOME=/usr/local/cuda # 通过设置软链接`/usr/local/cuda`,可以做到多版本CUDA共存

EOF

source /etc/profile

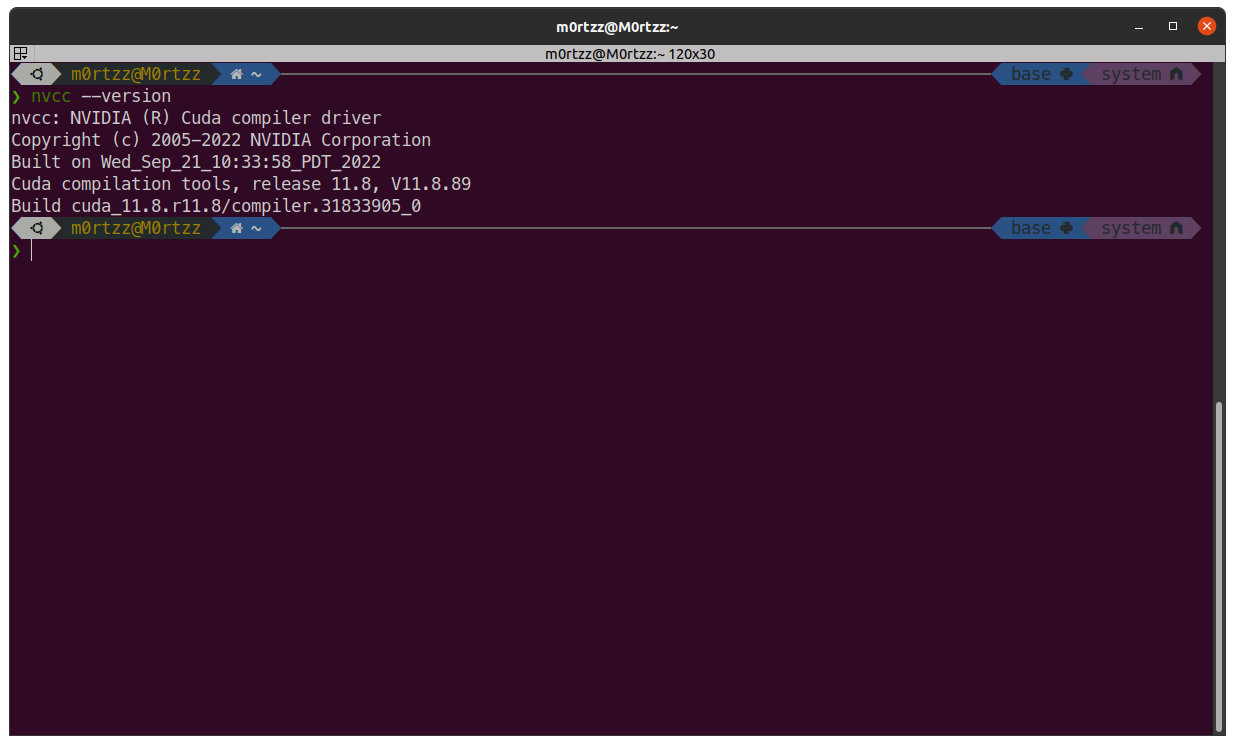

接下来验证CUDA版本:

nvcc --version

安装成功!

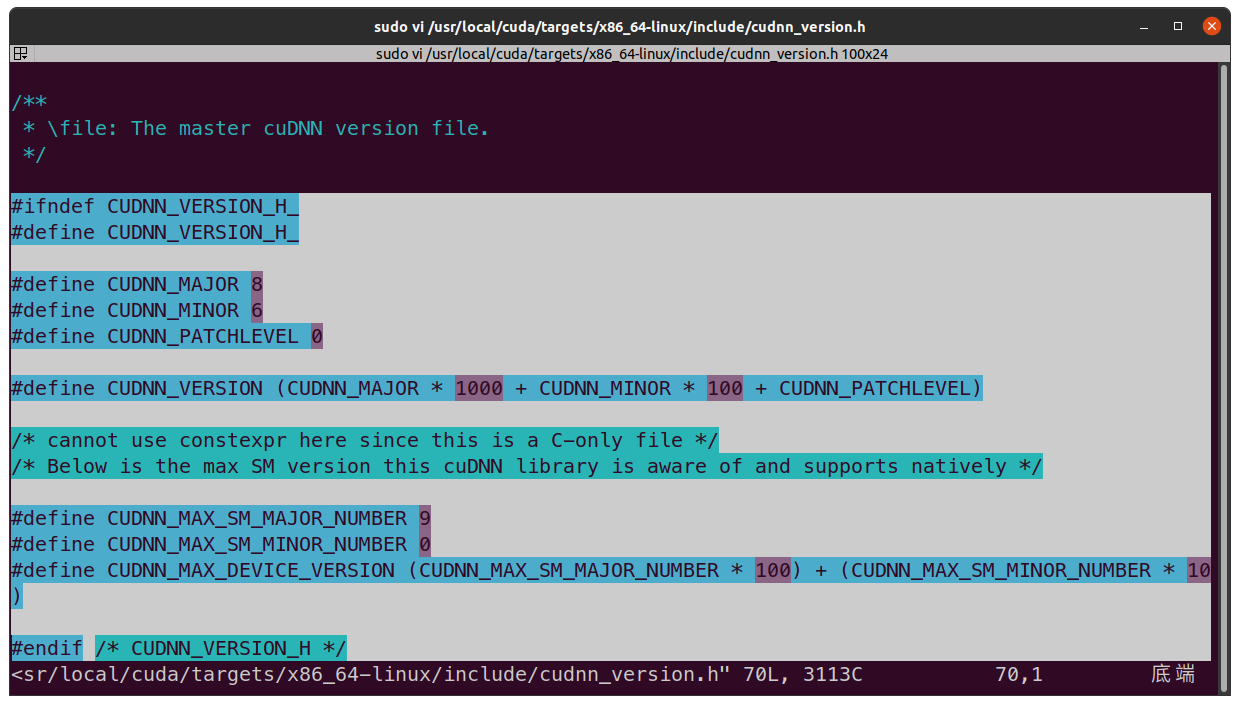

cuDNN

https://developer.nvidia.com/rdp/cudnn-archive

[!TIP]

官方安装教程(选择合适版本的NVIDIA cuDNN Installation Guide,鄙人一般来说会安装和已安装

CUDA的发布时间相近的版本,之前安装PaddlePaddle的时候发现GPU版PaddlePaddle依赖库要求的CUDA工具包版本和cuDNN版本貌似也是这样对应的):https://docs.nvidia.com/deeplearning/cudnn/archives/index.html

tar -xvf cudnn-linux-x86_64-8.x.x.x_cudaX.Y-archive.tar.xz

sudo cp cudnn-*-archive/include/cudnn*.h /usr/local/cuda/include

sudo cp -P cudnn-*-archive/lib/libcudnn* /usr/local/cuda/lib64

sudo chmod a+r /usr/local/cuda/include/cudnn*.h /usr/local/cuda/lib64/libcudnn*

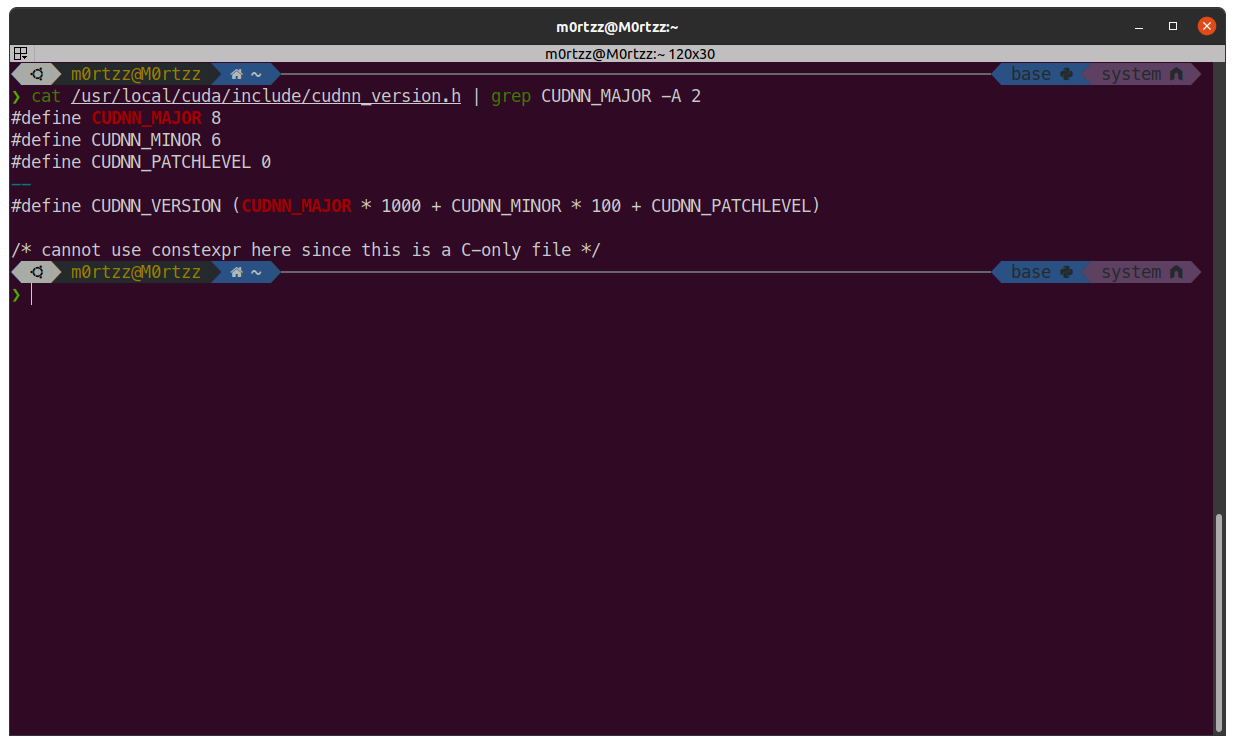

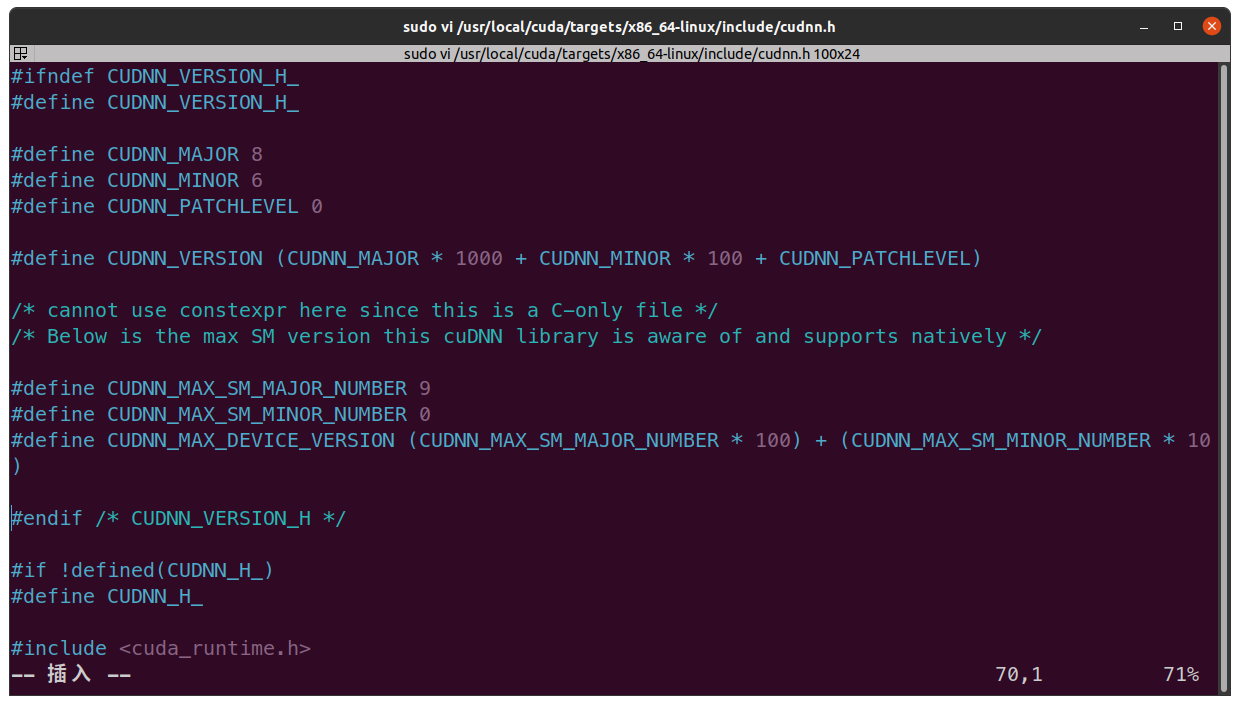

验证是否安装成功:

cat /usr/local/cuda/include/cudnn_version.h | grep CUDNN_MAJOR -A 2

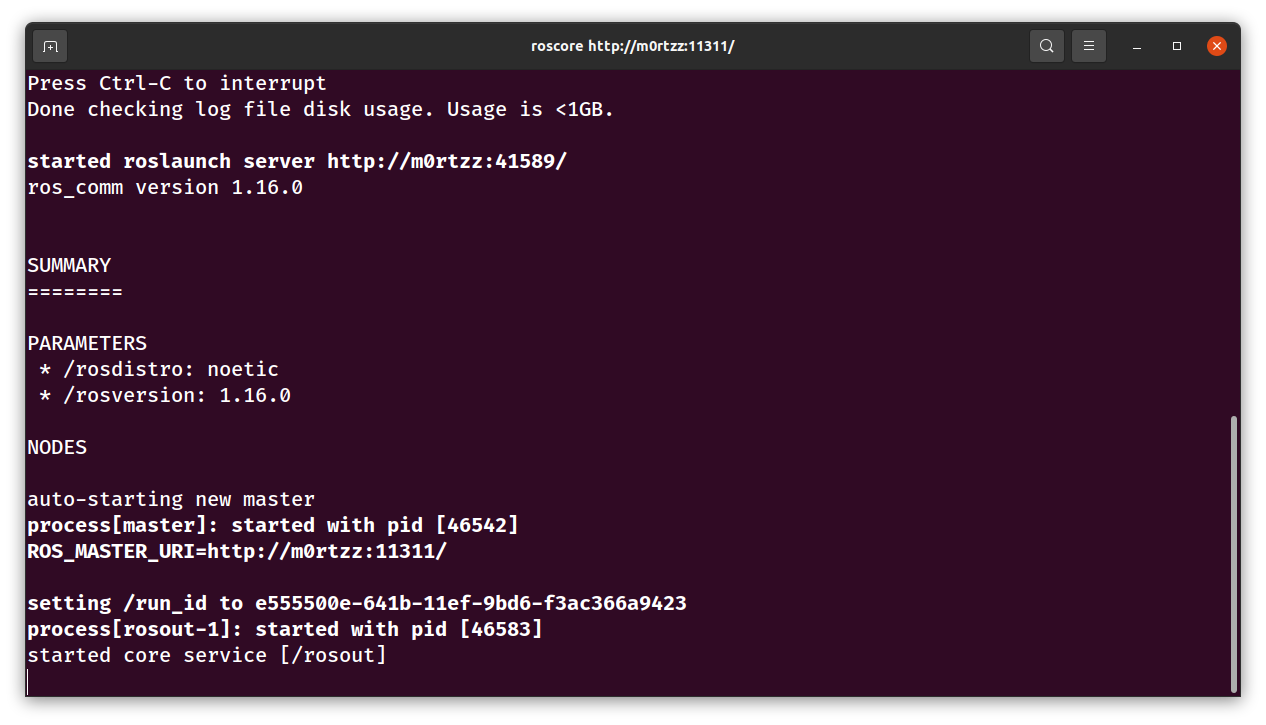

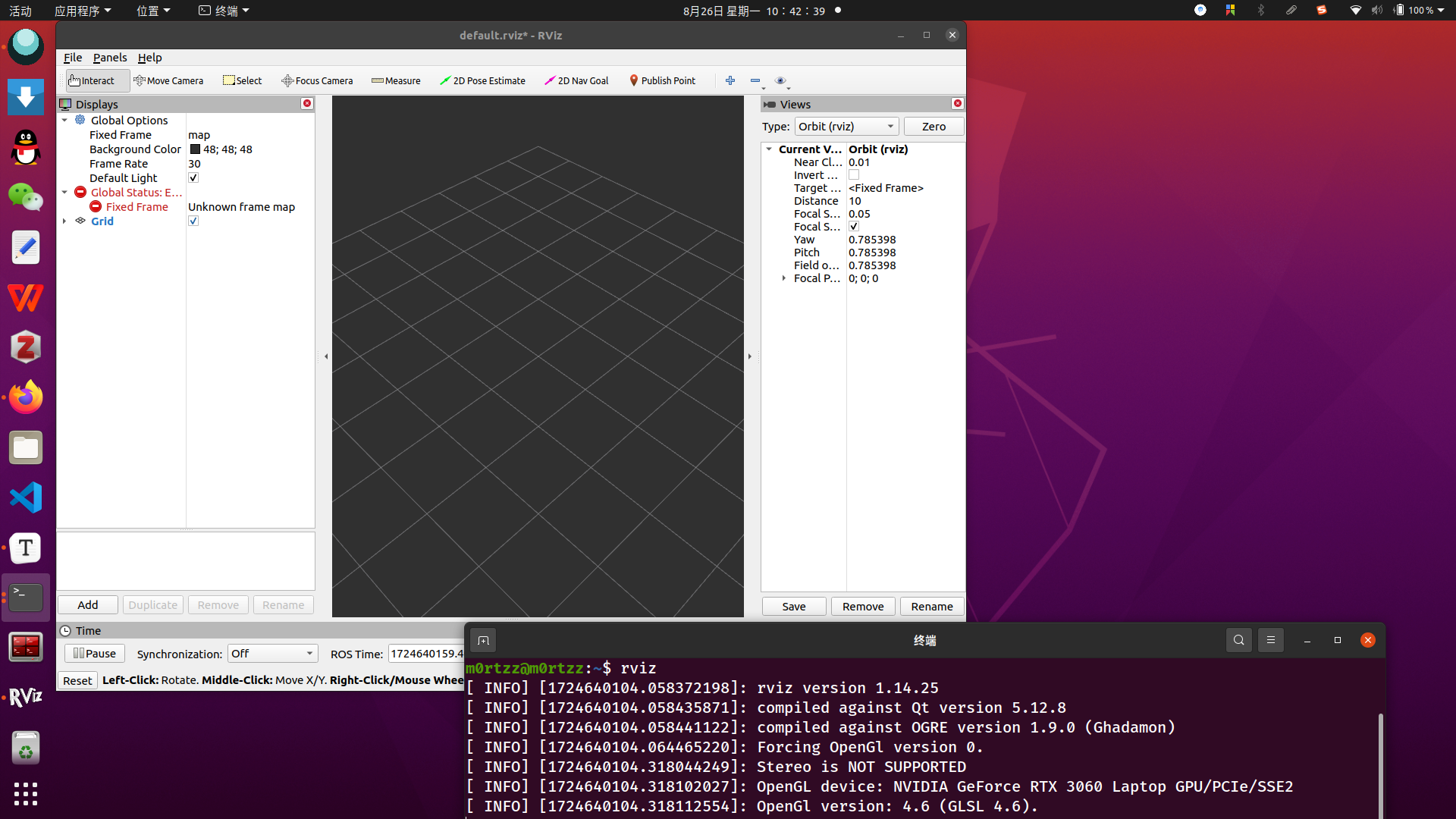

ROS-noetic(有些图忘记截了)

sudo gpg --keyserver 'hkp://keyserver.ubuntu.com:80' --recv-key C1CF6E31E6BADE8868B172B4F42ED6FBAB17C654

sudo gpg --export C1CF6E31E6BADE8868B172B4F42ED6FBAB17C654 | sudo tee /usr/share/keyrings/ros.gpg > /dev/null

sudo tee /etc/apt/sources.list.d/ros-latest.list > /dev/null << EOF

deb [signed-by=/usr/share/keyrings/ros.gpg] https://mirrors.hust.edu.cn/ros/ubuntu/ $(lsb_release -sc) main

EOF

sudo apt update -y && sudo apt install -y ros-noetic-desktop-full

echo 'source /opt/ros/noetic/setup.bash' >> ~/.bashrc

source ~/.bashrc

sudo apt install -y python3-rosdep python3-rosinstall python3-rosinstall-generator python3-wstool build-essential

sudo apt install -y python3-pip

使用镜像源加速pip下载:

sudo pip3 install rosdepc -i https://mirrors.hust.edu.cn/pypi/web/simple

sudo rosdepc init

rosdepc update

sudo chmod 777 -R ~/.ros/

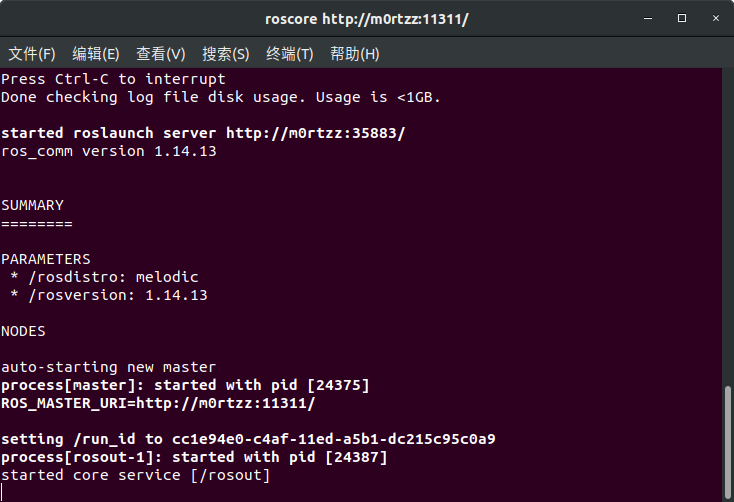

roscore

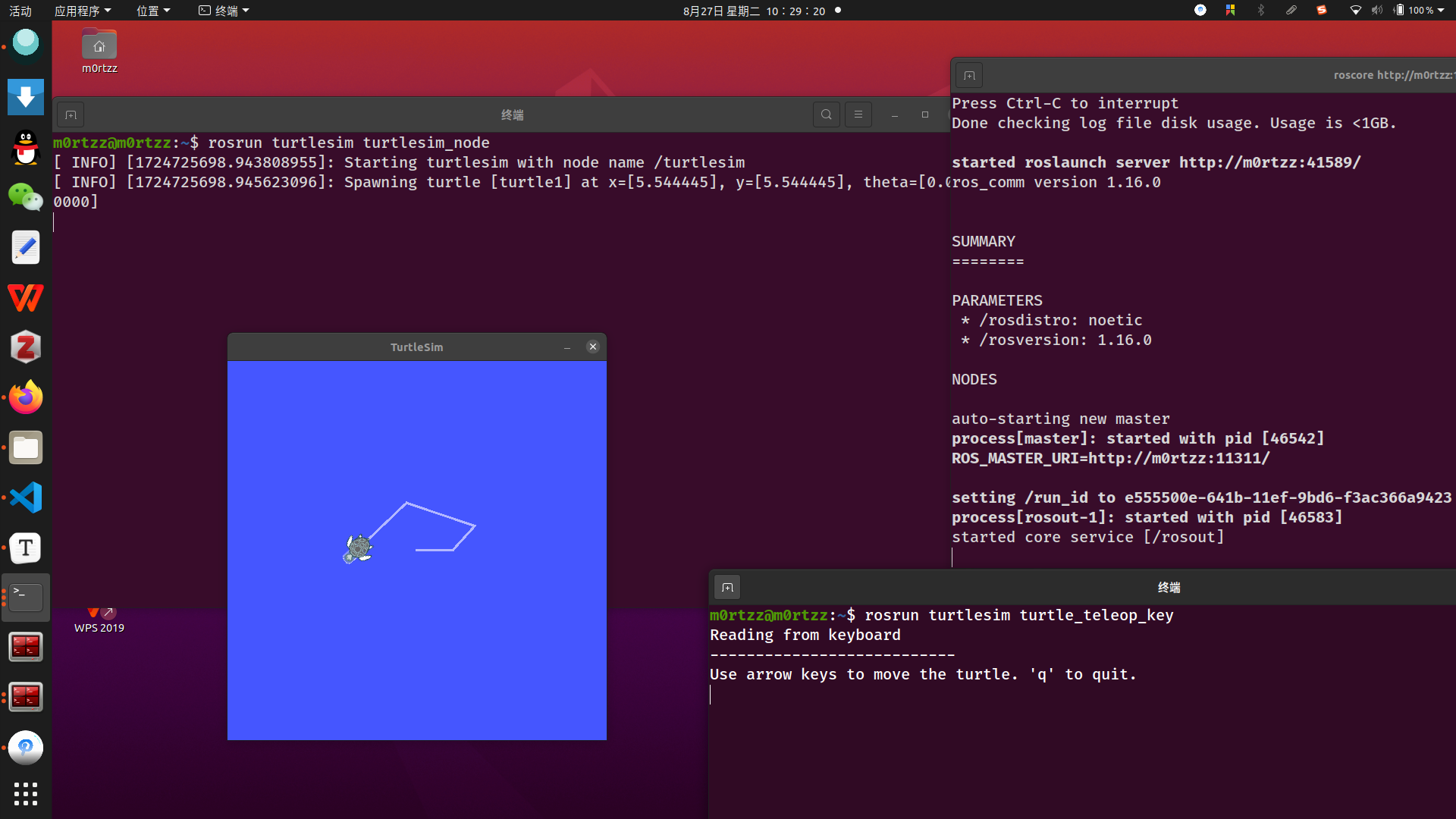

再新建两个终端,分别输入:

rosrun turtlesim turtlesim_node

rosrun turtlesim turtle_teleop_key

在 rosrun turtlesim turtle_teleop_key所在终端点击一下任意位置,然后使用←↕→小键盘控制,看小海龟会不会动,如果会动则安装成功。

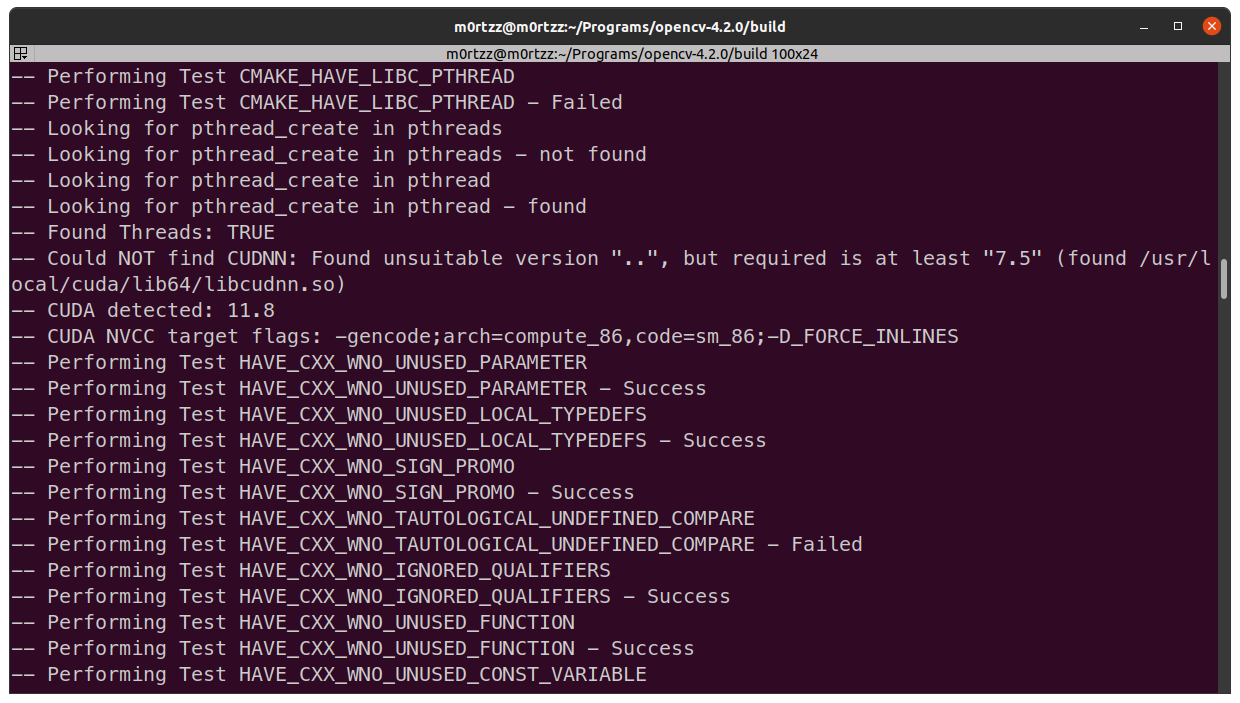

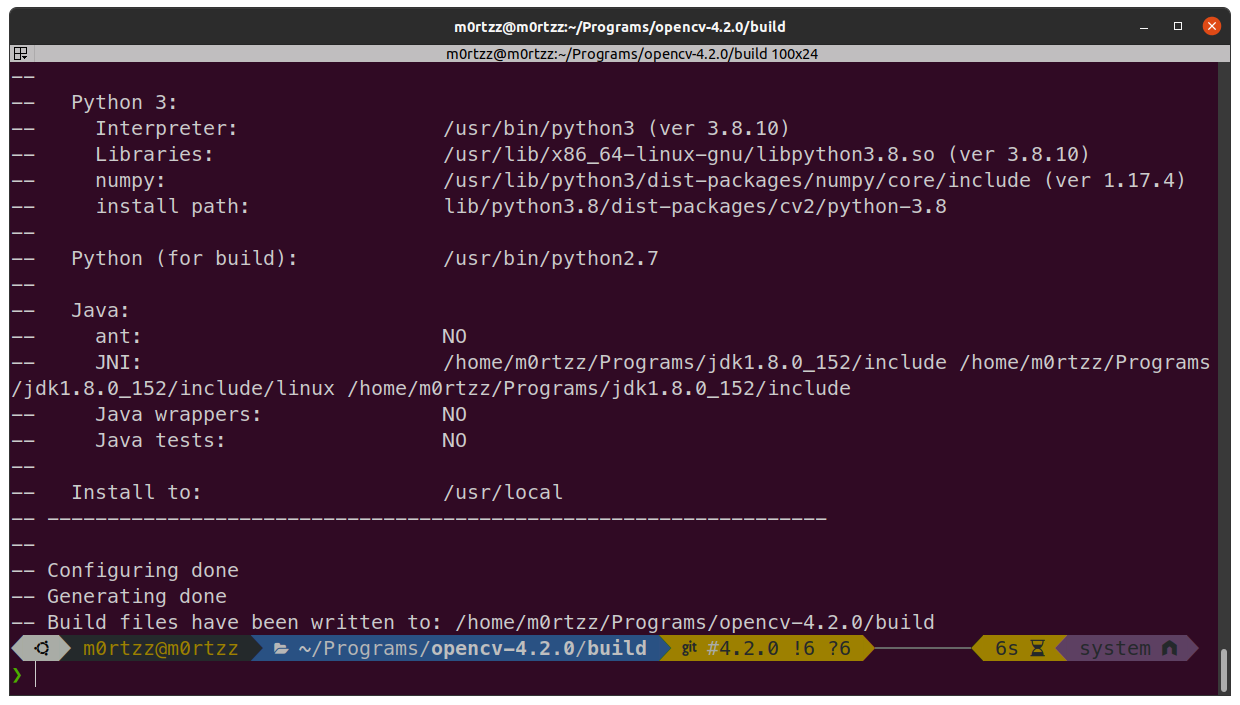

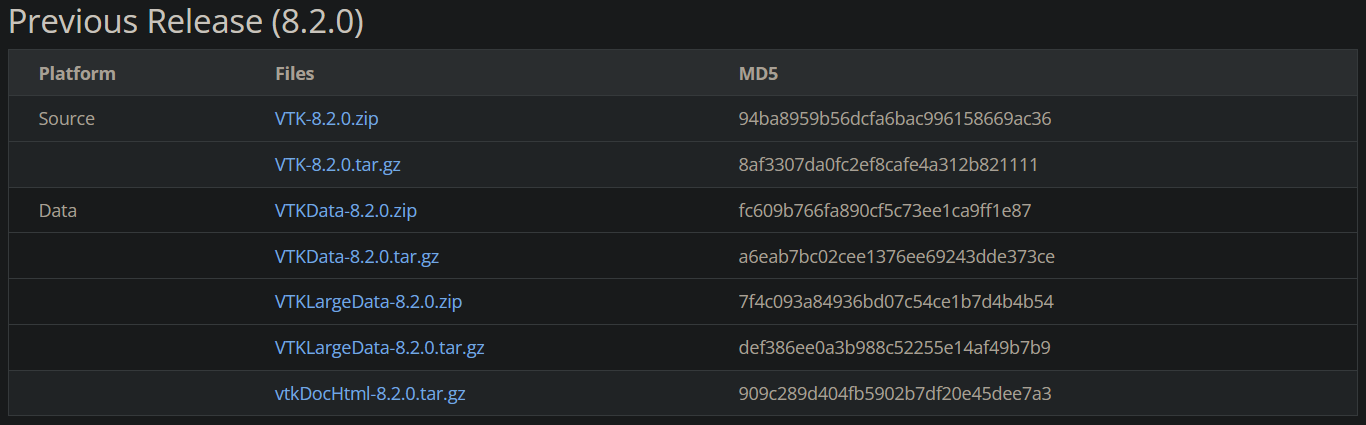

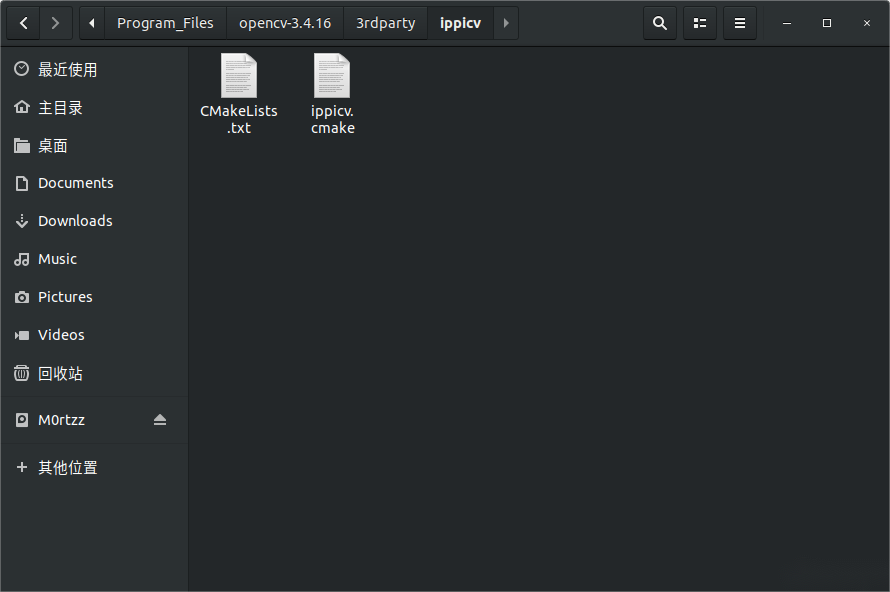

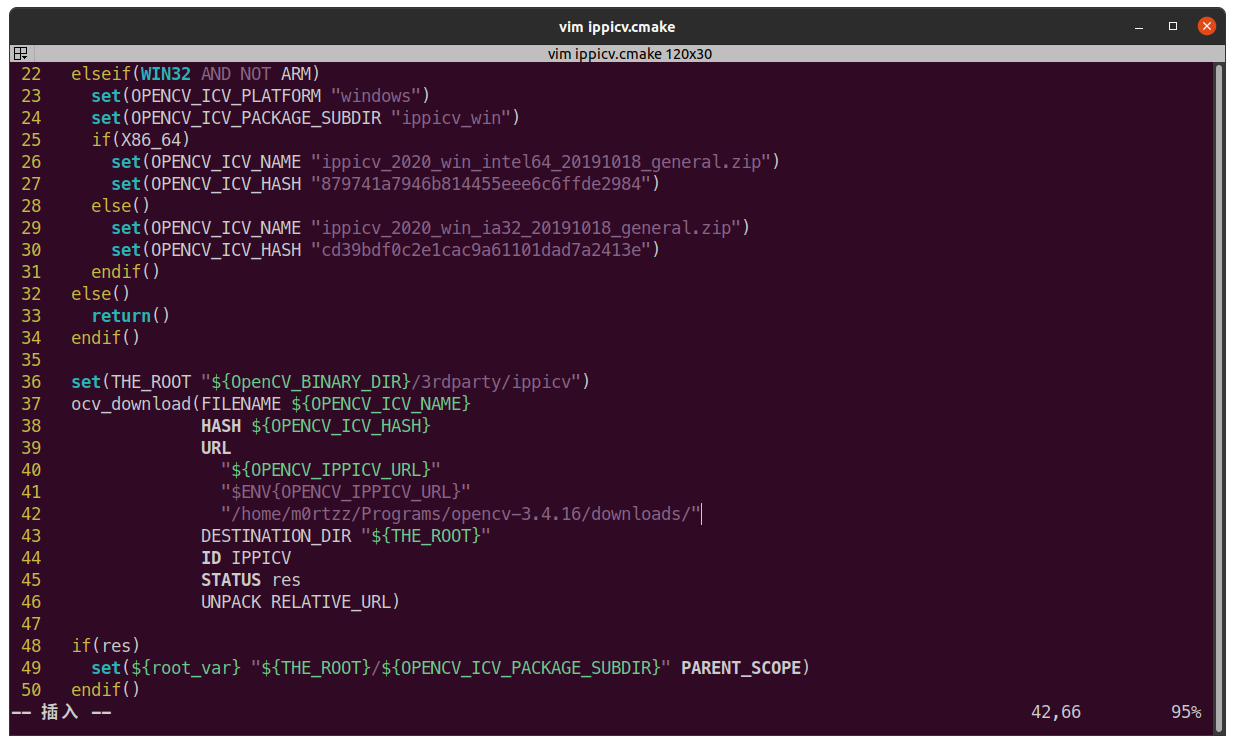

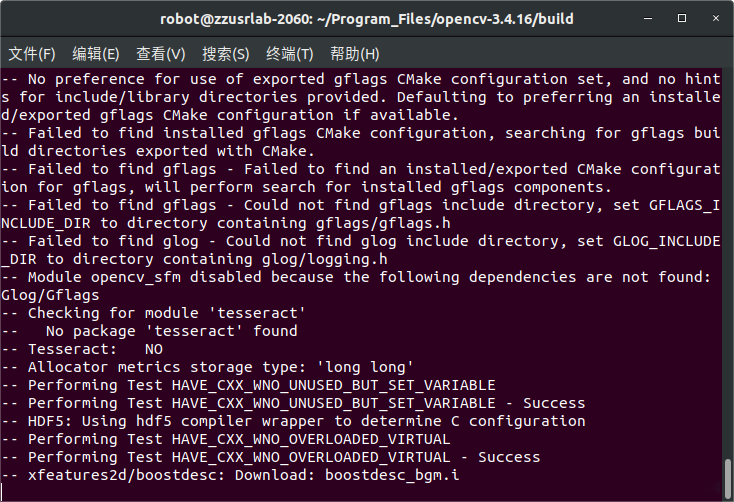

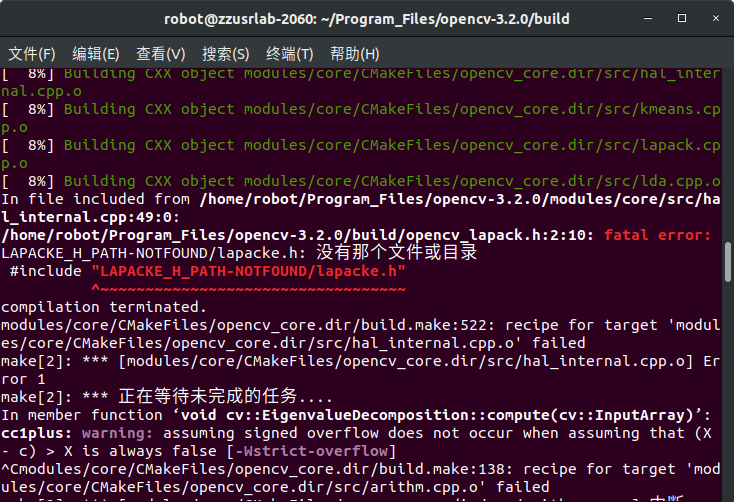

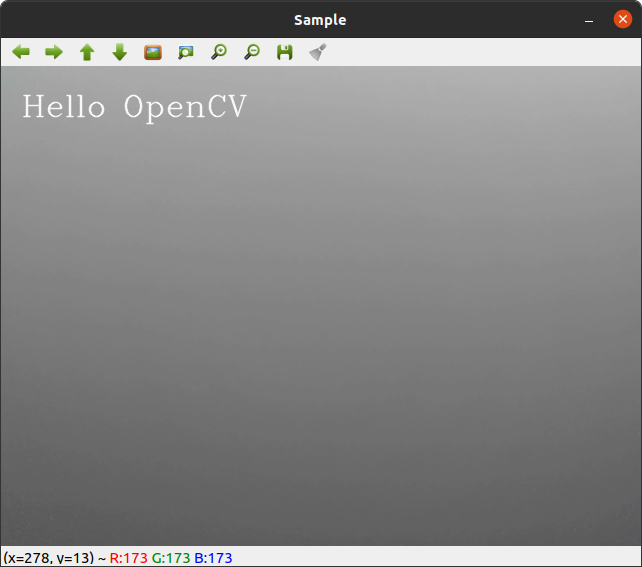

OpenCV-4.2.0及其扩展模块

经尝试多版本Ubuntu和OpenCV,装Ubuntu20.04,ROS noetic和OpenCV4.2.0及其扩展模块才能解决将彩色图像转换为网络所需的输入Blob后前馈时抛出的raised OpenCV exception和error: (-215:Assertion failed)等错误。

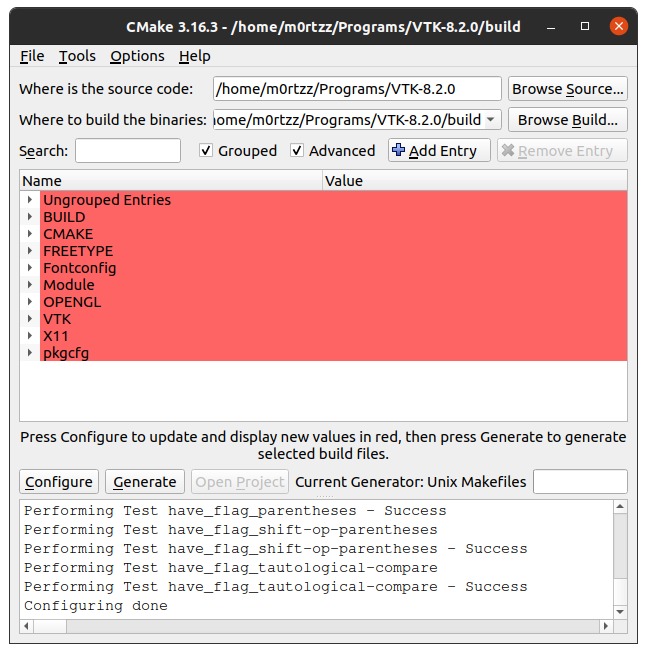

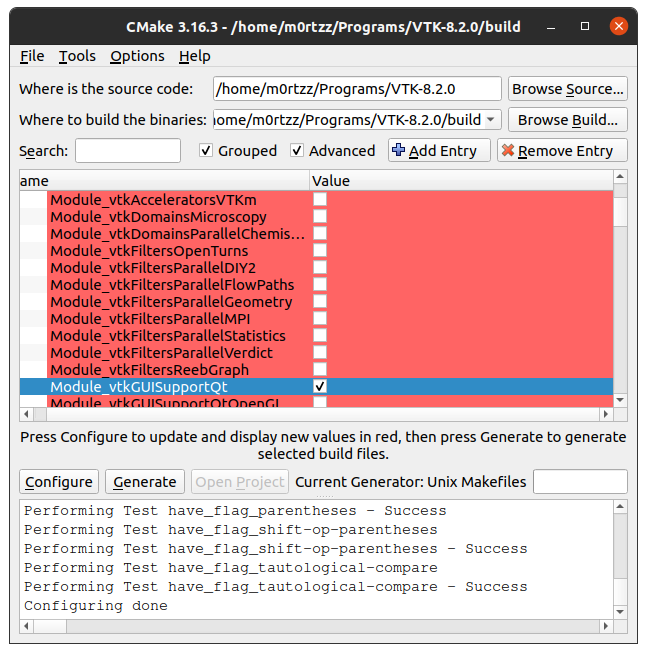

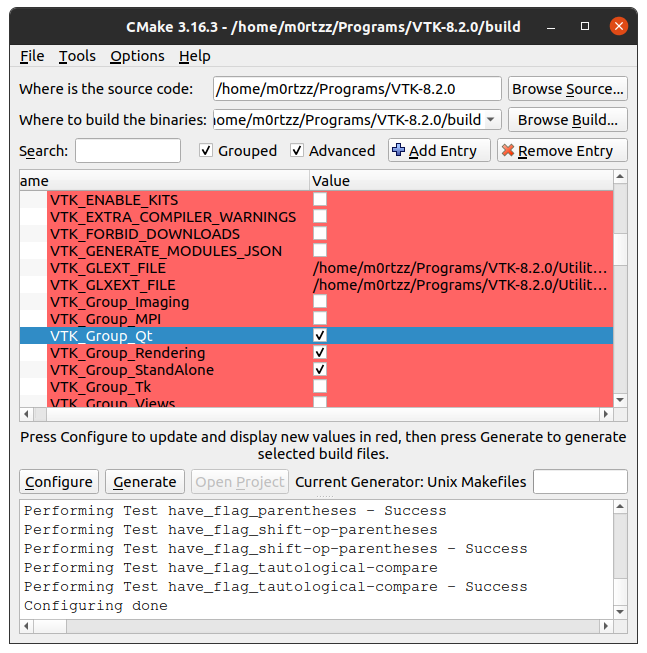

cmake命令

以下为几次成功安装的命令(注意替换命令中的绝对路径),安装过程可以参考NOT RECOMMENDED中的OpenCV3安装步骤:

cmake \

-D CMAKE_BUILD_TYPE=RELEASE \

-D WITH_GTK=ON \

-D WITH_VTK=ON \

-D WITH_ADE=OFF \

-D WITH_CUDA=ON \

-D WITH_CUDNN=ON \

-D WITH_OPENMP=ON \

-D WITH_LAPACK=OFF \

-D OPENCV_DNN_CUDA=ON \

-D CUDA_GENERATION=Auto \

-D CUDA_CUDA_LIBRARY=ON \

-D OPENCV_ENABLE_NONFREE=ON \

-D OPENCV_GENERATE_PKGCONFIG=ON \

-D ENABLE_PRECOMPILED_HEADERS=OFF \

-D OPENCV_EXTRA_MODULES_PATH=/home/m0rtzz/Programs/opencv-4.2.0/opencv_contrib-4.2.0/modules \

..

或:

cmake \

-D CMAKE_BUILD_TYPE=RELEASE \

-D WITH_GTK=ON \

-D WITH_VTK=ON \

-D WITH_ADE=OFF \

-D WITH_CUDA=ON \

-D WITH_CUDNN=ON \

-D WITH_OPENMP=ON \

-D WITH_LAPACK=OFF \

-D OPENCV_DNN_CUDA=ON \

-D CUDA_GENERATION=Auto \

-D CUDA_CUDA_LIBRARY=ON \

-D OPENCV_ENABLE_NONFREE=ON \

-D OPENCV_GENERATE_PKGCONFIG=ON \

-D ENABLE_PRECOMPILED_HEADERS=OFF \

-D CUDA_TOOLKIT_ROOT_DIR=/usr/local/cuda \

-D CUDNN_LIBRARY=/usr/local/cuda/lib64/libcudnn.so \

-D CUDA_CUDA_LIBRARY=/usr/local/cuda/lib64/stubs/libcuda.so \

-D OPENCV_EXTRA_MODULES_PATH=/home/m0rtzz/Programs/opencv-4.2.0/opencv_contrib-4.2.0/modules \

..

或:

cmake \

-D CMAKE_BUILD_TYPE=RELEASE \

-D WITH_GTK=ON \

-D WITH_VTK=ON \

-D WITH_ADE=OFF \

-D WITH_CUDA=ON \

-D WITH_CUDNN=ON \

-D WITH_OPENMP=ON \

-D WITH_LAPACK=OFF \

-D CUDA_ARCH_BIN=8.6 \

-D OPENCV_DNN_CUDA=ON \

-D CUDA_GENERATION=Auto \

-D CUDA_CUDA_LIBRARY=ON \

-D OPENCV_ENABLE_NONFREE=ON \

-D OPENCV_GENERATE_PKGCONFIG=ON \

-D ENABLE_PRECOMPILED_HEADERS=OFF \

-D CUDA_HOST_COMPILER:FILEPATH=/usr/bin/gcc \

-D CUDNN_LIBRARY=/usr/local/cuda/lib64/libcudnn.so \

-D OPENCV_EXTRA_MODULES_PATH=/home/m0rtzz/Programs/opencv-4.2.0/opencv_contrib-4.2.0/modules \

..

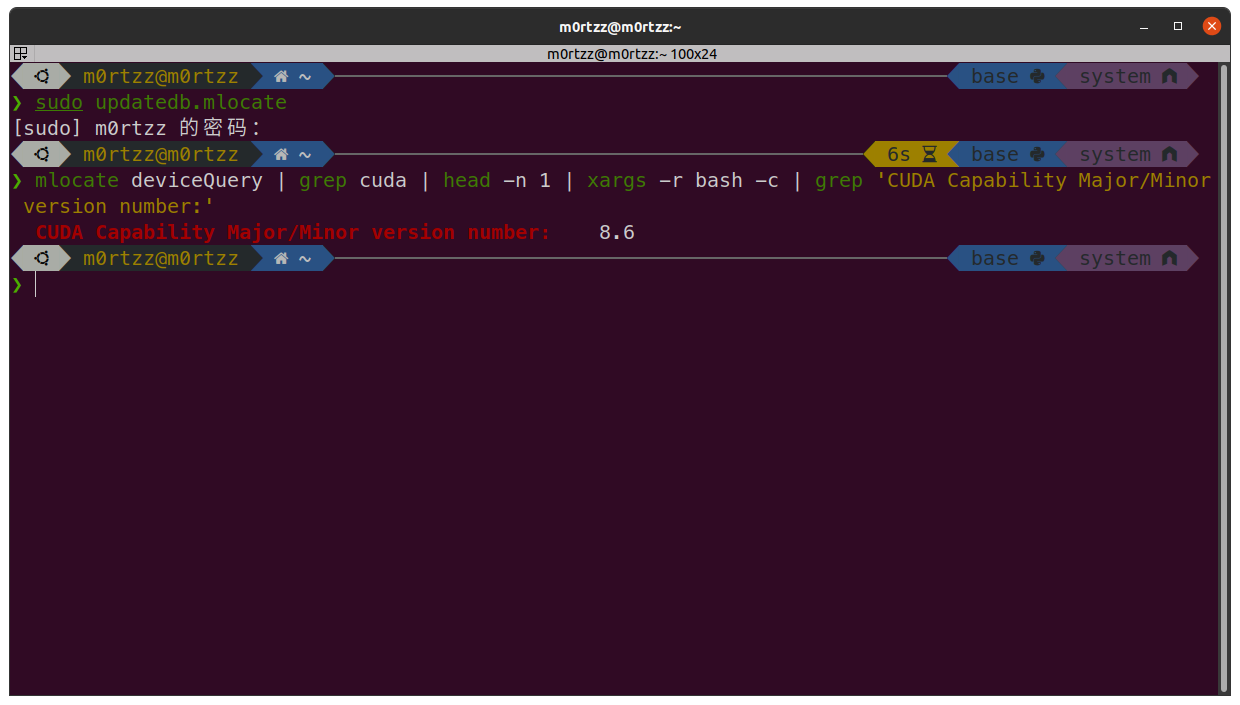

CUDA_ARCH_BIN查看命令:

sudo apt install -y mlocate

sudo updatedb.mlocate

mlocate deviceQuery | grep cuda | head -n 1 | xargs -r bash -c | grep 'CUDA Capability Major/Minor version number:'

部分报错解决办法

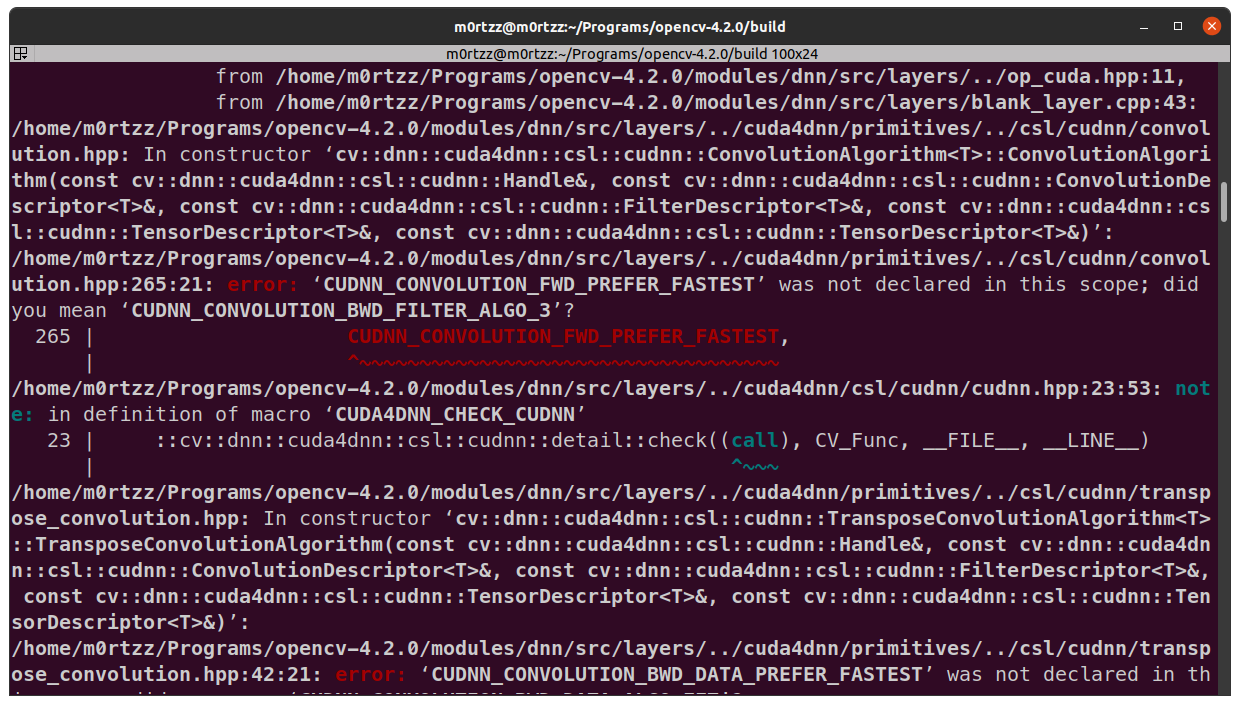

cuDNN8.X相关

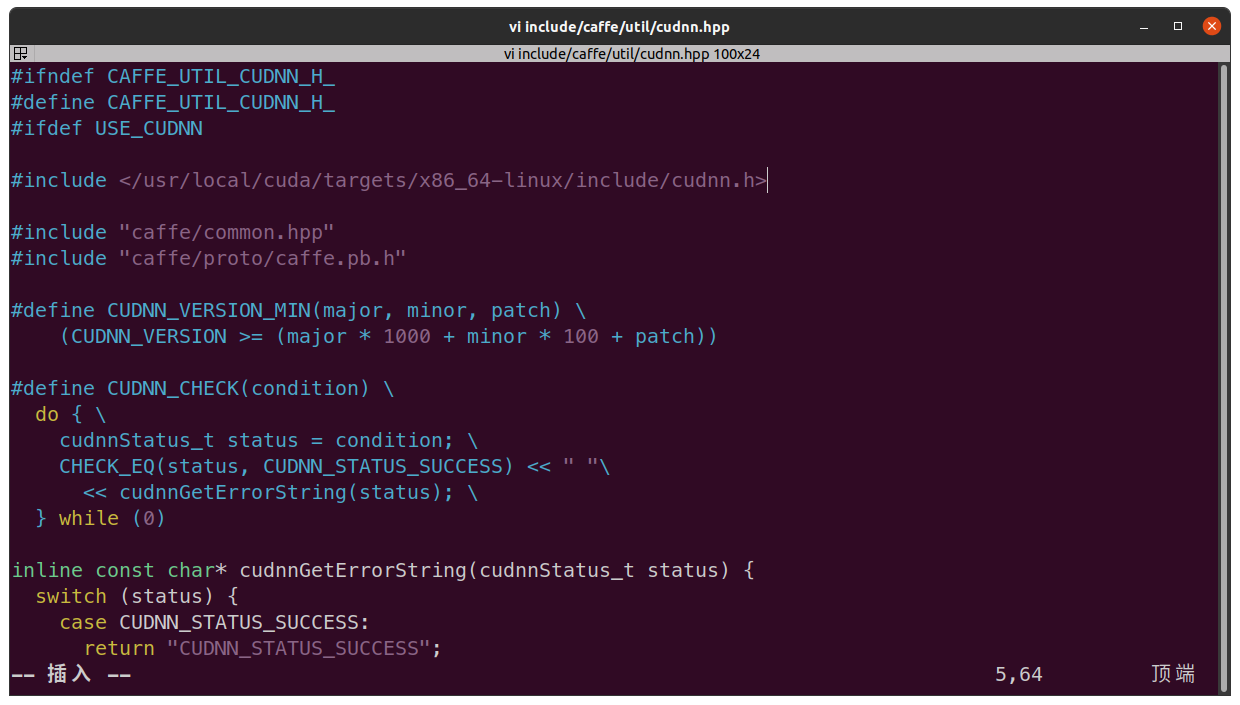

无法识别cuDNN版本

解决办法:

# @file: $(git rev-parse --show-toplevel)/cmake/FindCUDNN.cmake

# @line: 66左右

# extract version from the include

if(CUDNN_INCLUDE_DIR)

file(READ "${CUDNN_INCLUDE_DIR}/cudnn.h" CUDNN_H_CONTENTS) # [!code --]

if(EXISTS "${CUDNN_INCLUDE_DIR}/cudnn_version.h") # [!code ++]

file(READ "${CUDNN_INCLUDE_DIR}/cudnn_version.h" CUDNN_H_CONTENTS) # [!code ++]

else() # [!code ++]

file(READ "${CUDNN_INCLUDE_DIR}/cudnn.h" CUDNN_H_CONTENTS) # [!code ++]

endif() # [!code ++]

string(REGEX MATCH "define CUDNN_MAJOR ([0-9]+)" _ "${CUDNN_H_CONTENTS}")

set(CUDNN_MAJOR_VERSION ${CMAKE_MATCH_1} CACHE INTERNAL "")

message("CUDNN_MAJOR_VERSION:" ${CUDNN_MAJOR_VERSION}) # [!code ++]

string(REGEX MATCH "define CUDNN_MINOR ([0-9]+)" _ "${CUDNN_H_CONTENTS}")

set(CUDNN_MINOR_VERSION ${CMAKE_MATCH_1} CACHE INTERNAL "")

message("CUDNN_MINOR_VERSION:" ${CUDNN_MINOR_VERSION}) # [!code ++]

string(REGEX MATCH "define CUDNN_PATCHLEVEL ([0-9]+)" _ "${CUDNN_H_CONTENTS}")

set(CUDNN_PATCH_VERSION ${CMAKE_MATCH_1} CACHE INTERNAL "")

message("CUDNN_PATCH_VERSION:" ${CUDNN_PATCH_VERSION}) # [!code ++]

set(CUDNN_VERSION

"${CUDNN_MAJOR_VERSION}.${CUDNN_MINOR_VERSION}.${CUDNN_PATCH_VERSION}"

CACHE

STRING # [!code --]

INTERNAL # [!code ++]

"cuDNN version"

)

unset(CUDNN_H_CONTENTS)

endif()

Reference:

添加cuDNN8.X支持

打补丁:

.patch文件:

cd $(git rev-parse --show-toplevel)/ && \

wget -q --show-progress https://raw.gitcode.com/M0rtzz/opencv4-cudnn8-support/raw/master/opencv_pr_17685.patch -O opencv_pr_17685.patch && \

git apply opencv_pr_17685.patch

或者手动加入PR代码:

.diff文件:

cd $(git rev-parse --show-toplevel)/ && \

wget -q --show-progress https://raw.gitcode.com/M0rtzz/opencv4-cudnn8-support/raw/master/opencv_pr_17685.diff -O opencv_pr_17685.diff

// @brief: 行号是按照从上到下添加的顺序依次排列的

// @file: $(git rev-parse --show-toplevel)/modules/dnn/src/cuda4dnn/csl/cudnn/convolution.hpp

// @line: 226左右

CUDA4DNN_CHECK_CUDNN(cudnnSetConvolutionGroupCount(descriptor, group_count));

/**/// [!code ++]

#if CUDNN_MAJOR >= 8 // [!code ++]

/* cuDNN 7 and below use FMA math by default. cuDNN 8 includes TF32 Tensor Ops // [!code ++]

* in the default setting. TF32 convolutions have lower precision than FP32. // [!code ++]

* Hence, we set the math type to CUDNN_FMA_MATH to reproduce old behavior. // [!code ++]

*/ // [!code ++]

CUDA4DNN_CHECK_CUDNN(cudnnSetConvolutionMathType(descriptor, CUDNN_FMA_MATH)); // [!code ++]

#endif // [!code ++]

/**/// [!code ++]

if (std::is_same<T, half>::value)

/********************************分割线********************************/

// @line: 263左右

ConvolutionAlgorithm(

const Handle& handle, // [!code --]

const ConvolutionDescriptor<T>& conv, // [!code --]

const FilterDescriptor<T>& filter, // [!code --]

const TensorDescriptor<T>& input, // [!code --]

const TensorDescriptor<T>& output) // [!code --]

const ConvolutionDescriptor<T>& convDesc, // [!code ++]

const FilterDescriptor<T>& filterDesc, // [!code ++]

const TensorDescriptor<T>& inputDesc, // [!code ++]

const TensorDescriptor<T>& outputDesc) // [!code ++]

/********************************分割线********************************/

// @line: 269左右

{

#if CUDNN_MAJOR >= 8 // [!code ++]

int requestedAlgoCount = 0, returnedAlgoCount = 0; // [!code ++]

CUDA4DNN_CHECK_CUDNN(cudnnGetConvolutionForwardAlgorithmMaxCount(handle.get(), &requestedAlgoCount)); // [!code ++]

std::vector<cudnnConvolutionFwdAlgoPerf_t> results(requestedAlgoCount); // [!code ++]

CUDA4DNN_CHECK_CUDNN( // [!code ++]

cudnnGetConvolutionForwardAlgorithm_v7( // [!code ++]

handle.get(), // [!code ++]

inputDesc.get(), filterDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

requestedAlgoCount, // [!code ++]

&returnedAlgoCount, // [!code ++]

&results[0] // [!code ++]

) // [!code ++]

); // [!code ++]

/**/// [!code ++]

size_t free_memory, total_memory; // [!code ++]

CUDA4DNN_CHECK_CUDA(cudaMemGetInfo(&free_memory, &total_memory)); // [!code ++]

/**/// [!code ++]

bool found_conv_algorithm = false; // [!code ++]

for (int i = 0; i < returnedAlgoCount; i++) // [!code ++]

{ // [!code ++]

if (results[i].status == CUDNN_STATUS_SUCCESS && // [!code ++]

results[i].algo != CUDNN_CONVOLUTION_FWD_ALGO_WINOGRAD_NONFUSED && // [!code ++]

results[i].memory < free_memory) // [!code ++]

{ // [!code ++]

found_conv_algorithm = true; // [!code ++]

algo = results[i].algo; // [!code ++]

workspace_size = results[i].memory; // [!code ++]

break; // [!code ++]

} // [!code ++]

} // [!code ++]

/**/// [!code ++]

if (!found_conv_algorithm) // [!code ++]

CV_Error (cv::Error::GpuApiCallError, "cuDNN did not return a suitable algorithm for convolution."); // [!code ++]

#else // [!code ++]

CUDA4DNN_CHECK_CUDNN(

/********************************分割线********************************/

// @line: 304左右

cudnnGetConvolutionForwardAlgorithm(

handle.get(),

input.get(), filter.get(), conv.get(), output.get(), // [!code --]

inputDesc.get(), filterDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

CUDNN_CONVOLUTION_FWD_PREFER_FASTEST,

/********************************分割线********************************/

// @line: 314左右

cudnnGetConvolutionForwardWorkspaceSize(

handle.get(),

input.get(), filter.get(), conv.get(), output.get(), // [!code --]

inputDesc.get(), filterDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

algo, &workspace_size

/********************************分割线********************************/

// @line: 320左右

);

#endif // [!code ++]

}

ConvolutionAlgorithm& operator=(const ConvolutionAlgorithm&) = default;

// @brief: 行号是按照从上到下添加的顺序依次排列的

// @file: $(git rev-parse --show-toplevel)/modules/dnn/src/cuda4dnn/csl/cudnn/transpose_convolution.hpp

// @line: 31左右

TransposeConvolutionAlgorithm(

const Handle& handle, // [!code --]

const ConvolutionDescriptor<T>& conv, // [!code --]

const FilterDescriptor<T>& filter, // [!code --]

const TensorDescriptor<T>& input, // [!code --]

const TensorDescriptor<T>& output) // [!code --]

const ConvolutionDescriptor<T>& convDesc, // [!code ++]

const FilterDescriptor<T>& filterDesc, // [!code ++]

const TensorDescriptor<T>& inputDesc, // [!code ++]

const TensorDescriptor<T>& outputDesc) // [!code ++]

/********************************分割线********************************/

// @line: 31左右

{

#if CUDNN_MAJOR >= 8 // [!code ++]

int requestedAlgoCount = 0, returnedAlgoCount = 0; // [!code ++]

CUDA4DNN_CHECK_CUDNN(cudnnGetConvolutionBackwardDataAlgorithmMaxCount(handle.get(), &requestedAlgoCount)); // [!code ++]

std::vector<cudnnConvolutionBwdDataAlgoPerf_t> results(requestedAlgoCount); // [!code ++]

CUDA4DNN_CHECK_CUDNN( // [!code ++]

cudnnGetConvolutionBackwardDataAlgorithm_v7( // [!code ++]

handle.get(), // [!code ++]

filterDesc.get(), inputDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

requestedAlgoCount, // [!code ++]

&returnedAlgoCount, // [!code ++]

&results[0] // [!code ++]

) // [!code ++]

); // [!code ++]

/**/// [!code ++]

size_t free_memory, total_memory; // [!code ++]

CUDA4DNN_CHECK_CUDA(cudaMemGetInfo(&free_memory, &total_memory)); // [!code ++]

/**/// [!code ++]

bool found_conv_algorithm = false; // [!code ++]

for (int i = 0; i < returnedAlgoCount; i++) // [!code ++]

{ // [!code ++]

if (results[i].status == CUDNN_STATUS_SUCCESS && // [!code ++]

results[i].algo != CUDNN_CONVOLUTION_BWD_DATA_ALGO_WINOGRAD_NONFUSED && // [!code ++]

results[i].memory < free_memory) // [!code ++]

{ // [!code ++]

found_conv_algorithm = true; // [!code ++]

dalgo = results[i].algo; // [!code ++]

workspace_size = results[i].memory; // [!code ++]

break; // [!code ++]

} // [!code ++]

} // [!code ++]

/**/// [!code ++]

if (!found_conv_algorithm) // [!code ++]

CV_Error (cv::Error::GpuApiCallError, "cuDNN did not return a suitable algorithm for transpose convolution."); // [!code ++]

#else // [!code ++]

CUDA4DNN_CHECK_CUDNN(

/********************************分割线********************************/

// @line: 73左右

cudnnGetConvolutionBackwardDataAlgorithm(

handle.get(),

filter.get(), input.get(), conv.get(), output.get(), // [!code --]

filterDesc.get(), inputDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

CUDNN_CONVOLUTION_BWD_DATA_PREFER_FASTEST,

/********************************分割线********************************/

// @line: 83左右

cudnnGetConvolutionBackwardDataWorkspaceSize(

handle.get(),

filter.get(), input.get(), conv.get(), output.get(), // [!code --]

filterDesc.get(), inputDesc.get(), convDesc.get(), outputDesc.get(), // [!code ++]

dalgo, &workspace_size

/********************************分割线********************************/

// @line: 88左右

);

#endif // [!code ++]

}

TransposeConvolutionAlgorithm& operator=(const TransposeConvolutionAlgorithm&) = default;

Reference:

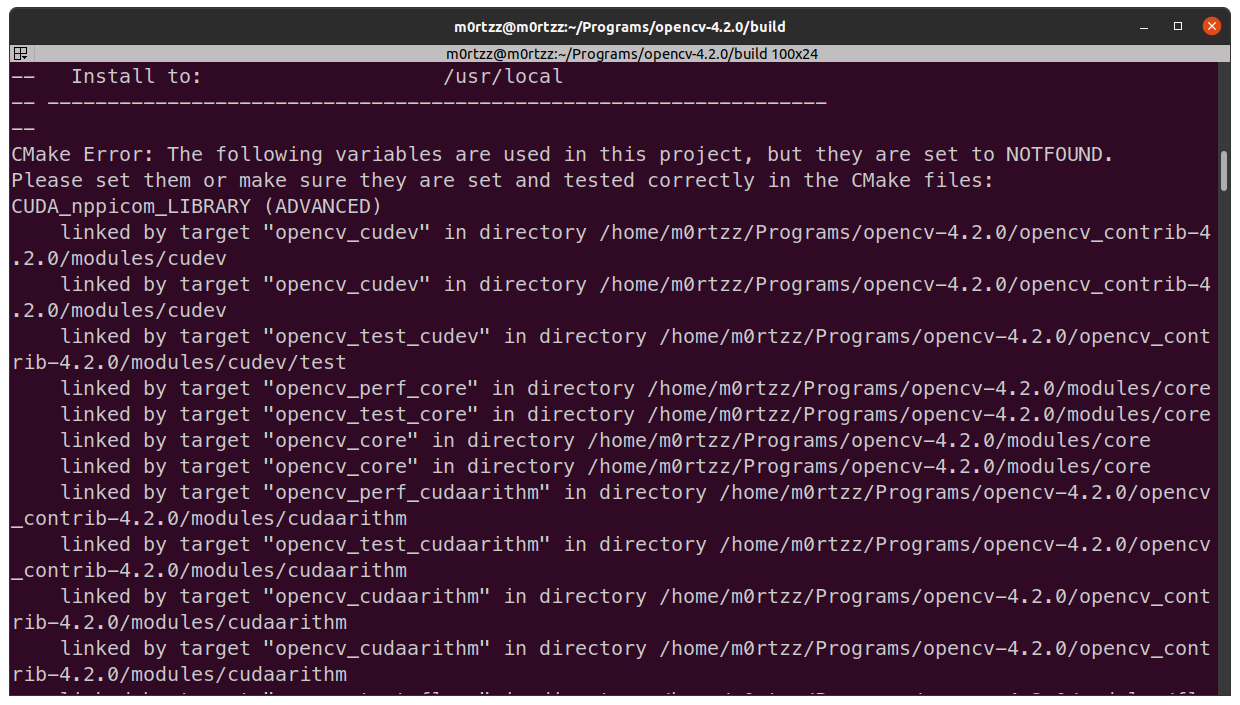

CUDA11.X相关

因为CUDA11.X不再支持CUDA_nppicom_LIBRARY而报错:

解决办法:

# @file: $(git rev-parse --show-toplevel)/cmake/OpenCVDetectCUDA.cmake

# @line: 29左右

if(CUDA_FOUND)

set(HAVE_CUDA 1)

if(CUDA_VERSION VERSION_GREATER_EQUAL "11.0") # [!code ++]

# CUDA 11.X removes nppicom # [!code ++]

ocv_list_filterout(CUDA_npp_LIBRARY "nppicom") # [!code ++]

ocv_list_filterout(CUDA_nppi_LIBRARY "nppicom") # [!code ++]

endif() # [!code ++]

if(WITH_CUFFT)

Reference:

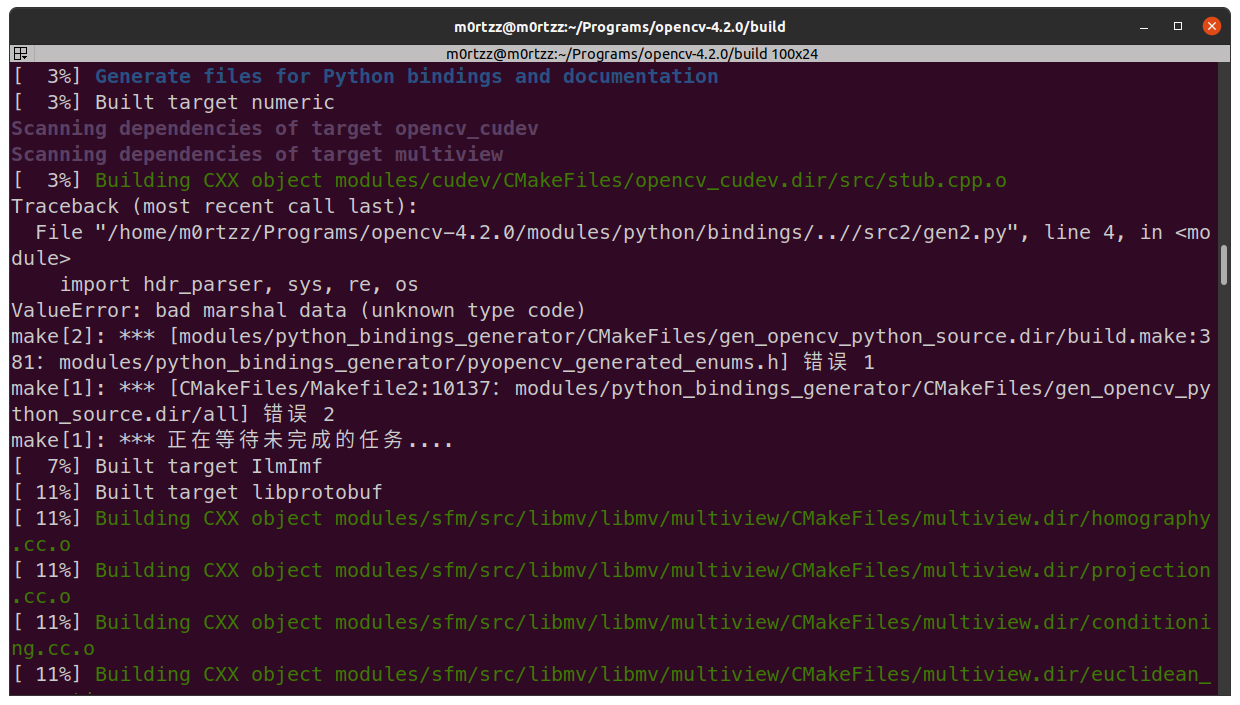

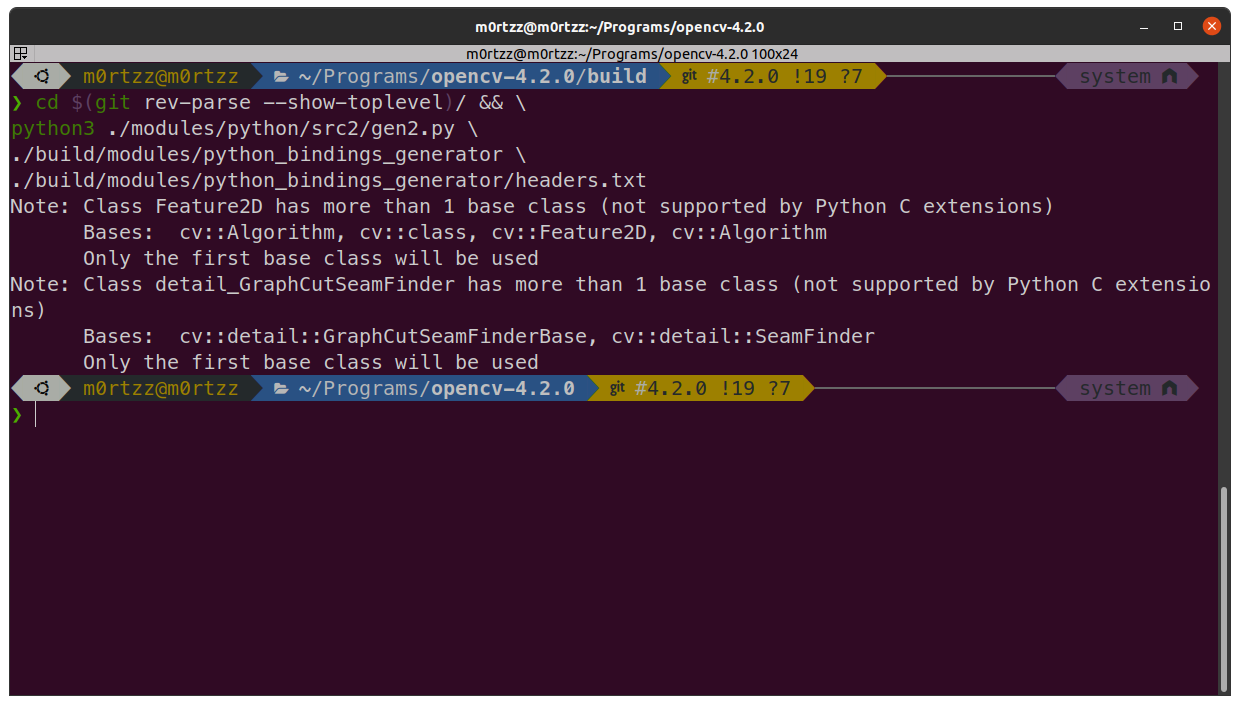

Python相关

可能是cmake找不到合适的Python解释器来执行脚本:

解决办法:

# 手动执行脚本

cd $(git rev-parse --show-toplevel)/ && \

python3 ./modules/python/src2/gen2.py \

./build/modules/python_bindings_generator \

./build/modules/python_bindings_generator/headers.txt

Reference:

https://github.com/opencv/opencv/issues/10771#issuecomment-376861139

处理配置文件

如果不执行以下几步,编译darknet_ros会报错: error: 'IplImage'之类的:

sudo cp /usr/local/lib/pkgconfig/opencv4.pc /usr/lib/pkgconfig/opencv4.pc

sudo cp /usr/lib/pkgconfig/opencv4.pc /usr/lib/pkgconfig/opencv.pc

百度智能云

sudo apt install -y curl libjsoncpp-dev

jsoncpp库的头文件改为:

#include <jsoncpp/json/json.h>

g++编译:

g++ test.cpp -o test.out -lcurl -ljsoncpp

运行:

./test.out

darknet、yolov3及darknet_ros工作空间

git clone https://github.com/AlexeyAB/darknet.git darknet

或公益加速源:

git clone https://ghp.ci/https://github.com/AlexeyAB/darknet.git darknet

cd darknet/

sudo gedit Makefile

修改以下前几行为:

GPU=1

CUDNN=1

CUDNN_HALF=1

OPENCV=1

AVX=0

OPENMP=1

LIBSO=1

ZED_CAMERA=0

ZED_CAMERA_v2_8=0

然后修改NVCC=后边为nvcc路径:

NVCC=/usr/local/cuda/bin/nvcc

打开终端,输入:

sudo tee /etc/ld.so.conf.d/cuda.conf > /dev/null << EOF

/usr/local/cuda/lib64

EOF

sudo ldconfig

sudo make -j$(nproc)

./darknet

输出为:

usage: ./darknet <function>

之后我们下载yolov3权重文件:

cd $(git rev-parse --show-toplevel)/ && \

mkdir weights && cd weights/ && \

wget -q --show-progress https://pjreddie.com/media/files/yolov3.weight

到此为止darknet就配置好了。

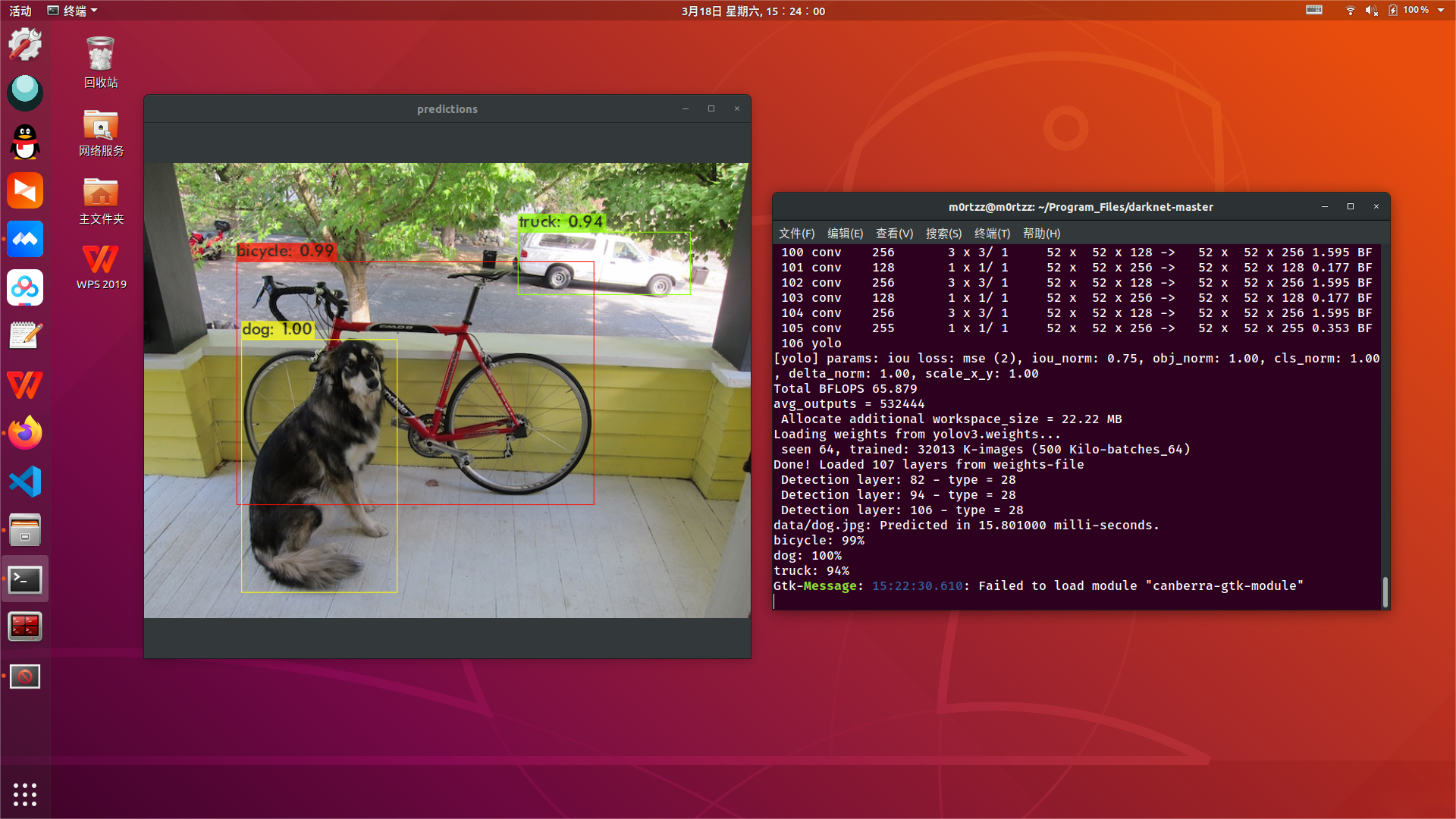

下面我们测试一下:

cd $(git rev-parse --show-toplevel)/ && \

./darknet detect cfg/yolov3.cfg weights/yolov3.weights data/dog.jpg

输出以下就证明配置没有问题:

输出的最后一行报错:

Gtk-Message: 15:22:30.610: Failed to load module "canberra-gtk-module"

解决方法:

sudo apt install -y 'libcanberra-gtk*'

安装之后重新运行就不会报错了。

darknet_ros工作空间(OpenCV-4.2.0):

mkdir -p catkin_ws/src && cd catkin_ws/src/ && catkin_init_workspace

cd .. && catkin_make

cd src/

git clone -b opencv4 --recursive https://github.com/M0rtzz/darknet_ros.git darknet_ros

cd darknet_ros/

git submodule update --recursive

如果视频流只有第一帧是RGB8编码格式,阅读源码后发现在show_image之前调用image.cpp中的rgbgr_image函数循环转换图像编码格式即可解决此问题:

// @file: image.cpp

void rgbgr_image(image im)

{

int i;

for(i = 0; i < im.w*im.h; ++i){

float swap = im.data[i];

im.data[i] = im.data[i+im.w*im.h*2];

im.data[i+im.w*im.h*2] = swap;

}

}

// @file: YoloObjectDetector.cpp

void *YoloObjectDetector::displayInThread(void *ptr)

{

// NOTE: Modified by M0rtzz, solved the problem of displaying video stream as bgr8

rgbgr_image(buff_[(buffIndex_ + 1) % 3]);

int c = show_image(buff_[(buffIndex_ + 1) % 3], "YOLO V3", waitKeyDelay_);

if (c != -1)

c = c % 256;

if (c == 27)

{

demoDone_ = 1;

return 0;

}

else if (c == 82)

{

demoThresh_ += .02;

}

else if (c == 84)

{

demoThresh_ -= .02;

if (demoThresh_ <= .02)

demoThresh_ = .02;

}

else if (c == 83)

{

demoHier_ += .02;

}

else if (c == 81)

{

demoHier_ -= .02;

if (demoHier_ <= .0)

demoHier_ = .0;

}

return 0;

}

之后:

catkin_make

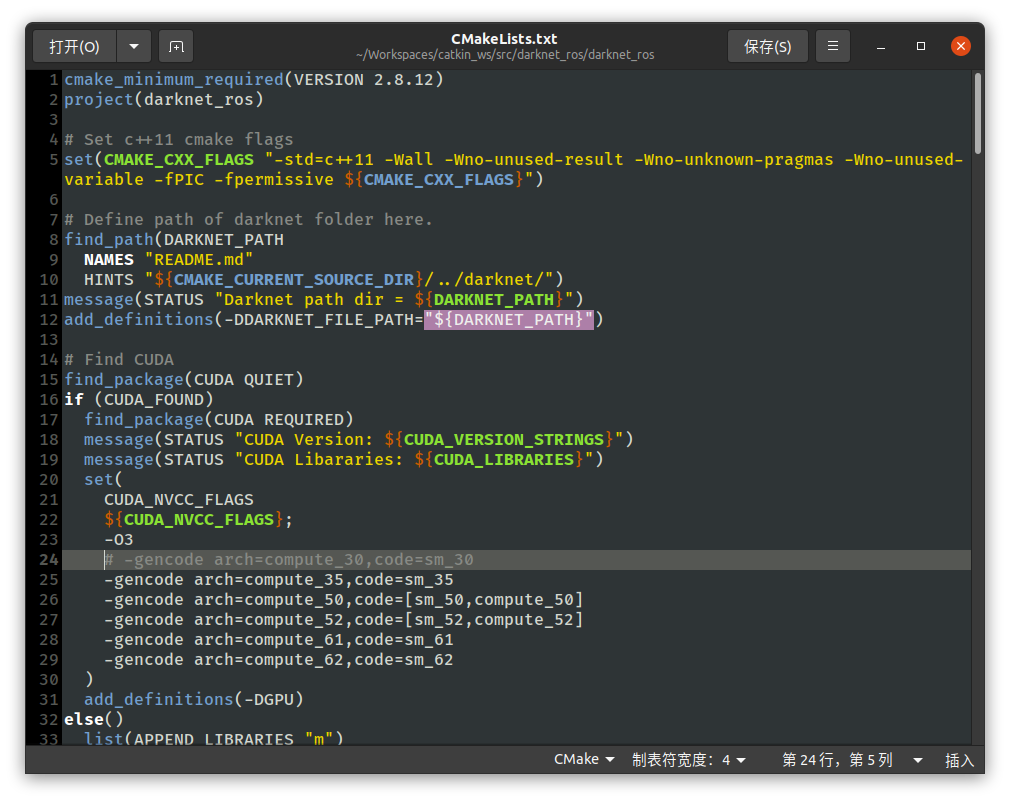

catkin_make如果编译不过的话(error: 'IplImage' 之类的,之前装OpenCV提到过避免报错的方法),注意以下命令是只编译darknet_ros一个包,若工作空间下有多个包需要一起编译那么把命令中的darknet_ros删除重新执行即可:

catkin_make darknet_ros \

--cmake-args \

-D CMAKE_CXX_FLAGS='-D CV__ENABLE_C_API_CTORS'

如果报错nvcc fatal : Unsupported gpu architecture 'compute_30'之类的,是因为CUDA11.X已经不支持compute_30了,我们将darknet_ros/darknet_ros/CMakeLists.txt中含有 compute_30的行进行注释后重新catkin_make:

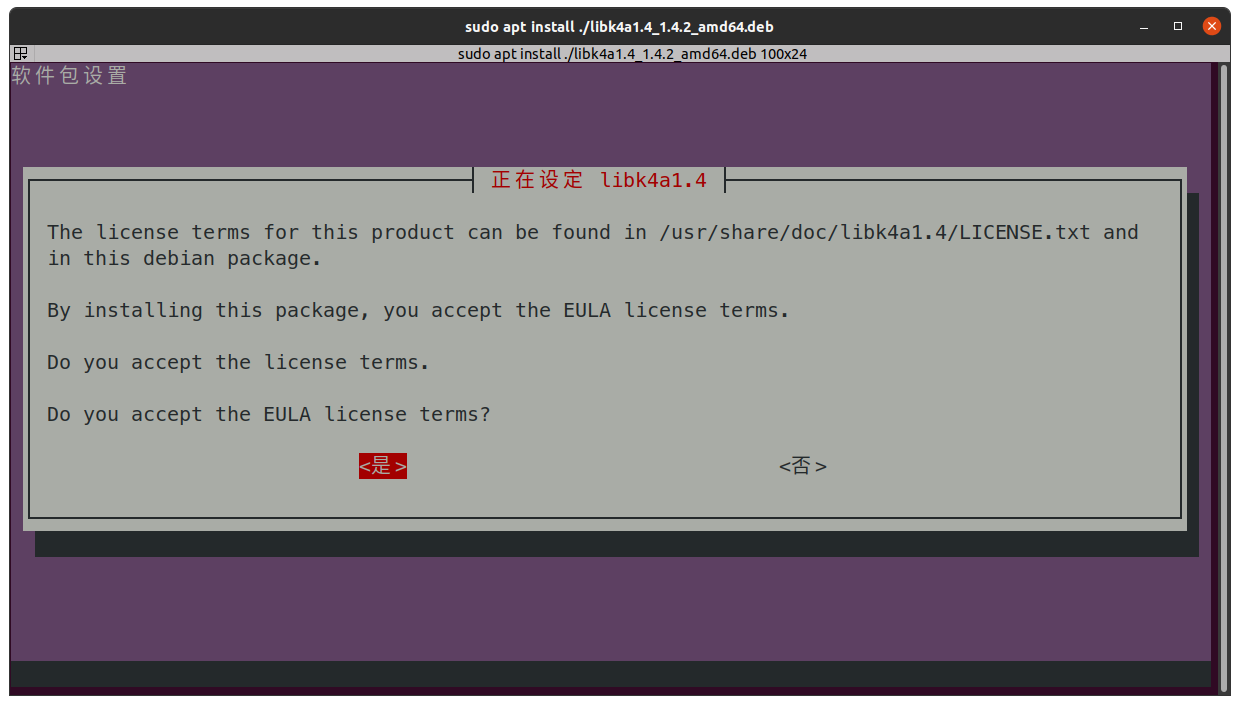

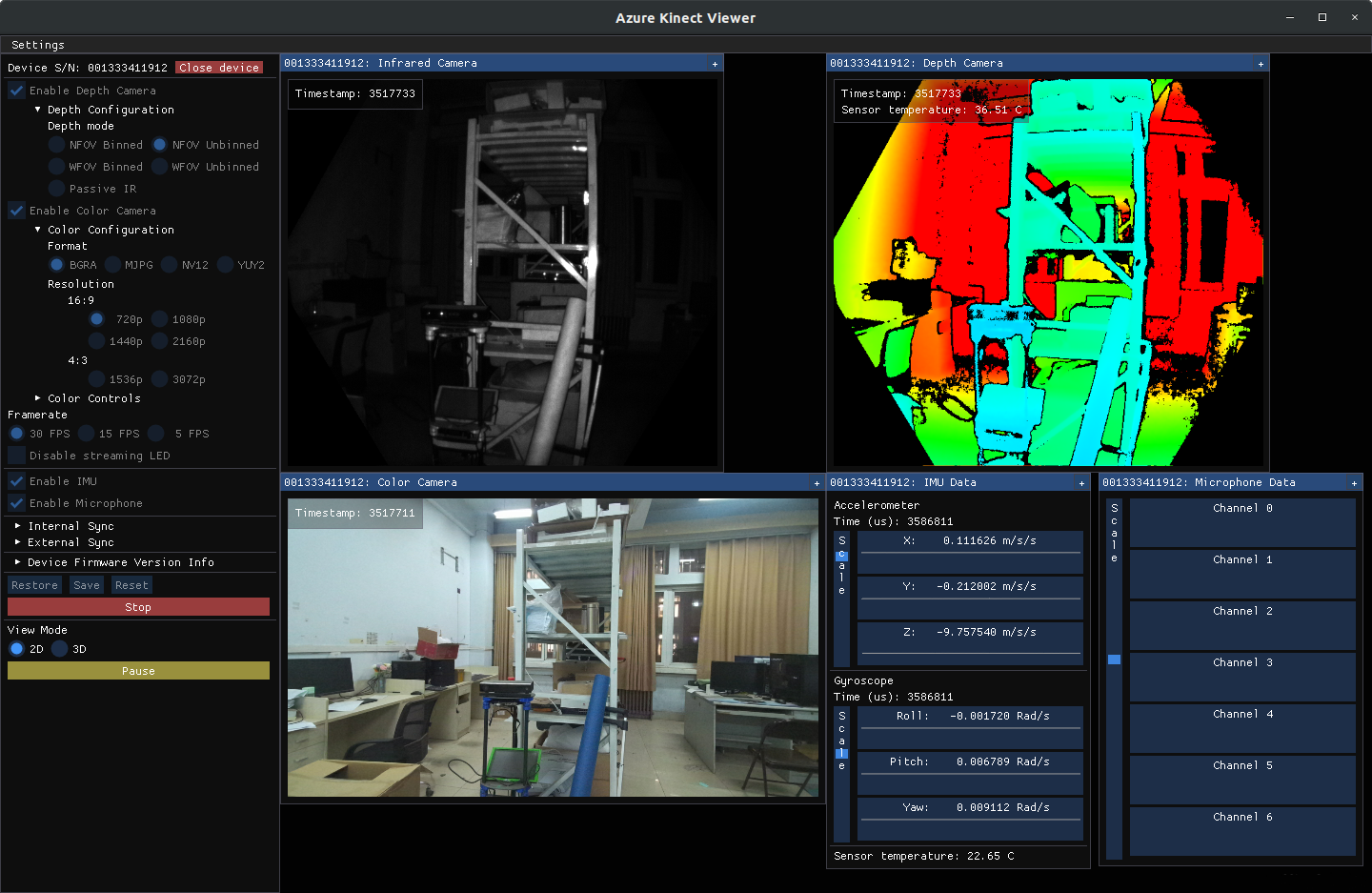

Azure Kinect SDK-v1.4.2(软件包)

[!NOTE]

鄙人在

Ubuntu18.04下是通过源码编译安装的,在Ubuntu20.04下是通过deb包直接安装的。

下载软件包:

wget -q --show-progress https://packages.microsoft.com/ubuntu/18.04/prod/pool/main/libk/libk4a1.4/libk4a1.4_1.4.2_amd64.deb -O ./libk4a1.4_1.4.2_amd64.deb && \

wget -q --show-progress https://packages.microsoft.com/ubuntu/18.04/prod/pool/main/libk/libk4a1.4-dev/libk4a1.4-dev_1.4.2_amd64.deb -O ./libk4a1.4-dev_1.4.2_amd64.deb && \

wget -q --show-progress https://packages.microsoft.com/ubuntu/18.04/prod/pool/main/k/k4a-tools/k4a-tools_1.4.2_amd64.deb -O ./k4a-tools_1.4.2_amd64.deb

安装:

sudo apt install -y ./libk4a1.4_1.4.2_amd64.deb && \

sudo cp /usr/lib/x86_64-linux-gnu/libk4a1.4/libdepthengine.so.2.0 /usr/lib/ && \

sudo cp /usr/lib/libdepthengine.so.2.0 /usr/lib/x86_64-linux-gnu/

sudo apt install -y ./libk4a1.4-dev_1.4.2_amd64.deb ./k4a-tools_1.4.2_amd64.deb

配置udev规则:

sudo tee /etc/udev/rules.d/99-k4a.rules > /dev/null << EOF

# Bus 002 Device 116: ID 045e:097a Microsoft Corp. - Generic Superspeed USB Hub

# Bus 001 Device 015: ID 045e:097b Microsoft Corp. - Generic USB Hub

# Bus 002 Device 118: ID 045e:097c Microsoft Corp. - Azure Kinect Depth Camera

# Bus 002 Device 117: ID 045e:097d Microsoft Corp. - Azure Kinect 4K Camera

# Bus 001 Device 016: ID 045e:097e Microsoft Corp. - Azure Kinect Microphone Array

BUS!="usb", ACTION!="add", SUBSYSTEM!=="usb_device", GOTO="k4a_logic_rules_end"

ATTRS{idVendor}=="045e", ATTRS{idProduct}=="097a", MODE="0666", GROUP="plugdev"

ATTRS{idVendor}=="045e", ATTRS{idProduct}=="097b", MODE="0666", GROUP="plugdev"

ATTRS{idVendor}=="045e", ATTRS{idProduct}=="097c", MODE="0666", GROUP="plugdev"

ATTRS{idVendor}=="045e", ATTRS{idProduct}=="097d", MODE="0666", GROUP="plugdev"

ATTRS{idVendor}=="045e", ATTRS{idProduct}=="097e", MODE="0666", GROUP="plugdev"

LABEL="k4a_logic_rules_end"

EOF

科大讯飞语音

https://www.xfyun.cn/sdk/dispatcher

sudo apt install -y sox libsox-fmt-all pavucontrol

# 如果编译时有相关warning再修改

sudo gedit /usr/include/pcl-1.8/pcl/visualization/cloud_viewer.h

修改一下:

// @line: 199左右

private:

/** \brief Private implementation. */

struct CloudViewer_impl;

// std::auto_ptr<CloudViewer_impl> impl_;

std::shared_ptr<CloudViewer_impl> impl_;

boost::signals2::connection

registerMouseCallback (boost::function<void (const pcl::visualization::MouseEvent&)>);

下载所需SDK,将libs/x64/libmsc.so文件拷贝至工作空间根目录/lib/your-appid/libmsc.so。

cmake_minimum_required(VERSION 3.0.2)

project(tts_voice_test)

set(CMAKE_CXX_FLAGS "-std=c++11")

find_package(

catkin REQUIRED

COMPONENTS roscpp

rospy

std_msgs

genmsg

actionlib_msgs

actionlib

rostime

sensor_msgs

message_filters

cv_bridge

image_transport

compressed_image_transport

compressed_depth_image_transport

tf

tf2

tf2_ros

tf2_geometry_msgs

geometry_msgs

message_generation

nodelet

kinova_msgs)

find_package(k4a REQUIRED)

find_package(realsense2 REQUIRED)

set(OpenCV_DIR "/usr/local/lib/cmake/opencv4")

find_package(OpenCV REQUIRED)

find_package(OpenMP)

find_package(PCL REQUIRED)

add_message_files(FILES person_msgs.msg BoundingBoxes.msg BoundingBox.msg)

generate_messages(DEPENDENCIES std_msgs)

catkin_package(

INCLUDE_DIRS

CATKIN_DEPENDS

include

roscpp

rospy

std_msgs

message_runtime

actionlib_msgs)

if(OPENMP_FOUND)

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} ${OpenMP_CXX_FLAGS}")

endif()

include_directories(/home/m0rtzz/Workspaces/tts_test_ws/include ${catkin_INCLUDE_DIRS}

${OpenCV_INCLUDE_DIRS} ${PCL_INCLUDE_DIRS})

link_directories(/home/m0rtzz/Workspaces/tts_test_ws/lib/)

add_executable(tts_voice_test src/tts_voice_test.cc)

add_dependencies(tts_voice_test ${${PROJECT_NAME}_EXPORTED_TARGETS}

${catkin_EXPORTED_TARGETS})

target_link_libraries(

tts_voice_test

PRIVATE

k4a::k4a

${PCL_LIBRARIES}

${catkin_LIBRARIES}

${OpenCV_LIBRARIES}

${realsense2_LIBRARY}

-lrt

-ldl

-lhpdf

-lcurl

-pthread

-lasound

-ljsoncpp

/home/m0rtzz/Workspaces/tts_voice_test_ws/lib/your-appid/libmsc.so # 替换为你的appid

)

打开终端:

catkin_make

若找不到asoundlib.h文件打开终端输入:

sudo apt install -y libasound2-dev

编译通过~

librealsense及realsense-ros工作空间

sudo apt install -y ros-${ROS_DISTRO}-realsense2-camera ros-${ROS_DISTRO}-rgbd-launch

安装realsense sdk:

sudo apt-key adv --keyserver keyserver.ubuntu.com --recv-key F6E65AC044F831AC80A06380C8B3A55A6F3EFCDE || sudo apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv-key F6E65AC044F831AC80A06380C8B3A55A6F3EFCDE

sudo add-apt-repository "deb https://librealsense.intel.com/Debian/apt-repo $(lsb_release -cs) main" -u

sudo apt update -y

安装realsense lib:

sudo apt install -y librealsense2-dkms librealsense2-utils

安装gcc-4.9和g++-4.9:

sudo tee -a /etc/apt/sources.list > /dev/null << EOF

# gcc-4.9, g++4.9

deb https://mirrors.hust.edu.cn/ubuntu/ xenial universe

EOF

sudo apt update -y

sudo apt install -y gcc-4.9 g++-4.9

# 注释掉xenial软件源

sudo sed -i '/^deb https:\/\/mirrors.hust.edu.cn\/ubuntu\/ xenial universe/s/^/# /' /etc/apt/sources.list && sudo apt update -y

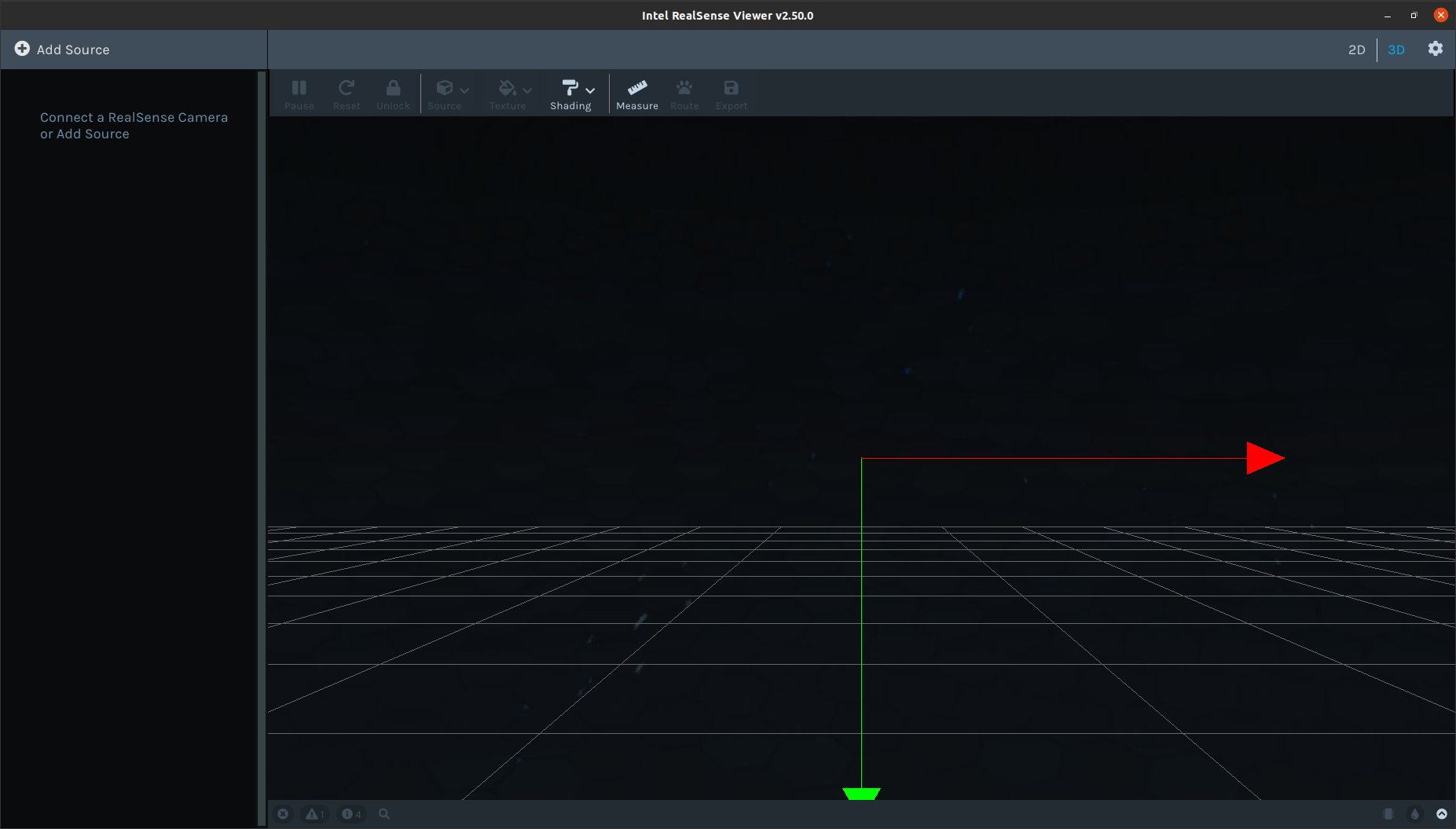

测试:

realsense-viewer

克隆librealsense源码并指定版本为v2.50.0:

git clone -b v2.50.0 https://github.com/IntelRealSense/librealsense.git librealsense-2.50.0

或公益加速源:

git clone -b v2.50.0 https://ghp.ci/https://github.com/IntelRealSense/librealsense.git librealsense-2.50.0

安装依赖:

sudo apt install -y libssl-dev libgtk-3-dev libusb-1.0-0-dev libglfw3-dev libgl1-mesa-dev libglu1-mesa-dev

进入刚才克隆的librealsense文件夹内:

cd librealsense-2.50.0/

./scripts/setup_udev_rules.sh

# The Bionic patches are maintained for Bionic Beaver LTS kernels 4.1[5/8], 5.[0/3/4] (Ubuntu18.04,`uname -r`查看自己的内核版本)

# The Focal patches are maintained for Ubuntu LTS with kernel 5.4, 5.8, 5.11 (Ubuntu20.04,`uname -r`查看自己的内核版本)

# 貌似不执行也不影响

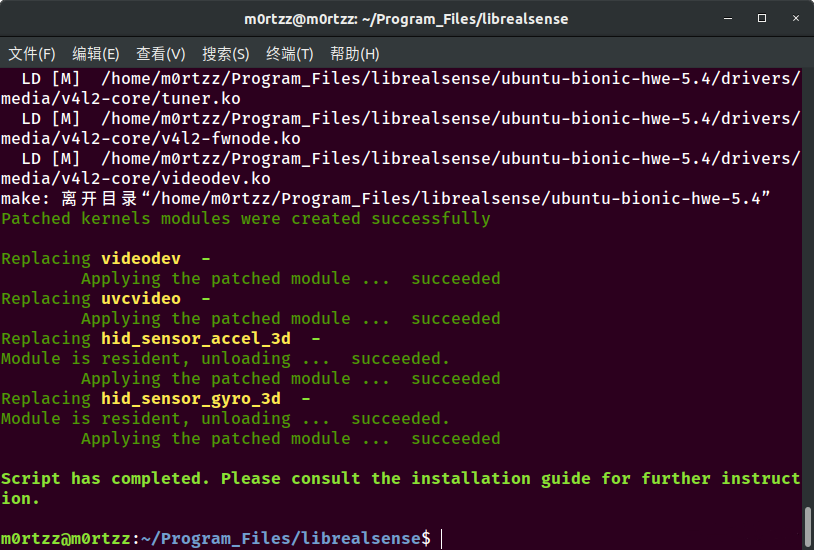

./scripts/patch-realsense-ubuntu-lts.sh

注意:上面的命令可能执行过慢,请耐心等待,或者科学的上网~

完成结果如下:

之后输入:

mkdir build && cd build/

# @file: $(git rev-parse --show-toplevel)/CMakeLists.txt

# @line: 3左右

project(librealsense2 LANGUAGES CXX C)

# NOTE: Modified by M0rtzz # [!code ++]

LINK_LIBRARIES(-lcurl -lcrypto) # [!code ++]

include(CMake/lrs_options.cmake)

cmake \

-D CMAKE_BUILD_TYPE=Release \

-D BUILD_EXAMPLES=true \

..

以下编译过慢,使用CPU最大线程进行make,速度会快很多:

sudo make -j$(nproc)

sudo make -j$(nproc) install

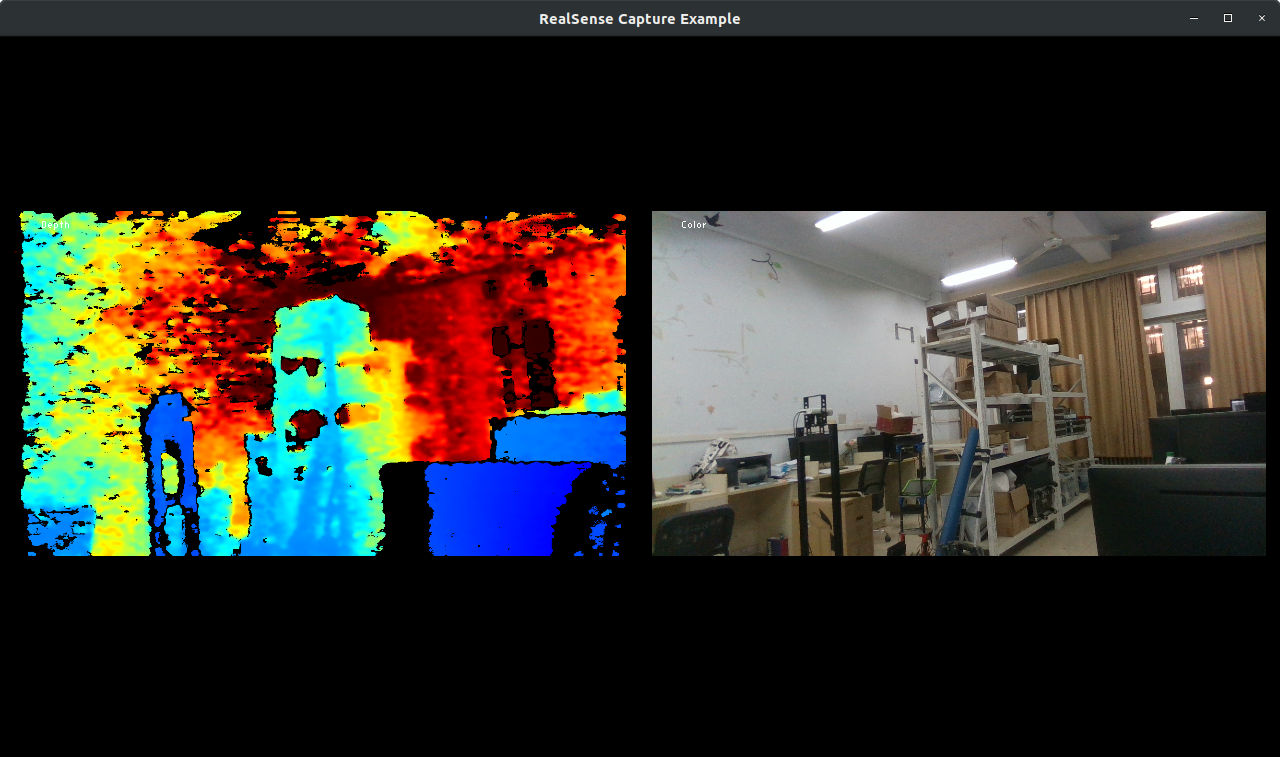

测试:

cd examples/capture/

./rs-capture

接下来我们配置realsense-ros工作空间:

cd catkin_ws/src/

下载功能包:

git clone -b ros1-legacy https://github.com/IntelRealSense/realsense-ros.git realsense-ros

或公益加速源:

git clone -b ros1-legacy https://ghp.ci/https://github.com/IntelRealSense/realsense-ros.git realsense-ros

cd ..

catkin_make \

-D CMAKE_BUILD_TYPE=Release \

-D CATKIN_ENABLE_TESTING=False

catkin_make install

测试:

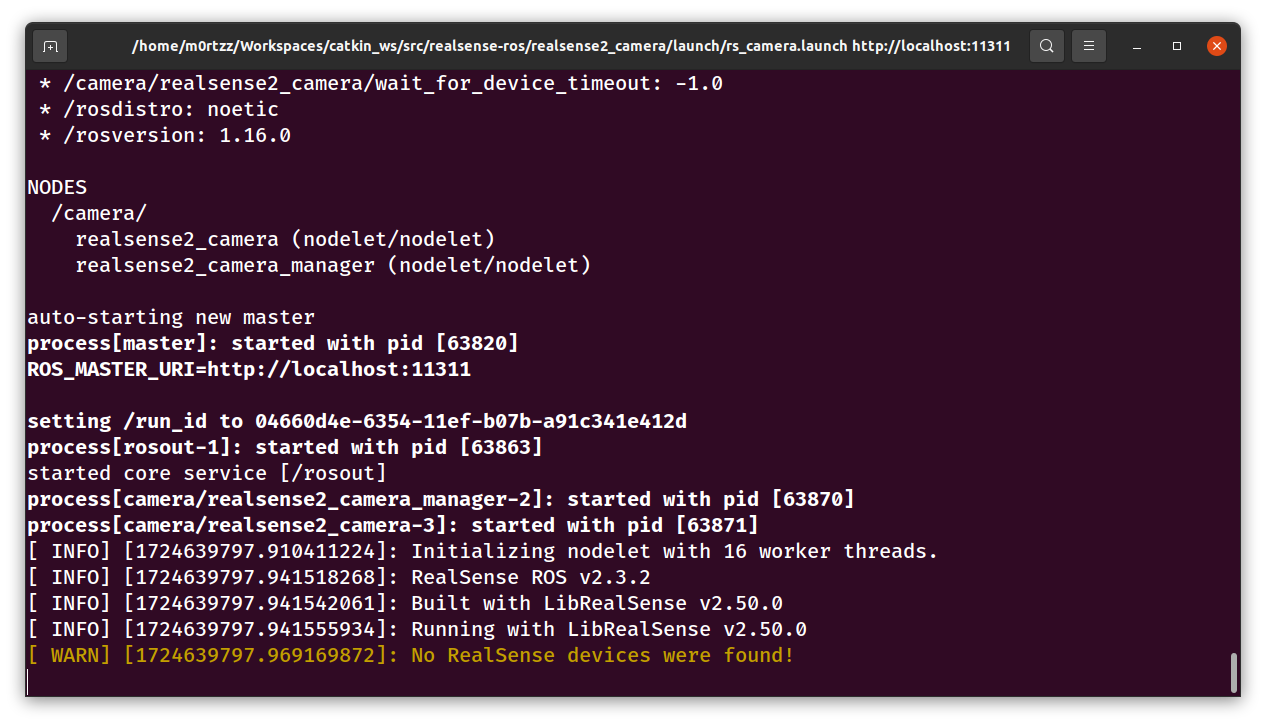

roslaunch realsense2_camera rs_camera.launch

还没安摄像头~

kinova-ros机械臂工作空间

cd catkin_ws/src/

catkin_init_workspace

cd ..

catkin_make

cd src/

git clone https://github.com/Kinovarobotics/kinova-ros.git kinova-ros

或公益加速源:

git clone https://ghp.ci/https://github.com/Kinovarobotics/kinova-ros.git kinova-ros

cd ..

安装缺少的moveit中相应的功能包 :

sudo apt install -y ros-${ROS_DISTRO}-moveit-visual-tools ros-${ROS_DISTRO}-moveit-ros-planning-interface

catkin_make

sudo cp src/kinova-ros/kinova_driver/udev/10-kinova-arm.rules /etc/udev/rules.d/

安装Moveit和pr2:

sudo apt install -y $(apt-cache search ros-${ROS_DISTRO}-pr2- | grep -v "ros-${ROS_DISTRO}-pr2-apps" | cut -d' ' -f1)

完成~

机器人导航

[!CAUTION]

ZZU-SR的童鞋请注意,此小节只需安装软件包,其他内容是之前听课做的笔记,导航相关代码直接

Copy比赛电脑的catkin_ws中的mrobot即可。

Dependency (ESSENTIAL)

sudo apt install -y "ros-${ROS_DISTRO}-move-base*" "ros-${ROS_DISTRO}-turtlebot3-*"

sudo apt install -y ros-${ROS_DISTRO}-dwa-local-planner ros-${ROS_DISTRO}-joy ros-${ROS_DISTRO}-teleop-twist-joy ros-${ROS_DISTRO}-teleop-twist-keyboard ros-${ROS_DISTRO}-laser-proc ros-${ROS_DISTRO}-rgbd-launch ros-${ROS_DISTRO}-depthimage-to-laserscan ros-${ROS_DISTRO}-rosserial-arduino ros-${ROS_DISTRO}-rosserial-python ros-${ROS_DISTRO}-rosserial-server ros-${ROS_DISTRO}-rosserial-client ros-${ROS_DISTRO}-rosserial-msgs ros-${ROS_DISTRO}-amcl ros-${ROS_DISTRO}-map-server ros-${ROS_DISTRO}-move-base ros-${ROS_DISTRO}-urdf ros-${ROS_DISTRO}-xacro ros-${ROS_DISTRO}-compressed-image-transport ros-${ROS_DISTRO}-rqt-image-view ros-${ROS_DISTRO}-gmapping ros-${ROS_DISTRO}-navigation ros-${ROS_DISTRO}-interactive-markers

安装gmapping包(用于构建地图):

sudo apt install -y ros-${ROS_DISTRO}-gmapping

安装地图服务包(用于保存与读取地图):

sudo apt install -y ros-${ROS_DISTRO}-map-server

安装navigation包(用于定位以及路径规划):

sudo apt install -y ros-${ROS_DISTRO}-navigation

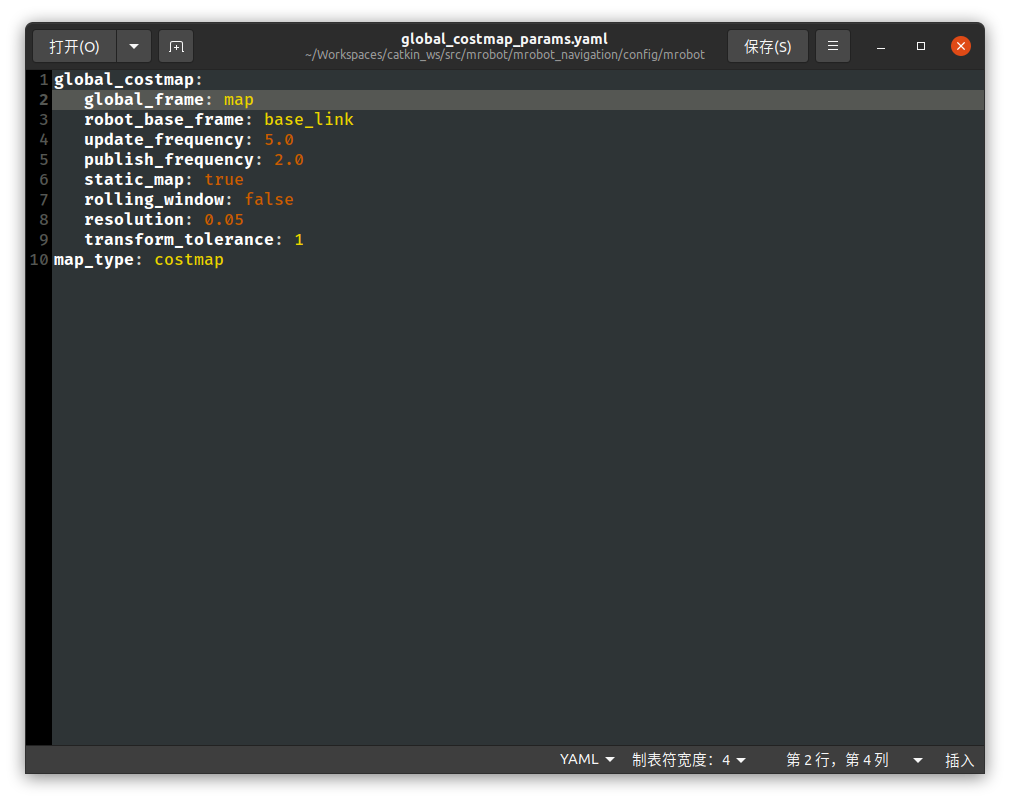

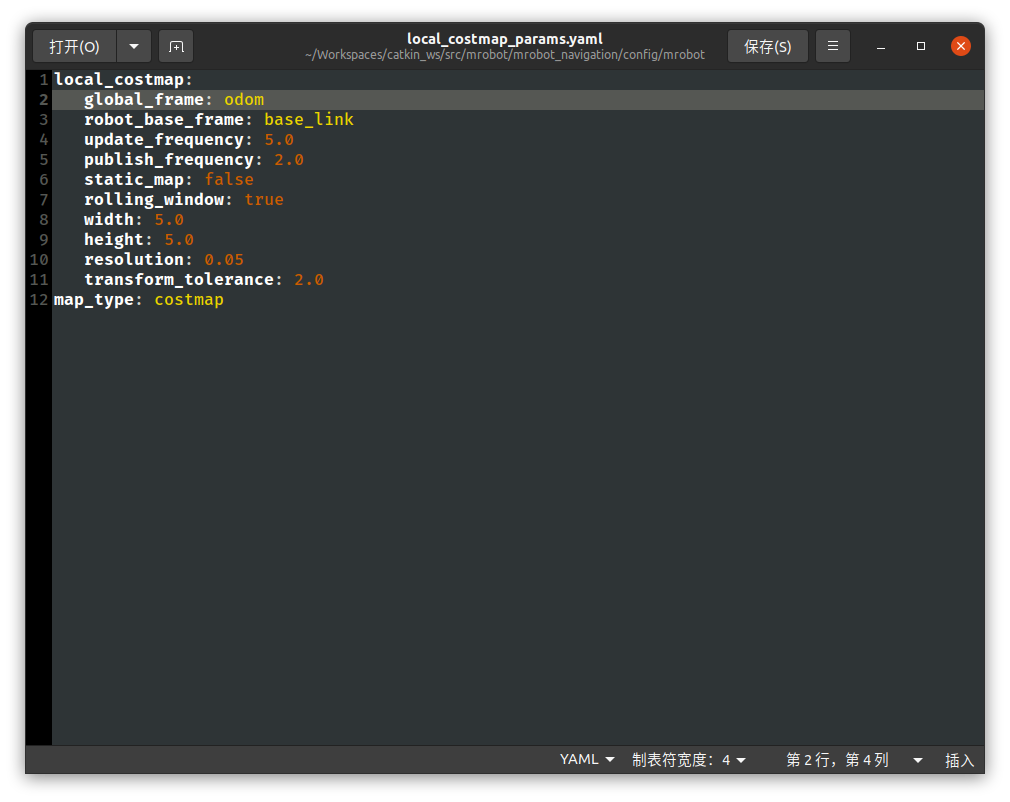

因tf和tf2迁移问题,需将工作空间内的所有global_costmap_params.yaml和local_costmap_params.yaml文件里的头几行去掉/,返回工作空间根目录下重新编译。

Reference:

http://wiki.ros.org/tf2/Migration#Removal_of_support_for_tf_prefix

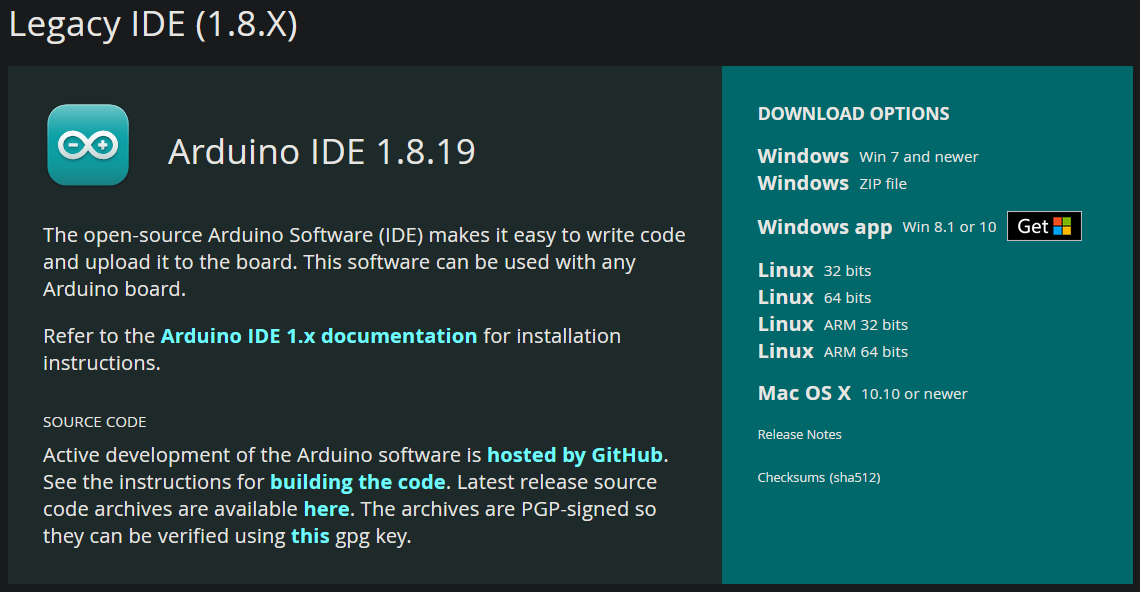

Arduino IDE (NOT REQUIRED) (EOL)

https://www.arduino.cc/en/software

下载安装包:

tar -xvf arduino-1.8.19-linux64.tar.xz && cd arduino-1.8.19/

sudo chmod +x install.sh

sudo ./install.sh

听课笔记 (NOT REQUIRED) (EOL)

首先创建实体导航工作空间:

mkdir -p navigation_entity_test_ws/src && cd navigation_entity_test_ws/src/

catkin_create_pkg entity_test roscpp rospy std_msgs gmapping map_server amcl move_base

cd .. && catkin_make

cd src/ && catkin_create_pkg robot_start_test roscpp rospy std_msgs ros_arduino_python usb_cam rplidar_ros

cd robot_start_test/ && mkdir launch && cd launch/ && touch start_test.launch

<!-- @file: start_test.launch

@brief: 机器人启动文件:

1.启动底盘

2.启动激光雷达

3.启动摄像头

-->

<launch>

<include file="$(find ros_arduino_python)/launch/arduino.launch" />

<include file="$(find usb_cam)/launch/usb_cam-test.launch" />

<include file="$(find rplidar_ros)/launch/rplidar.launch" />

</launch>

接下来创建机器人模型相关的功能包:

cd ../../src/

catkin_create_pkg robot_description_test urdf xacro

在功能包下新建urdf目录,编写具体的urdf文件:

cd robot_description_test/ && mkdir urdf

cd urdf/ && touch {robot.urdf.xacro,robot_base.urdf.xacro,robot_camera.urdf.xacro,robot_laser.urdf.xacro} && code robot.urdf.xacro

将下列代码粘贴进去:

<!-- @file: robot.urdf.xacro -->

<robot name="robot_test" xmlns:xacro="http://wiki.ros.org/xacro">

<xacro:include filename="robot_base.urdf.xacro" />

<xacro:include filename="robot_camera.urdf.xacro" />

<xacro:include filename="robot_laser.urdf.xacro" />

</robot>

保存退出,打开终端输入:

code robot_base.urdf.xacro

将下列代码粘贴进去:

robot_base.urdf.xacro

<!-- @file: robot_base.urdf.xacro -->

<robot name="robot_test" xmlns:xacro="http://wiki.ros.org/xacro">

<xacro:property name="footprint_radius" value="0.001" />

<link name="base_footprint">

<visual>

<geometry>

<sphere radius="${footprint_radius}" />

</geometry>

</visual>

</link>

<xacro:property name="base_radius" value="0.1" />

<xacro:property name="base_length" value="0.08" />

<xacro:property name="lidi" value="0.015" />

<xacro:property name="base_joint_z" value="${base_length / 2 + lidi}" />

<link name="base_link">

<visual>

<geometry>

<cylinder radius="0.1" length="0.08" />

</geometry>

<origin xyz="0 0 0" rpy="0 0 0" />

<material name="baselink_color">

<color rgba="1.0 0.5 0.2 0.5" />

</material>

</visual>

</link>

<joint name="link2footprint" type="fixed">

<parent link="base_footprint" />

<child link="base_link" />

<origin xyz="0 0 0.055" rpy="0 0 0" />

</joint>

<xacro:property name="wheel_radius" value="0.0325" />

<xacro:property name="wheel_length" value="0.015" />

<xacro:property name="PI" value="3.1415927" />

<xacro:property name="wheel_joint_z" value="${(base_length / 2 + lidi - wheel_radius) * -1}" />

<xacro:macro name="wheel_func" params="wheel_name flag">

<link name="${wheel_name}_wheel">

<visual>

<geometry>

<cylinder radius="${wheel_radius}" length="${wheel_length}" />

</geometry>

<origin xyz="0 0 0" rpy="${PI / 2} 0 0" />

<material name="wheel_color">

<color rgba="0 0 0 0.3" />

</material>

</visual>

</link>

<joint name="${wheel_name}2link" type="continuous">

<parent link="base_link" />

<child link="${wheel_name}_wheel" />

<origin xyz="0 ${0.1 * flag} ${wheel_joint_z}" rpy="0 0 0" />

<axis xyz="0 1 0" />

</joint>

</xacro:macro>

<xacro:wheel_func wheel_name="left" flag="1" />

<xacro:wheel_func wheel_name="right" flag="-1" />

<xacro:property name="small_wheel_radius" value="0.0075" />

<xacro:property name="small_joint_z" value="${(base_length / 2 + lidi - small_wheel_radius) * -1}" />

<xacro:macro name="small_wheel_func" params="small_wheel_name flag">

<link name="${small_wheel_name}_wheel">

<visual>

<geometry>

<sphere radius="${small_wheel_radius}" />

</geometry>

<origin xyz="0 0 0" rpy="0 0 0" />

<material name="wheel_color">

<color rgba="0 0 0 0.3" />

</material>

</visual>

</link>

<joint name="${small_wheel_name}2link" type="continuous">

<parent link="base_link" />

<child link="${small_wheel_name}_wheel" />

<origin xyz="${0.08 * flag} 0 ${small_joint_z}" rpy="0 0 0" />

<axis xyz="0 1 0" />

</joint>

</xacro:macro >

<xacro:small_wheel_func small_wheel_name="front" flag="1"/>

<xacro:small_wheel_func small_wheel_name="back" flag="-1"/>

</robot>

保存退出,打开终端输入:

code robot_camera.urdf.xacro

将下列代码粘贴进去:

<!-- @file: robot_camera.urdf.xacro -->

<robot name="robot_test" xmlns:xacro="http://wiki.ros.org/xacro">

<xacro:property name="camera_length" value="0.02" />

<xacro:property name="camera_width" value="0.05" />

<xacro:property name="camera_height" value="0.05" />

<xacro:property name="joint_camera_x" value="0.08" />

<xacro:property name="joint_camera_y" value="0" />

<xacro:property name="joint_camera_z" value="${base_length / 2 + camera_height / 2}" />

<link name="camera">

<visual>

<geometry>

<box size="${camera_length} ${camera_width} ${camera_height}" />

</geometry>

<origin xyz="0 0 0" rpy="0 0 0" />

<material name="black">

<color rgba="0 0 0 0.8" />

</material>

</visual>

</link>

<joint name="camera2base" type="fixed">

<parent link="base_link" />

<child link="camera" />

<origin xyz="${joint_camera_x} ${joint_camera_y} ${joint_camera_z}" rpy="0 0 0" />

</joint>

</robot>

保存退出,打开终端输入:

code robot_laser.urdf.xacro

将下列代码粘贴进去:

<!-- @file: robot_laser.urdf.xacro -->

<robot name="robot_test" xmlns:xacro="http://wiki.ros.org/xacro">

<xacro:property name="support_radius" value="0.01" />

<xacro:property name="support_length" value="0.15" />

<xacro:property name="laser_radius" value="0.03" />

<xacro:property name="laser_length" value="0.05" />

<xacro:property name="joint_support_x" value="0" />

<xacro:property name="joint_support_y" value="0" />

<xacro:property name="joint_support_z" value="${base_length / 2 + support_length / 2}" />

<xacro:property name="joint_laser_x" value="0" />

<xacro:property name="joint_laser_y" value="0" />

<xacro:property name="joint_laser_z" value="${support_length / 2 + laser_length / 2}" />

<link name="support">

<visual>

<geometry>

<cylinder radius="${support_radius}" length="${support_length}" />

</geometry>

<material name="yellow">

<color rgba="0.8 0.5 0.0 0.5" />

</material>

</visual>

</link>

<joint name="support2base" type="fixed">

<parent link="base_link" />

<child link="support"/>

<origin xyz="${joint_support_x} ${joint_support_y} ${joint_support_z}" rpy="0 0 0" />

</joint>

<link name="laser">

<visual>

<geometry>

<cylinder radius="${laser_radius}" length="${laser_length}" />

</geometry>

<material name="black">

<color rgba="0 0 0 0.5" />

</material>

</visual>

</link>

<joint name="laser2support" type="fixed">

<parent link="support" />

<child link="laser"/>

<origin xyz="${joint_laser_x} ${joint_laser_y} ${joint_laser_z}" rpy="0 0 0" />

</joint>

</robot>

保存退出,打开终端:

cd .. && mkdir launch

cd launch/ && touch robot_test.launch && code robot_test.launch

将下列代码粘贴进去:

<!-- @file: robot_test.launch -->

<launch>

<param name="robot_description" command="$(find xacro)/xacro $(find robot_description_test)/urdf/robot.urdf.xacro" />

<node pkg="joint_state_publisher" name="joint_state_publisher" type="joint_state_publisher" />

<node pkg="robot_state_publisher" name="robot_state_publisher" type="robot_state_publisher" />

</launch>

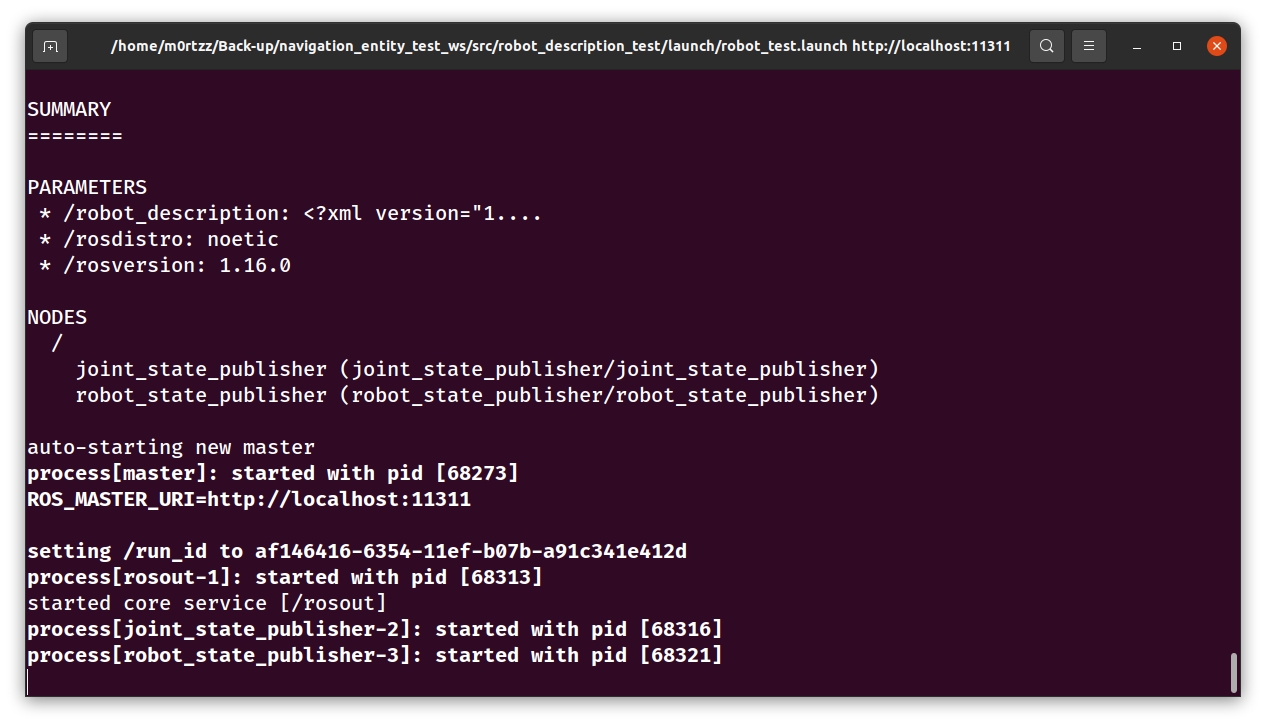

保存退出,打开终端:

cd ../../../ && echo 'source /home/m0rtzz/Workspaces/navigation_entity_test_ws/devel/setup.bash' >> ~/.bashrc && source ~/.bashrc

测试一下:

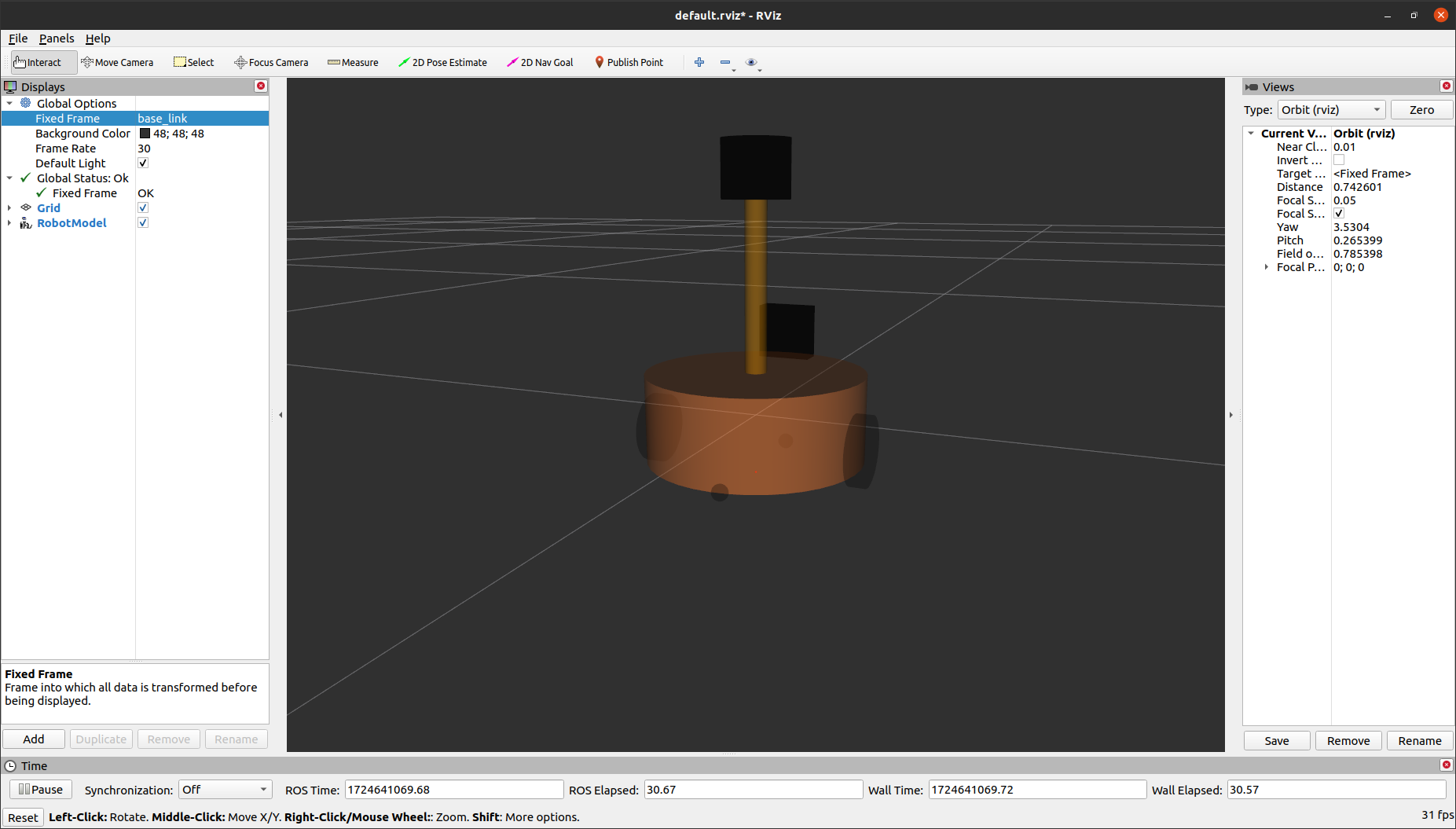

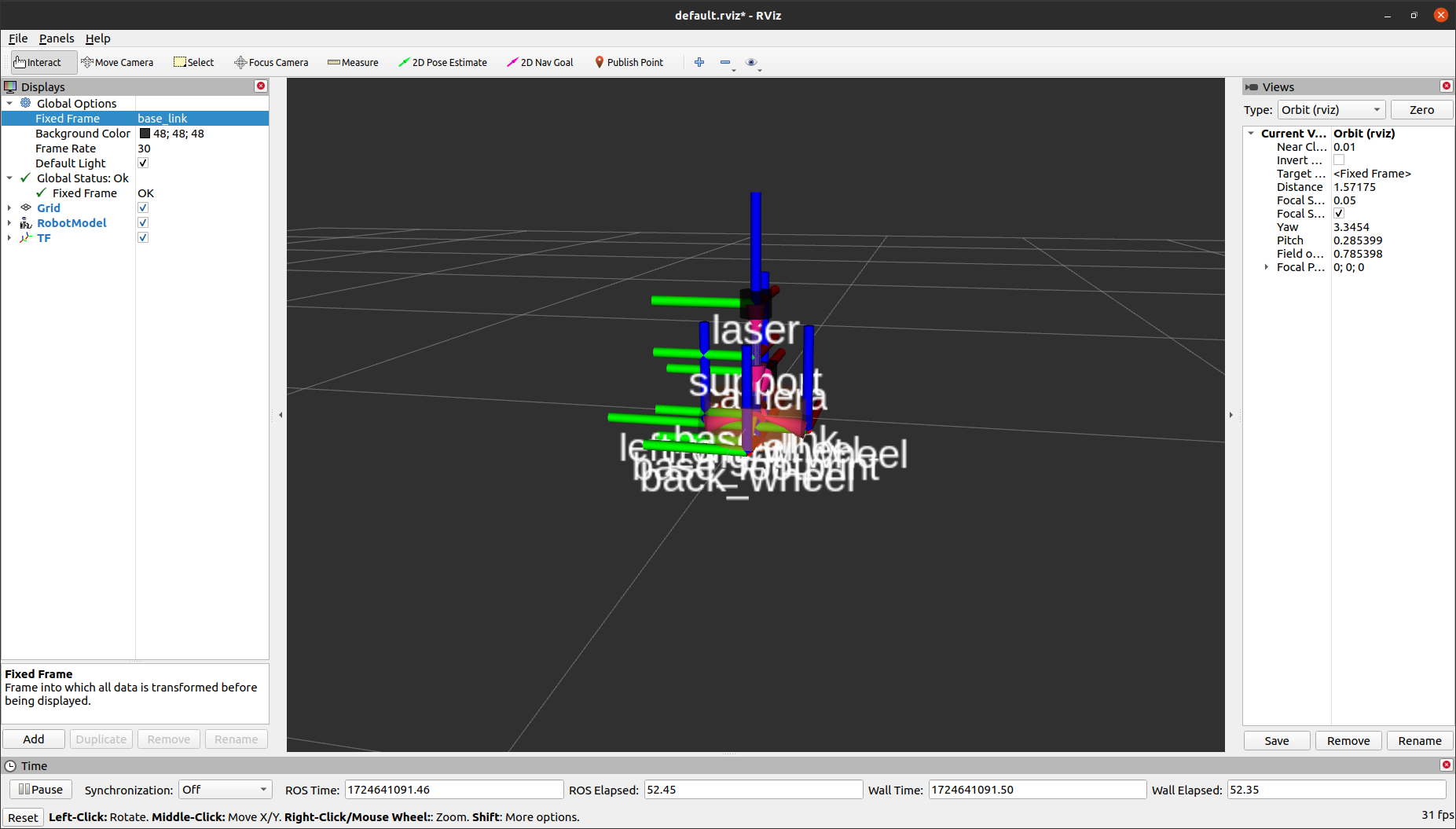

roslaunch robot_description_test robot_test.launch

之后Ctrl+Alt+T打开一个新的终端,输入:

rviz

将Fixed Frame设置为base_footprint:

Add一个RobotModel:

Add一个TF:

cd src/entity_test/ && mkdir launch && cd launch/

touch gmapping.launch && code gmapping.launch

将下列代码粘贴进去:

<!-- @file: gmapping.launch -->

<launch>

<node pkg="gmapping" type="slam_gmapping" name="slam_gmapping" output="screen">

<remap from="scan" to="scan"/>

<param name="base_frame" value="base_footprint"/><!--底盘坐标系-->

<param name="odom_frame" value="odom"/> <!--里程计坐标系-->

<param name="map_update_interval" value="5.0"/>

<param name="maxUrange" value="16.0"/>

<param name="sigma" value="0.05"/>

<param name="kernelSize" value="1"/>

<param name="lstep" value="0.05"/>

<param name="astep" value="0.05"/>

<param name="iterations" value="5"/>

<param name="lsigma" value="0.075"/>

<param name="ogain" value="3.0"/>

<param name="lskip" value="0"/>

<param name="srr" value="0.1"/>

<param name="srt" value="0.2"/>

<param name="str" value="0.1"/>

<param name="stt" value="0.2"/>

<param name="linearUpdate" value="1.0"/>

<param name="angularUpdate" value="0.5"/>

<param name="temporalUpdate" value="3.0"/>

<param name="resampleThreshold" value="0.5"/>

<param name="particles" value="30"/>

<param name="xmin" value="-50.0"/>

<param name="ymin" value="-50.0"/>

<param name="xmax" value="50.0"/>

<param name="ymax" value="50.0"/>

<param name="delta" value="0.05"/>

<param name="llsamplerange" value="0.01"/>

<param name="llsamplestep" value="0.01"/>

<param name="lasamplerange" value="0.005"/>

<param name="lasamplestep" value="0.005"/>

</node>

</launch>

cd .. && mkdir map

cd launch/ && touch map_save.launch && code map_save.launch

将下列代码粘贴进去:

<!-- @file: map_save.launch -->

<launch>

<arg name="filename" value="$(find entity_test)/map/nav" />

<node name="map_save" pkg="map_server" type="map_saver" args="-f $(arg filename)" />

</launch>

touch map_server.launch && code map_server.launch

将下列代码粘贴进去:

<!-- @file: map_server.launch -->

<launch>

<!-- 设置地图的配置文件 -->

<arg name="map" default="nav.yaml" />

<!-- 运行地图服务器,并且加载设置的地图-->

<node name="map_server" pkg="map_server" type="map_server" args="$(find entity_test)/map/$(arg map)"/>

</launch>

touch amcl.launch && code amcl.launch

将下列代码粘贴进去:

<!-- @file: amcl.launch -->

<launch>

<node pkg="amcl" type="amcl" name="amcl" output="screen">

<!-- Publish scans from best pose at a max of 10 Hz -->

<param name="odom_model_type" value="diff"/><!-- 里程计模式为差分 -->

<param name="odom_alpha5" value="0.1"/>

<param name="transform_tolerance" value="0.2" />

<param name="gui_publish_rate" value="10.0"/>

<param name="laser_max_beams" value="30"/>

<param name="min_particles" value="500"/>

<param name="max_particles" value="5000"/>

<param name="kld_err" value="0.05"/>

<param name="kld_z" value="0.99"/>

<param name="odom_alpha1" value="0.2"/>

<param name="odom_alpha2" value="0.2"/>

<!-- translation std dev, m -->

<param name="odom_alpha3" value="0.8"/>

<param name="odom_alpha4" value="0.2"/>

<param name="laser_z_hit" value="0.5"/>

<param name="laser_z_short" value="0.05"/>

<param name="laser_z_max" value="0.05"/>

<param name="laser_z_rand" value="0.5"/>

<param name="laser_sigma_hit" value="0.2"/>

<param name="laser_lambda_short" value="0.1"/>

<param name="laser_lambda_short" value="0.1"/>

<param name="laser_model_type" value="likelihood_field"/>

<!-- <param name="laser_model_type" value="beam"/> -->

<param name="laser_likelihood_max_dist" value="2.0"/>

<param name="update_min_d" value="0.2"/>

<param name="update_min_a" value="0.5"/>

<param name="odom_frame_id" value="odom"/><!-- 里程计坐标系 -->

<param name="base_frame_id" value="base_footprint"/><!-- 添加机器人基坐标系 -->

<param name="global_frame_id" value="map"/><!-- 添加地图坐标系 -->

</node>

</launch>

cd .. && mkdir param && cd param/ && touch {costmap_common_params.yaml,local_costmap_params.yaml,global_costmap_params.yaml,base_local_planner_params.yaml} && code .

将下列几个代码分别粘贴进去:

# @file: base_local_planner_params.yaml

TrajectoryPlannerROS:

# Robot Configuration Parameters

max_vel_x: 0.5 # X 方向最大速度

min_vel_x: 0.1 # X 方向最小速速

max_vel_theta: 1.0 #

min_vel_theta: -1.0

min_in_place_vel_theta: 1.0

acc_lim_x: 1.0 # X 加速限制

acc_lim_y: 0.0 # Y 加速限制

acc_lim_theta: 0.6 # 角速度加速限制

# Goal Tolerance Parameters,目标公差

xy_goal_tolerance: 0.10

yaw_goal_tolerance: 0.05

# Differential-drive robot configuration

# 是否是全向移动机器人

holonomic_robot: false

# Forward Simulation Parameters,前进模拟参数

sim_time: 0.8

vx_samples: 18

vtheta_samples: 20

sim_granularity: 0.05

# @file: cost_common_params.yaml

#机器人几何参,如果机器人是圆形,设置 robot_radius,如果是其他形状设置 footprint

robot_radius: 0.12 #圆形

# footprint: [[-0.12, -0.12], [-0.12, 0.12], [0.12, 0.12], [0.12, -0.12]] #其他形状

obstacle_range: 3.0 # 用于障碍物探测,比如: 值为 3.0,意味着检测到距离小于 3 米的障碍物时,就会引入代价地图

raytrace_range: 3.5 # 用于清除障碍物,比如:值为 3.5,意味着清除代价地图中 3.5 米以外的障碍物

#膨胀半径,扩展在碰撞区域以外的代价区域,使得机器人规划路径避开障碍物

inflation_radius: 0.2

#代价比例系数,越大则代价值越小

cost_scaling_factor: 3.0

#地图类型

map_type: costmap

#导航包所需要的传感器

observation_sources: scan

#对传感器的坐标系和数据进行配置。这个也会用于代价地图添加和清除障碍物。例如,你可以用激光雷达传感器用于在代价地图添加障碍物,再添加kinect用于导航和清除障碍物。

scan:

{

sensor_frame: laser,

data_type: LaserScan,

topic: scan,

marking: true,

clearing: true,

}

# @file: global_costmap_params.yaml

global_costmap:

global_frame: map #地图坐标系

robot_base_frame: base_footprint #机器人坐标系

# 以此实现坐标变换

update_frequency: 1.0 #代价地图更新频率

publish_frequency: 1.0 #代价地图的发布频率

transform_tolerance: 0.5 #等待坐标变换发布信息的超时时间

static_map: true # 是否使用一个地图或者地图服务器来初始化全局代价地图,如果不使用静态地图,这个参数为false.

# @file: local_costmap_params.yaml

local_costmap:

global_frame: odom #里程计坐标系

robot_base_frame: base_footprint #机器人坐标系

update_frequency: 10.0 #代价地图更新频率

publish_frequency: 10.0 #代价地图的发布频率

transform_tolerance: 0.5 #等待坐标变换发布信息的超时时间

static_map: false #不需要静态地图,可以提升导航效果

rolling_window: true #是否使用动态窗口,默认为false,在静态的全局地图中,地图不会变化

width: 3 # 局部地图宽度 单位是 m

height: 3 # 局部地图高度 单位是 m

resolution: 0.05 # 局部地图分辨率 单位是 m,一般与静态地图分辨率保持一致

cd ../launch/ && touch move_base.launch && code move_base.launch

将下列代码粘贴进去:

<!-- @file: move_base.launch -->

<launch>

<node pkg="move_base" type="move_base" respawn="false" name="move_base" output="screen" clear_params="true">

<rosparam file="$(find nav)/param/costmap_common_params.yaml" command="load" ns="global_costmap" />

<rosparam file="$(find nav)/param/costmap_common_params.yaml" command="load" ns="local_costmap" />

<rosparam file="$(find nav)/param/local_costmap_params.yaml" command="load" />

<rosparam file="$(find nav)/param/global_costmap_params.yaml" command="load" />

<rosparam file="$(find nav)/param/base_local_planner_params.yaml" command="load" />

</node>

</launch>

touch auto_slam.launch && code auto_slam.launch

将下列代码粘贴进去:

<!-- @file: auto_slam.launch -->

<launch>

<!-- 启动SLAM节点 -->

<include file="$(find entity_test)/launch/gmapping.launch" />

<!-- 运行move_base节点 -->

<include file="$(find entity_test)/launch/move_base.launch" />

</launch>

RECOMMENDED

自定义函数及别名

打开终端输入:

tee -a ~/.bashrc >> /dev/null << 'EOF'

# 在最后(WARNING:如果安装了Anaconda3,需要加在__conda_setup之前)加入如下代码段:

# 显示git分支

function customizePrompt()

{

local none='\[\033[00m\]' # 重置所有属性到默认状态

local green='\[\033[0;32m\]' # 绿色,用于用户名和主机名

local user_at_host="${green}\[\033[1m\]\u@\h${none}" # 用户名和主机名显示为绿色并加粗

local blue='\[\033[0;34m\]' # 蓝色,用于当前工作目录

local git_branch_color='\[\033[1;43;37m\]' # 黄色背景,白色字体,用于git分支

local command_prompt='$' # 命令提示符

if [ $UID -eq 0 ]; then

command_prompt='#'

fi

local working_directory="${blue}\[\033[1m\]\w${none}" # 当前工作目录显示为蓝色并加粗

# 使用__git_ps1函数来显示当前git分支,使用自定义的颜色

echo "${user_at_host}:${working_directory}\$(__git_ps1 \" ${git_branch_color}[%s]${none} \")${command_prompt} "

}

export PS1="$(customizePrompt)"

# 复制上一个命令到系统剪切板,sudo apt install -y xsel

function copyLastCommand()

{

# fc获取最后执行的命令,echo发送给xsel复制到剪切板

# -nl以列表形式显示命令历史,但不包括命令编号,-1只获取最近一条命令

echo -n $(fc -nl -1) | xsel --clipboard --input

}

# 创建一个别名,若与你的其他软件包内置命令冲突,请自行更换别名

alias clc='copyLastCommand'

# 计划关机(为了使得`.zshrc`也能用此函数,就没有使用`read -p`)

function powerOff() {

sudo shutdown -c # 取消之前的计划关机

echo "已取消之前的计划关机,下面进行新的计划~"

echo "请输入关机的年份(YYYY):"

read shutdown_year

echo "请输入关机的月份(MM):"

read shutdown_month

echo "请输入关机的日期(DD):"

read shutdown_day

echo "请输入关机的小时(24小时制,HH):"

read shutdown_hour

echo "请输入关机的分钟(MM):"

read shutdown_minute

shutdown_datetime="${shutdown_year}-${shutdown_month}-${shutdown_day} ${shutdown_hour}:${shutdown_minute}"

# 检查日期时间是否合法

date -d "${shutdown_datetime}" &>/dev/null

if [ $? -ne 0 ]; then

echo "Error:请输入合法的日期和时间格式(YYYY-MM-DD HH:MM)"

return 1

fi

# 计算等待时间(秒数)

current_timestamp=$(date +%s)

shutdown_timestamp=$(date -d "${shutdown_datetime}" +%s)

wait_time=$(echo "(${shutdown_timestamp} - ${current_timestamp}) / 60" | bc)

# 检查是否为未来时间

if [ ${wait_time} -le 0 ]; then

echo "Error:请输入未来的日期和时间"

return 1

fi

echo "计划在 ${shutdown_datetime} 关机"

sudo shutdown -h +${wait_time}

}

alias po='powerOff'

# 有效解决Anaconda3激活虚拟环境后使用`pip install`或`pip3 install`会安装到其他虚拟环境的问题

alias pip='python3 -m pip'

alias pip3='python3 -m pip'

EOF

source ~/.bashrc

这样就可以更清晰的显示git分支~

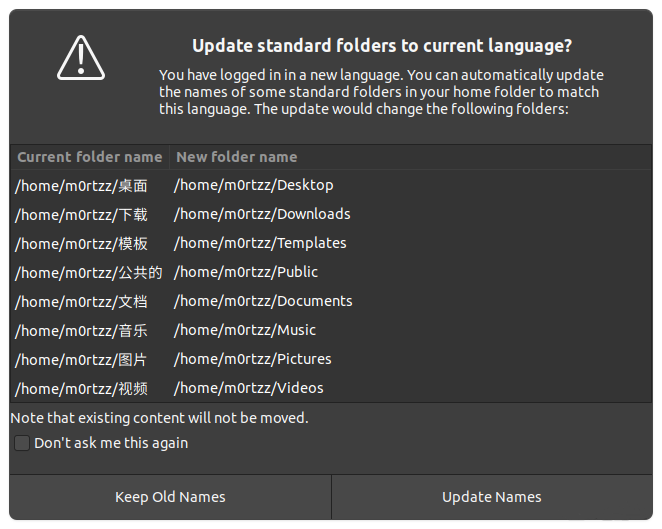

设置${HOME}下的文件夹为英文

export LANG=en_US

xdg-user-dirs-gtk-update

编辑选择右边的Update Names:

之后执行以下语句:

export LANG=zh_CN

reboot

勾选不要再次询问我,并选择保留旧的名称:

同步双系统时间

sudo apt install -y ntpdate

sudo ntpdate time.windows.com

timedatectl set-local-rtc 1 --adjust-system-clock

Software

推荐一些Linux办公常用的软件(包括wine环境下,全部下载deb格式的安装包,系统架构可通过命令uname -a查看):

搜狗输入法(下载安装包后,官方会跳转至安装教程,严格按照步骤执行)

Visual Studio Code(推荐打开Settings Sync,换电脑时设置可以同步)

仅支持Ubuntu20.04,需安装依赖包:

Linux原生微信_需要安装星火应用商店(最近的版本好像使用Fcitx输入法时中文输入有问题)

Ubuntu20.04以下版本或者不想安装星火应用商店的用户可安装Flatpak打包的微信(需安装Flatpak,下文有Flatpak安装教程):

解决基于Fcitx5的搜狗输入法无法在Flatpak版微信中进行中文输入的问题:https://github.com/web1n/wechat-universal-flatpak/issues/33#issuecomment-2222259823

wget -q --show-progress https://github.com/web1n/wechat-universal-flatpak/releases/latest/download/com.tencent.WeChat-x86_64.flatpak -O com.tencent.WeChat-x86_64.flatpak && sudo flatpak install ./com.tencent.WeChat-x86_64.flatpak

可以水平和垂直分割的终端:

sudo apt install -y terminator

Neovim:

sudo apt install -y neovim && \

echo '/usr/bin/nvim' | sudo update-alternatives --config editor

trash命令:

sudo apt install -y trash-cli

tree命令:

sudo apt install -y tree

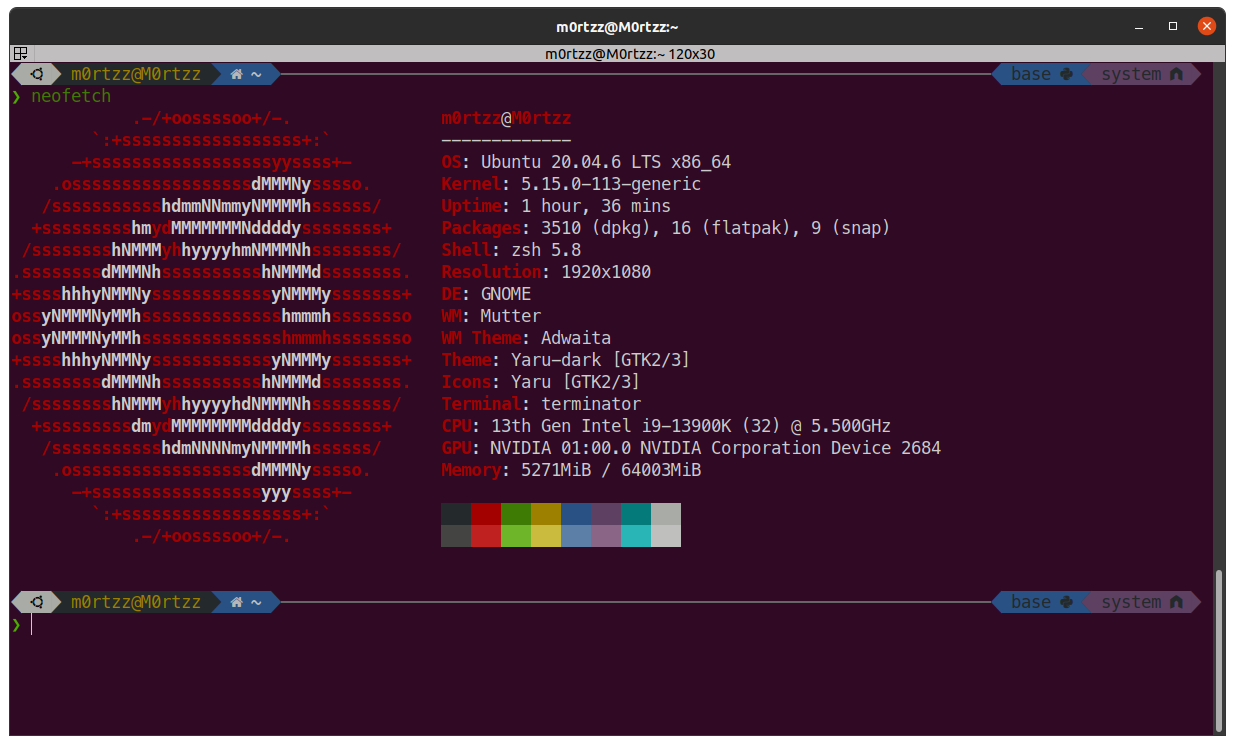

查看系统信息:

sudo apt install -y neofetch

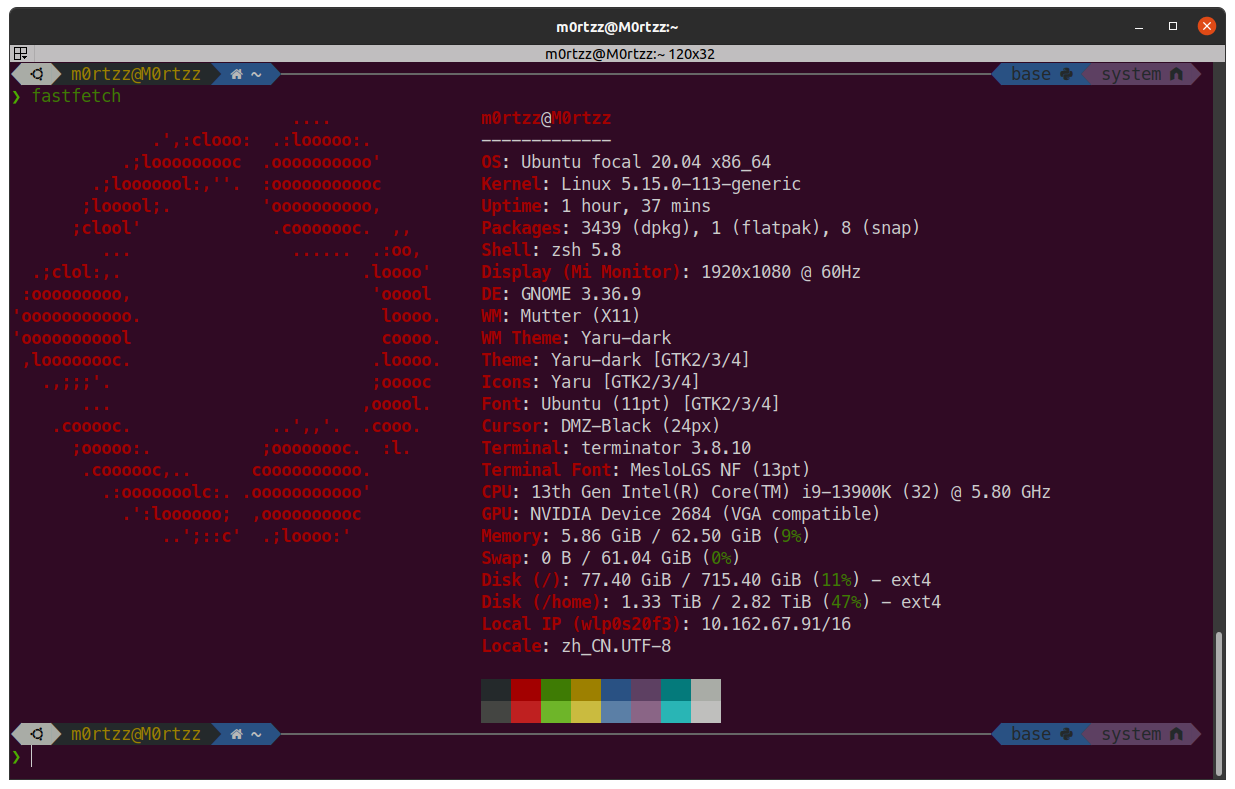

或安装用C语言写的更快的fastfetch:

wget -q --show-progress https://github.com/fastfetch-cli/fastfetch/releases/latest/download/fastfetch-linux-amd64.deb -O fastfetch-linux-amd64.deb && sudo apt install -y ./fastfetch-linux-amd64.deb

rar文件解压工具:

sudo apt install -y unrar

解决不能观看MP4文件:

sudo apt update -y

sudo apt install -y libdvdnav-dev libdvdread-dev gstreamer1.0-plugins-bad gstreamer1.0-plugins-ugly libdvd-pkg

sudo apt install -y ubuntu-restricted-extras

sudo dpkg-reconfigure libdvd-pkg

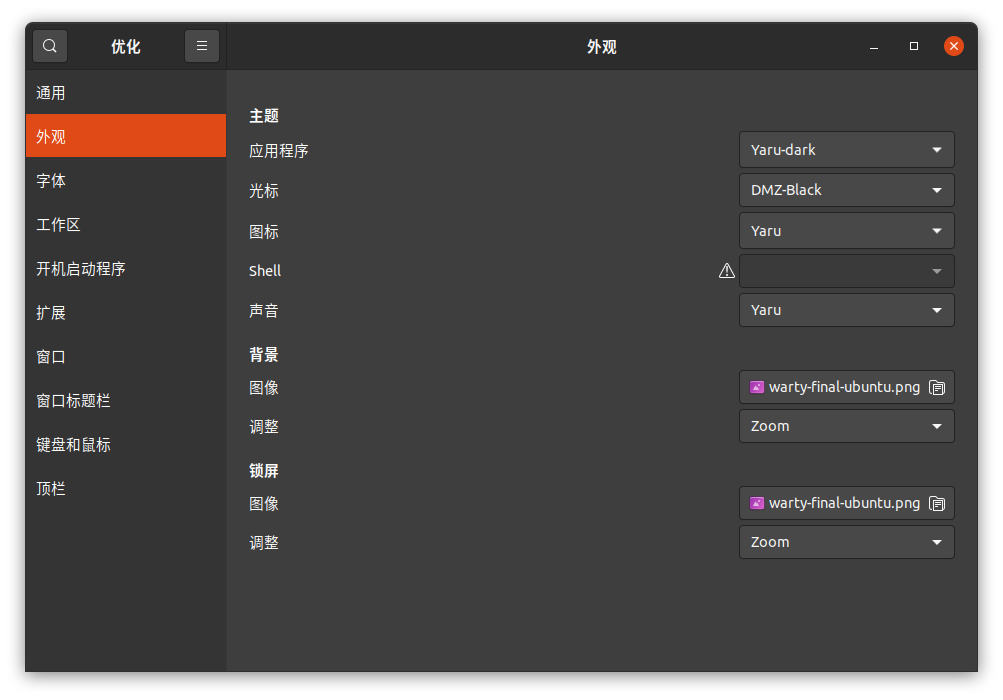

系统优化:

sudo apt update -y

sudo apt install -y gnome-tweak-tool

命令启动:

gnome-tweaks

或:

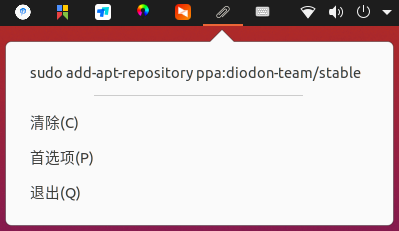

剪贴板管理工具:

sudo add-apt-repository ppa:diodon-team/stable

sudo apt update -y && sudo apt install -y diodon

然后使用刚才安装的优化工具将diodon设置为开机自启动:

这样就实现了类似于Windows下Win + V的剪贴板功能:

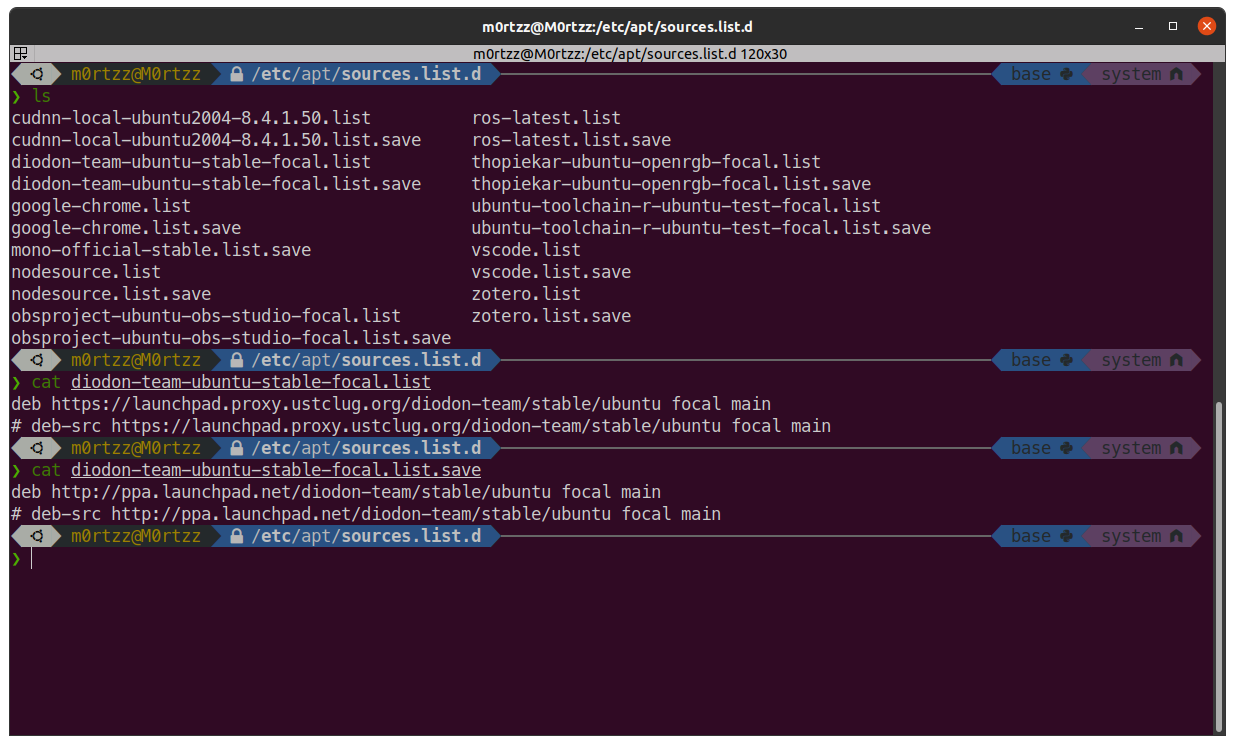

另外可使用中科大源反向代理的Canonical PPA仓库:

[!TIP]

将

/etc/apt/sources.list.d下.list文件中的http://ppa.launchpad.net替换为https://launchpad.proxy.ustclug.org即可,建议替换前先sudo cp /etc/apt/sources.list.d/your-file.list /etc/apt/sources.list.d/your-file.list.save备份一下(请自行替换文件名)

Flatpak:

Ubuntu 18.10 (Cosmic Cuttlefish) or later:

sudo apt install -y flatpak

Older Ubuntu versions:

sudo add-apt-repository ppa:flatpak/stable

sudo apt update -y

sudo apt install -y flatpak

FlatHub上交镜像源:

https://mirror.sjtu.edu.cn/docs/flathub

切换之后更新Flatpak应用将加速:

sudo flatpak update

火狐浏览器优化:

地址栏输入:

about:config

full-screen-api.warning.timeout

设置为0~

full-screen-api.transition-duration.enter

和

full-screen-api.transition-duration.leave

都设置为0 0~

browser.search.openintab

browser.urlbar.openintab

browser.tabs.loadBookmarksInTabs

都设置为true~

browser.urlbar.trimURLs

设置为false~

OPTIONAL

启动菜单的默认项

sudo gedit /etc/default/grub

改一下GRUB_DEFAULT=后边的数字,默认是0,Windows是第n个就设置为n-1

保存后关闭,打开终端,输入:

sudo update-grub

reboot

重启后问题解决~

使在桌面上右键打开终端时进入Desktop目录(Ubuntu18.04)

https://launchpad.net/ubuntu/+source/gnome-terminal/3.28.1-1ubuntu1

下载源码包:

wget -q --show-progress https://launchpad.net/ubuntu/+archive/primary/+sourcefiles/gnome-terminal/3.28.1-1ubuntu1/gnome-terminal_3.28.1.orig.tar.xz -O gnome-terminal_3.28.1.orig.tar.xz && \

wget -q --show-progress https://launchpad.net/ubuntu/+archive/primary/+sourcefiles/gnome-terminal/3.28.1-1ubuntu1/gnome-terminal_3.28.1-1ubuntu1.debian.tar.xz -O gnome-terminal_3.28.1-1ubuntu1.debian.tar.xz

解压:

tar -xf gnome-terminal_3.28.1.orig.tar.xz && \

tar -xf gnome-terminal_3.28.1-1ubuntu1.debian.tar.xz

ls debian/ gnome-terminal-3.28.1/

cp -r debian/* gnome-terminal-3.28.1/

cd gnome-terminal-3.28.1/ && git apply patches/*.patch

安装依赖:

sudo apt install -y intltool libvte-2.91-dev gsettings-desktop-schemas-dev uuid-dev libdconf-dev libpcre2-dev libgconf2-dev libxml2-utils gnome-shell libnautilus-extension-dev itstool yelp-tools pcre2-utils

打开src/下的terminal-nautilus.c,找到:

static inline gboolean

desktop_opens_home_dir (TerminalNautilus *nautilus)

{

#if 0

return _client_get_bool (gconf_client,

"/apps/nautilus-open-terminal/desktop_opens_home_dir",

NULL);

#endif

return TRUE;

}

改为

static inline gboolean

desktop_opens_home_dir (TerminalNautilus *nautilus)

{

#if 0

return _client_get_bool (gconf_client,

"/apps/nautilus-open-terminal/desktop_opens_home_dir",

NULL);

#endif

return FALSE; // here

}

src/下打开终端

cd ..

autoreconf --install

autoconf

./configure --prefix='/usr'

sudo make -j$(nproc)

sudo make check -j$(nproc)

sudo make -j$(nproc) install

sudo cp /usr/lib/nautilus/extensions-3.0/libterminal-nautilus.so /usr/lib/x86_64-linux-gnu/nautilus/extensions-3.0/

reboot

问题解决!

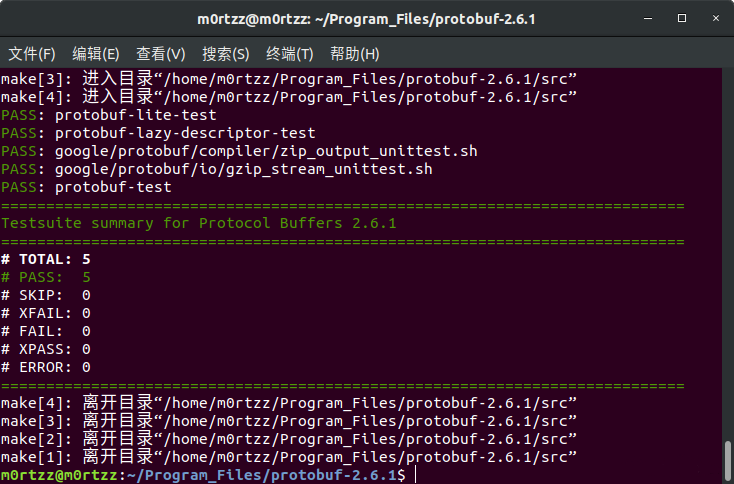

protobuf-2.6.1

sudo apt install -y libtool

https://github.com/protocolbuffers/protobuf/releases/download/v2.6.1/protobuf-2.6.1.tar.gz

或镜像:

wget -q --show-progress https://raw.gitcode.com/M0rtzz/protobuf-2.6.1/assets/199 -O protobuf-2.6.1.tar.gz

tar -zxvf protobuf-2.6.1.tar.gz && cd protobuf-2.6.1

# 可有可无(Reference: https://github.com/protocolbuffers/protobuf/blob/v2.6.1/README.md?plain=1#L11-L21)

./autogen.sh

./configure --prefix=/usr/local/protobuf

sudo make -j$(nproc)

养成有make check/test就执行的好习惯:

sudo make check -j$(nproc)

sudo make -j$(nproc) install

sudo tee -a /etc/profile > /dev/null << 'EOF'

# protobuf

export PATH=${PATH}:/usr/local/protobuf/bin

export LIBRARY_PATH=${LIBRARY_PATH}:/usr/local/protobuf/lib

export PKG_CONFIG_PATH=${PKG_CONFIG_PATH}:/usr/local/protobuf/lib/pkgconfig

EOF

source /etc/profile

sudo tee /etc/ld.so.conf.d/protobuf.conf > /dev/null << EOF

/usr/local/protobuf/lib

EOF

sudo ldconfig

OpenBLAS

软件源安装 (RECOMMENDED)

sudo apt update -y && sudo apt install -y libopenblas-dev

源码编译安装 (NOT RECOMMENDED)

sudo apt install -y gfortran gcc-arm-linux-gnueabihf libnewlib-arm-none-eabi libc6-dev-i386

git clone https://github.com/OpenMathLib/OpenBLAS.git OpenBLAS

或公益加速源:

git clone https://gitclone.com/github.com/OpenMathLib/OpenBLAS.git OpenBLAS

cd OpenBLAS/

sudo make -j$(nproc)

sudo make PREFIX=/usr/local install

查看版本:

grep OPENBLAS_VERSION /usr/local/include/openblas_config.h

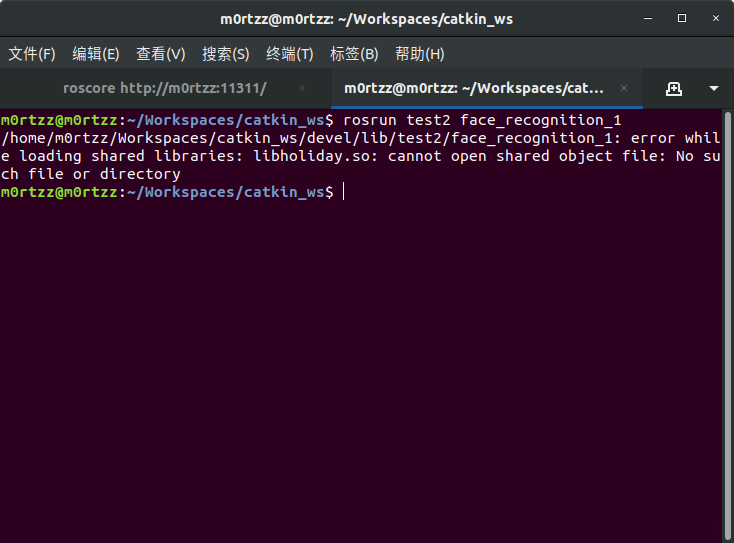

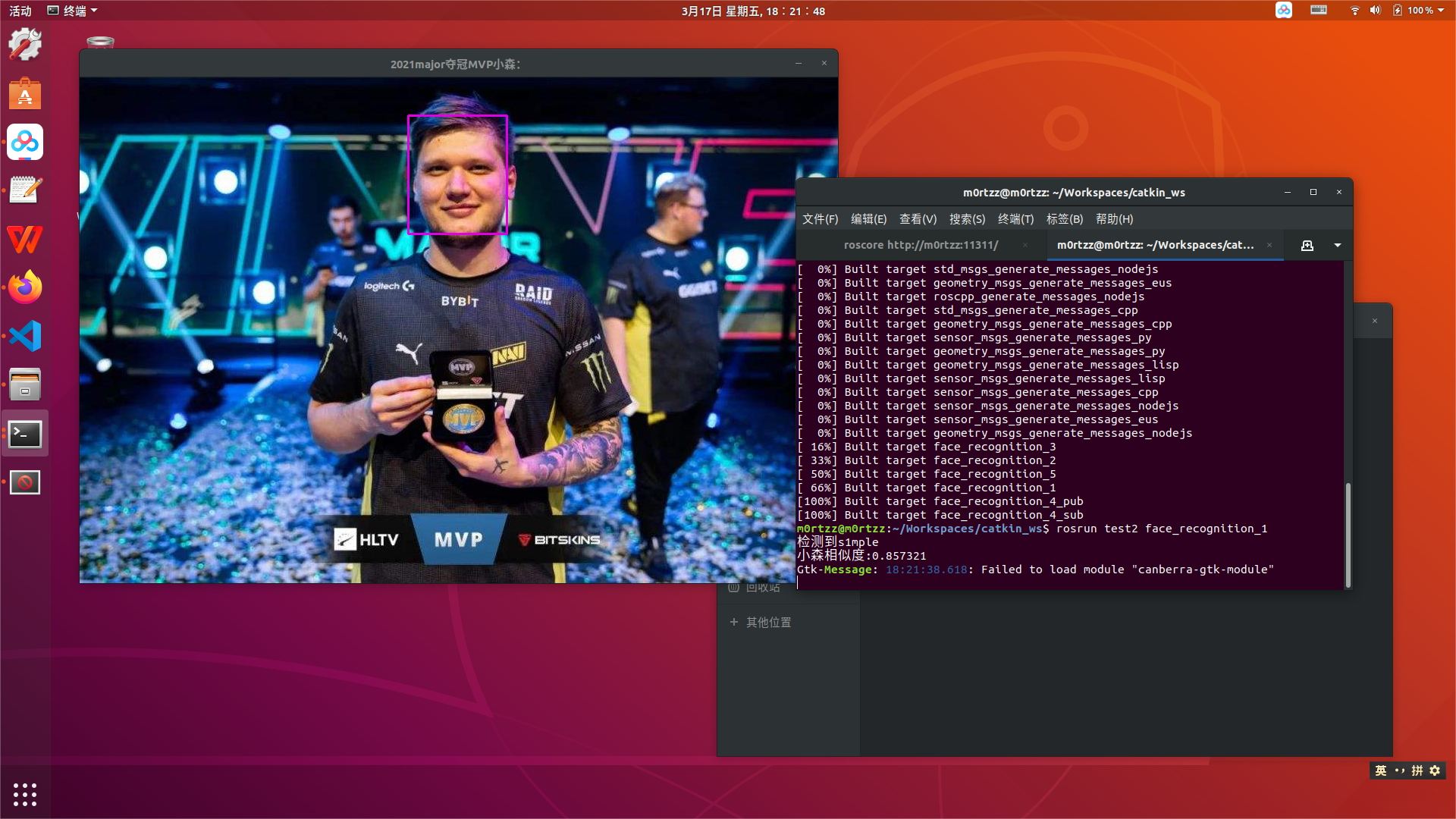

seetaface2工作空间

echo 'source /home/m0rtzz/Workspaces/catkin_ws/devel/setup.bash' >> ~/.bashrc

source ~/.bashrc

解决办法:

加入工作空间下lib文件夹的路径:

echo 'export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:/home/m0rtzz/Workspaces/catkin_ws/lib' >> ~/.bashrc

source ~/.bashrc

解决!

报错:

Gtk-Message: 15:22:30.610: Failed to load module "canberra-gtk-module"

解决方法:

sudo apt install -y 'libcanberra-gtk*'

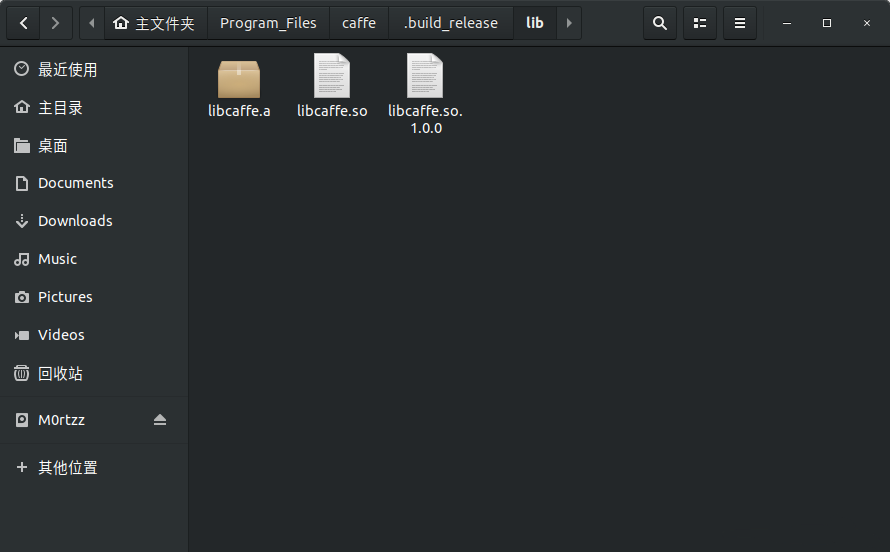

caffe (EOL)

Reference:

https://blog.csdn.net/weixin_39161727/article/details/120136500

首先安装依赖:

sudo apt install -y libprotobuf-dev libleveldb-dev libsnappy-dev libopencv-dev libhdf5-serial-dev protobuf-compiler libatlas-base-dev libgflags-dev libgoogle-glog-dev liblmdb-dev libboost-all-dev

git clone https://github.com/BVLC/caffe.git caffe

或公益加速源:

git clone https://ghp.ci/https://github.com/BVLC/caffe.git caffe

cd caffe/ && sudo cp Makefile.config.example Makefile.config

gedit Makefile.config

Makefile.config

## Refer to http://caffe.berkeleyvision.org/installation.html

# Contributions simplifying and improving our build system are welcome!

# cuDNN acceleration switch (uncomment to build with cuDNN).

USE_CUDNN := 1

# CPU-only switch (uncomment to build without GPU support).

# CPU_ONLY := 1

# uncomment to disable IO dependencies and corresponding data layers

# USE_OPENCV := 0

# USE_LEVELDB := 0

# USE_LMDB := 0

# This code is taken from https://github.com/sh1r0/caffe-android-lib

# USE_HDF5 := 0

# uncomment to allow MDB_NOLOCK when reading LMDB files (only if necessary)

# You should not set this flag if you will be reading LMDBs with any

# possibility of simultaneous read and write

# ALLOW_LMDB_NOLOCK := 1

# Uncomment if you're using OpenCV 3

OPENCV_VERSION := 3

# To customize your choice of compiler, uncomment and set the following.

# N.B. the default for Linux is g++ and the default for OSX is clang++

CUSTOM_CXX := g++

# CUDA directory contains bin/ and lib/ directories that we need.

CUDA_DIR := /usr/local/cuda

# On Ubuntu 14.04, if cuda tools are installed via

# "sudo apt-get install nvidia-cuda-toolkit" then use this instead:

# CUDA_DIR := /usr

# CUDA architecture setting: going with all of them.

# For CUDA < 6.0, comment the *_50 through *_61 lines for compatibility.

# For CUDA < 8.0, comment the *_60 and *_61 lines for compatibility.

# For CUDA >= 9.0, comment the *_20 and *_21 lines for compatibility.

CUDA_ARCH := #-gencode arch=compute_20,code=sm_20 \

#-gencode arch=compute_20,code=sm_21 \

#-gencode arch=compute_30,code=sm_30 \

-gencode arch=compute_35,code=sm_35 \

-gencode arch=compute_50,code=sm_50 \

-gencode arch=compute_52,code=sm_52 \

-gencode arch=compute_60,code=sm_60 \

-gencode arch=compute_61,code=sm_61 \

-gencode arch=compute_61,code=compute_61

# BLAS choice:

# atlas for ATLAS (default)

# mkl for MKL

# open for OpenBlas

BLAS := open

# Custom (MKL/ATLAS/OpenBLAS) include and lib directories.

# Leave commented to accept the defaults for your choice of BLAS

# (which should work)!

# BLAS_INCLUDE := /path/to/your/blas

# BLAS_LIB := /path/to/your/blas

# Homebrew puts openblas in a directory that is not on the standard search path

# BLAS_INCLUDE := $(shell brew --prefix openblas)/include

# BLAS_LIB := $(shell brew --prefix openblas)/lib

# This is required only if you will compile the matlab interface.

# MATLAB directory should contain the mex binary in /bin.

# MATLAB_DIR := /usr/local

# MATLAB_DIR := /Applications/MATLAB_R2012b.app

# NOTE: this is required only if you will compile the python interface.

# We need to be able to find Python.h and numpy/arrayobject.h.

PYTHON_INCLUDE := /usr/include/python2.7 \

/usr/lib/python2.7/dist-packages/numpy/core/include

# Anaconda Python distribution is quite popular. Include path:

# Verify anaconda location, sometimes it's in root.

# ANACONDA_HOME := $(HOME)/anaconda

# PYTHON_INCLUDE := $(ANACONDA_HOME)/include \

# $(ANACONDA_HOME)/include/python2.7 \

# $(ANACONDA_HOME)/lib/python2.7/site-packages/numpy/core/include

# Uncomment to use Python 3 (default is Python 2)

PYTHON_LIBRARIES := boost_python3 python3.6m

PYTHON_INCLUDE := /usr/include/python3.6m \

/usr/lib/python3.6/dist-packages/numpy/core/include

# We need to be able to find libpythonX.X.so or .dylib.

PYTHON_LIB := /usr/lib

# PYTHON_LIB := $(ANACONDA_HOME)/lib

# Homebrew installs numpy in a non standard path (keg only)

# PYTHON_INCLUDE += $(dir $(shell python -c 'import numpy.core; print(numpy.core.__file__)'))/include

# PYTHON_LIB += $(shell brew --prefix numpy)/lib

# Uncomment to support layers written in Python (will link against Python libs)

WITH_PYTHON_LAYER := 1

# Whatever else you find you need goes here.

INCLUDE_DIRS := $(PYTHON_INCLUDE) /usr/local/include /usr/include/hdf5/serial/

LIBRARY_DIRS := $(PYTHON_LIB) /usr/local/lib /usr/lib /usr/lib/x86_64-linux-gnu/hdf5/serial/

# If Homebrew is installed at a non standard location (for example your home directory) and you use it for general dependencies

# INCLUDE_DIRS += $(shell brew --prefix)/include

# LIBRARY_DIRS += $(shell brew --prefix)/lib

# NCCL acceleration switch (uncomment to build with NCCL)

# https://github.com/NVIDIA/nccl (last tested version: v1.2.3-1+cuda8.0)

# USE_NCCL := 1

# Uncomment to use `pkg-config` to specify OpenCV library paths.

# (Usually not necessary -- OpenCV libraries are normally installed in one of the above $LIBRARY_DIRS.)

# USE_PKG_CONFIG := 1

# N.B. both build and distribute dirs are cleared on `make clean`

BUILD_DIR := build

DISTRIBUTE_DIR := distribute

# Uncomment for debugging. Does not work on OSX due to https://github.com/BVLC/caffe/issues/171

# DEBUG := 1

# The ID of the GPU that 'make runtest' will use to run unit tests.

TEST_GPUID := 0

# enable pretty build (comment to see full commands)

Q ?= @

gedit Makefile

Makefile

PROJECT := caffe

CONFIG_FILE := Makefile.config

# Explicitly check for the config file, otherwise make -k will proceed anyway.

ifeq ($(wildcard $(CONFIG_FILE)),)

$(error $(CONFIG_FILE) not found. See $(CONFIG_FILE).example.)

endif

include $(CONFIG_FILE)

BUILD_DIR_LINK := $(BUILD_DIR)

ifeq ($(RELEASE_BUILD_DIR),)

RELEASE_BUILD_DIR := .$(BUILD_DIR)_release

endif

ifeq ($(DEBUG_BUILD_DIR),)

DEBUG_BUILD_DIR := .$(BUILD_DIR)_debug

endif

DEBUG ?= 0

ifeq ($(DEBUG), 1)

BUILD_DIR := $(DEBUG_BUILD_DIR)

OTHER_BUILD_DIR := $(RELEASE_BUILD_DIR)

else

BUILD_DIR := $(RELEASE_BUILD_DIR)

OTHER_BUILD_DIR := $(DEBUG_BUILD_DIR)

endif

# All of the directories containing code.

SRC_DIRS := $(shell find * -type d -exec bash -c "find {} -maxdepth 1 \

\( -name '*.cpp' -o -name '*.proto' \) | grep -q ." \; -print)

# The target shared library name

LIBRARY_NAME := $(PROJECT)

LIB_BUILD_DIR := $(BUILD_DIR)/lib

STATIC_NAME := $(LIB_BUILD_DIR)/lib$(LIBRARY_NAME).a

DYNAMIC_VERSION_MAJOR := 1

DYNAMIC_VERSION_MINOR := 0

DYNAMIC_VERSION_REVISION := 0

DYNAMIC_NAME_SHORT := lib$(LIBRARY_NAME).so

#DYNAMIC_SONAME_SHORT := $(DYNAMIC_NAME_SHORT).$(DYNAMIC_VERSION_MAJOR)

DYNAMIC_VERSIONED_NAME_SHORT := $(DYNAMIC_NAME_SHORT).$(DYNAMIC_VERSION_MAJOR).$(DYNAMIC_VERSION_MINOR).$(DYNAMIC_VERSION_REVISION)

DYNAMIC_NAME := $(LIB_BUILD_DIR)/$(DYNAMIC_VERSIONED_NAME_SHORT)

COMMON_FLAGS += -DCAFFE_VERSION=$(DYNAMIC_VERSION_MAJOR).$(DYNAMIC_VERSION_MINOR).$(DYNAMIC_VERSION_REVISION)

##############################

# Get all source files

##############################

# CXX_SRCS are the source files excluding the test ones.

CXX_SRCS := $(shell find src/$(PROJECT) ! -name "test_*.cpp" -name "*.cpp")

# CU_SRCS are the cuda source files

CU_SRCS := $(shell find src/$(PROJECT) ! -name "test_*.cu" -name "*.cu")

# TEST_SRCS are the test source files

TEST_MAIN_SRC := src/$(PROJECT)/test/test_caffe_main.cpp

TEST_SRCS := $(shell find src/$(PROJECT) -name "test_*.cpp")

TEST_SRCS := $(filter-out $(TEST_MAIN_SRC), $(TEST_SRCS))

TEST_CU_SRCS := $(shell find src/$(PROJECT) -name "test_*.cu")

GTEST_SRC := src/gtest/gtest-all.cpp

# TOOL_SRCS are the source files for the tool binaries

TOOL_SRCS := $(shell find tools -name "*.cpp")

# EXAMPLE_SRCS are the source files for the example binaries

EXAMPLE_SRCS := $(shell find examples -name "*.cpp")

# BUILD_INCLUDE_DIR contains any generated header files we want to include.

BUILD_INCLUDE_DIR := $(BUILD_DIR)/src

# PROTO_SRCS are the protocol buffer definitions

PROTO_SRC_DIR := src/$(PROJECT)/proto

PROTO_SRCS := $(wildcard $(PROTO_SRC_DIR)/*.proto)

# PROTO_BUILD_DIR will contain the .cc and obj files generated from

# PROTO_SRCS; PROTO_BUILD_INCLUDE_DIR will contain the .h header files

PROTO_BUILD_DIR := $(BUILD_DIR)/$(PROTO_SRC_DIR)

PROTO_BUILD_INCLUDE_DIR := $(BUILD_INCLUDE_DIR)/$(PROJECT)/proto

# NONGEN_CXX_SRCS includes all source/header files except those generated

# automatically (e.g., by proto).

NONGEN_CXX_SRCS := $(shell find \

src/$(PROJECT) \

include/$(PROJECT) \

python/$(PROJECT) \

matlab/+$(PROJECT)/private \

examples \

tools \

-name "*.cpp" -or -name "*.hpp" -or -name "*.cu" -or -name "*.cuh")

LINT_SCRIPT := scripts/cpp_lint.py

LINT_OUTPUT_DIR := $(BUILD_DIR)/.lint

LINT_EXT := lint.txt

LINT_OUTPUTS := $(addsuffix .$(LINT_EXT), $(addprefix $(LINT_OUTPUT_DIR)/, $(NONGEN_CXX_SRCS)))

EMPTY_LINT_REPORT := $(BUILD_DIR)/.$(LINT_EXT)

NONEMPTY_LINT_REPORT := $(BUILD_DIR)/$(LINT_EXT)

# PY$(PROJECT)_SRC is the python wrapper for $(PROJECT)

PY$(PROJECT)_SRC := python/$(PROJECT)/_$(PROJECT).cpp

PY$(PROJECT)_SO := python/$(PROJECT)/_$(PROJECT).so

PY$(PROJECT)_HXX := include/$(PROJECT)/layers/python_layer.hpp

# MAT$(PROJECT)_SRC is the mex entrance point of matlab package for $(PROJECT)

MAT$(PROJECT)_SRC := matlab/+$(PROJECT)/private/$(PROJECT)_.cpp

ifneq ($(MATLAB_DIR),)

MAT_SO_EXT := $(shell $(MATLAB_DIR)/bin/mexext)

endif

MAT$(PROJECT)_SO := matlab/+$(PROJECT)/private/$(PROJECT)_.$(MAT_SO_EXT)

##############################

# Derive generated files

##############################

# The generated files for protocol buffers

PROTO_GEN_HEADER_SRCS := $(addprefix $(PROTO_BUILD_DIR)/, \

$(notdir ${PROTO_SRCS:.proto=.pb.h}))

PROTO_GEN_HEADER := $(addprefix $(PROTO_BUILD_INCLUDE_DIR)/, \

$(notdir ${PROTO_SRCS:.proto=.pb.h}))

PROTO_GEN_CC := $(addprefix $(BUILD_DIR)/, ${PROTO_SRCS:.proto=.pb.cc})

PY_PROTO_BUILD_DIR := python/$(PROJECT)/proto

PY_PROTO_INIT := python/$(PROJECT)/proto/__init__.py

PROTO_GEN_PY := $(foreach file,${PROTO_SRCS:.proto=_pb2.py}, \

$(PY_PROTO_BUILD_DIR)/$(notdir $(file)))

# The objects corresponding to the source files

# These objects will be linked into the final shared library, so we

# exclude the tool, example, and test objects.

CXX_OBJS := $(addprefix $(BUILD_DIR)/, ${CXX_SRCS:.cpp=.o})

CU_OBJS := $(addprefix $(BUILD_DIR)/cuda/, ${CU_SRCS:.cu=.o})

PROTO_OBJS := ${PROTO_GEN_CC:.cc=.o}

OBJS := $(PROTO_OBJS) $(CXX_OBJS) $(CU_OBJS)

# tool, example, and test objects

TOOL_OBJS := $(addprefix $(BUILD_DIR)/, ${TOOL_SRCS:.cpp=.o})

TOOL_BUILD_DIR := $(BUILD_DIR)/tools

TEST_CXX_BUILD_DIR := $(BUILD_DIR)/src/$(PROJECT)/test

TEST_CU_BUILD_DIR := $(BUILD_DIR)/cuda/src/$(PROJECT)/test

TEST_CXX_OBJS := $(addprefix $(BUILD_DIR)/, ${TEST_SRCS:.cpp=.o})

TEST_CU_OBJS := $(addprefix $(BUILD_DIR)/cuda/, ${TEST_CU_SRCS:.cu=.o})

TEST_OBJS := $(TEST_CXX_OBJS) $(TEST_CU_OBJS)

GTEST_OBJ := $(addprefix $(BUILD_DIR)/, ${GTEST_SRC:.cpp=.o})

EXAMPLE_OBJS := $(addprefix $(BUILD_DIR)/, ${EXAMPLE_SRCS:.cpp=.o})

# Output files for automatic dependency generation

DEPS := ${CXX_OBJS:.o=.d} ${CU_OBJS:.o=.d} ${TEST_CXX_OBJS:.o=.d} \

${TEST_CU_OBJS:.o=.d} $(BUILD_DIR)/${MAT$(PROJECT)_SO:.$(MAT_SO_EXT)=.d}

# tool, example, and test bins

TOOL_BINS := ${TOOL_OBJS:.o=.bin}

EXAMPLE_BINS := ${EXAMPLE_OBJS:.o=.bin}

# symlinks to tool bins without the ".bin" extension

TOOL_BIN_LINKS := ${TOOL_BINS:.bin=}

# Put the test binaries in build/test for convenience.

TEST_BIN_DIR := $(BUILD_DIR)/test

TEST_CU_BINS := $(addsuffix .testbin,$(addprefix $(TEST_BIN_DIR)/, \

$(foreach obj,$(TEST_CU_OBJS),$(basename $(notdir $(obj))))))

TEST_CXX_BINS := $(addsuffix .testbin,$(addprefix $(TEST_BIN_DIR)/, \

$(foreach obj,$(TEST_CXX_OBJS),$(basename $(notdir $(obj))))))

TEST_BINS := $(TEST_CXX_BINS) $(TEST_CU_BINS)

# TEST_ALL_BIN is the test binary that links caffe dynamically.

TEST_ALL_BIN := $(TEST_BIN_DIR)/test_all.testbin

##############################

# Derive compiler warning dump locations

##############################

WARNS_EXT := warnings.txt

CXX_WARNS := $(addprefix $(BUILD_DIR)/, ${CXX_SRCS:.cpp=.o.$(WARNS_EXT)})

CU_WARNS := $(addprefix $(BUILD_DIR)/cuda/, ${CU_SRCS:.cu=.o.$(WARNS_EXT)})

TOOL_WARNS := $(addprefix $(BUILD_DIR)/, ${TOOL_SRCS:.cpp=.o.$(WARNS_EXT)})

EXAMPLE_WARNS := $(addprefix $(BUILD_DIR)/, ${EXAMPLE_SRCS:.cpp=.o.$(WARNS_EXT)})

TEST_WARNS := $(addprefix $(BUILD_DIR)/, ${TEST_SRCS:.cpp=.o.$(WARNS_EXT)})

TEST_CU_WARNS := $(addprefix $(BUILD_DIR)/cuda/, ${TEST_CU_SRCS:.cu=.o.$(WARNS_EXT)})

ALL_CXX_WARNS := $(CXX_WARNS) $(TOOL_WARNS) $(EXAMPLE_WARNS) $(TEST_WARNS)

ALL_CU_WARNS := $(CU_WARNS) $(TEST_CU_WARNS)

ALL_WARNS := $(ALL_CXX_WARNS) $(ALL_CU_WARNS)

EMPTY_WARN_REPORT := $(BUILD_DIR)/.$(WARNS_EXT)

NONEMPTY_WARN_REPORT := $(BUILD_DIR)/$(WARNS_EXT)

##############################

# Derive include and lib directories

##############################

CUDA_INCLUDE_DIR := $(CUDA_DIR)/include

CUDA_LIB_DIR :=

# add <cuda>/lib64 only if it exists

ifneq ("$(wildcard $(CUDA_DIR)/lib64)","")

CUDA_LIB_DIR += $(CUDA_DIR)/lib64

endif

CUDA_LIB_DIR += $(CUDA_DIR)/lib

INCLUDE_DIRS += $(BUILD_INCLUDE_DIR) ./src ./include

ifneq ($(CPU_ONLY), 1)

INCLUDE_DIRS += $(CUDA_INCLUDE_DIR)

LIBRARY_DIRS += $(CUDA_LIB_DIR)

LIBRARIES := cudart cublas curand

endif

LIBRARIES += glog gflags protobuf boost_system boost_filesystem m hdf5_serial_hl hdf5_serial

# handle IO dependencies

USE_LEVELDB ?= 1

USE_LMDB ?= 1

# This code is taken from https://github.com/sh1r0/caffe-android-lib

USE_HDF5 ?= 1

USE_OPENCV ?= 1

ifeq ($(USE_LEVELDB), 1)

LIBRARIES += leveldb snappy

endif

ifeq ($(USE_LMDB), 1)

LIBRARIES += lmdb

endif

# This code is taken from https://github.com/sh1r0/caffe-android-lib

ifeq ($(USE_HDF5), 1)

LIBRARIES += hdf5_hl hdf5

endif

ifeq ($(USE_OPENCV), 1)

LIBRARIES += opencv_core opencv_highgui opencv_imgproc

ifeq ($(OPENCV_VERSION), 3)

LIBRARIES += opencv_imgcodecs

endif

endif

PYTHON_LIBRARIES ?= boost_python python2.7

WARNINGS := -Wall -Wno-sign-compare

##############################

# Set build directories

##############################

DISTRIBUTE_DIR ?= distribute

DISTRIBUTE_SUBDIRS := $(DISTRIBUTE_DIR)/bin $(DISTRIBUTE_DIR)/lib

DIST_ALIASES := dist

ifneq ($(strip $(DISTRIBUTE_DIR)),distribute)

DIST_ALIASES += distribute

endif

ALL_BUILD_DIRS := $(sort $(BUILD_DIR) $(addprefix $(BUILD_DIR)/, $(SRC_DIRS)) \

$(addprefix $(BUILD_DIR)/cuda/, $(SRC_DIRS)) \

$(LIB_BUILD_DIR) $(TEST_BIN_DIR) $(PY_PROTO_BUILD_DIR) $(LINT_OUTPUT_DIR) \

$(DISTRIBUTE_SUBDIRS) $(PROTO_BUILD_INCLUDE_DIR))

##############################

# Set directory for Doxygen-generated documentation

##############################

DOXYGEN_CONFIG_FILE ?= ./.Doxyfile

# should be the same as OUTPUT_DIRECTORY in the .Doxyfile

DOXYGEN_OUTPUT_DIR ?= ./doxygen

DOXYGEN_COMMAND ?= doxygen

# All the files that might have Doxygen documentation.

DOXYGEN_SOURCES := $(shell find \

src/$(PROJECT) \

include/$(PROJECT) \

python/ \

matlab/ \

examples \

tools \

-name "*.cpp" -or -name "*.hpp" -or -name "*.cu" -or -name "*.cuh" -or \

-name "*.py" -or -name "*.m")

DOXYGEN_SOURCES += $(DOXYGEN_CONFIG_FILE)

##############################

# Configure build

##############################

# Determine platform

UNAME := $(shell uname -s)

ifeq ($(UNAME), Linux)

LINUX := 1

else ifeq ($(UNAME), Darwin)

OSX := 1

OSX_MAJOR_VERSION := $(shell sw_vers -productVersion | cut -f 1 -d .)

OSX_MINOR_VERSION := $(shell sw_vers -productVersion | cut -f 2 -d .)

endif

# Linux

ifeq ($(LINUX), 1)

CXX ?= /usr/bin/g++

GCCVERSION := $(shell $(CXX) -dumpversion | cut -f1,2 -d.)

# older versions of gcc are too dumb to build boost with -Wuninitalized

ifeq ($(shell echo | awk '{exit $(GCCVERSION) < 4.6;}'), 1)

WARNINGS += -Wno-uninitialized

endif

# boost::thread is reasonably called boost_thread (compare OS X)

# We will also explicitly add stdc++ to the link target.

LIBRARIES += boost_thread stdc++