Data Fitting with a Neural Network


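I have been learning about neural networks recently, and as a first step I used one to fit a training set.

```python
import os

# Suppress TensorFlow's INFO and WARNING logs; set this before importing TensorFlow
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import numpy as np
import tensorflow as tf
import matplotlib.pyplot as plt

# Placeholders and sessions are TF1-style graph APIs; under TensorFlow 2
# they need eager execution turned off
tf.compat.v1.disable_eager_execution()

# 50 evenly spaced points in [-0.5, 0.5], reshaped into a 50x1 matrix
x_data = np.linspace(-0.5, 0.5, 50)[:, np.newaxis]
# 50 random noise values (mean 0, standard deviation 0.02)
noise = np.random.normal(0, 0.02, x_data.shape)
# y = x^2 + noise
y_data = np.square(x_data) + noise

# Inputs and targets; in TF1 this was tf.placeholder(tf.float32, [None, 1])
x = tf.compat.v1.placeholder(tf.float32, [None, 1])
y = tf.compat.v1.placeholder(tf.float32, [None, 1])

# Hidden layer: 1 input -> 10 units, sigmoid activation
w_L1 = tf.Variable(tf.random.normal([1, 10]))
b_L1 = tf.Variable(tf.zeros([1, 10]))
wx_plus_b_L1 = tf.matmul(x, w_L1) + b_L1
L1 = tf.nn.sigmoid(wx_plus_b_L1)

# Output layer: 10 units -> 1 output, sigmoid activation
w_L2 = tf.Variable(tf.random.normal([10, 1]))
b_L2 = tf.Variable(tf.zeros([1, 1]))
wx_plus_b_L2 = tf.matmul(L1, w_L2) + b_L2
prediction = tf.nn.sigmoid(wx_plus_b_L2)

# Mean squared error, minimized with a step size (learning rate) of 0.2
loss = tf.reduce_mean(tf.square(y - prediction))
train_step = tf.compat.v1.train.ProximalGradientDescentOptimizer(0.2).minimize(loss)

with tf.compat.v1.Session() as sess:
    sess.run(tf.compat.v1.global_variables_initializer())
    for i in range(1000):
        sess.run(train_step, feed_dict={x: x_data, y: y_data})
        # Optional progress display (needs `import time`):
        # percent = 1.0 * i / 1000 * 100
        # print('complete percent:%10.8s%s' % (str(percent), '%'), end='\r')
        # time.sleep(0.001)
        if i % 100 == 0:
            # Report the training loss every 100 iterations
            print(i, sess.run(loss, feed_dict={x: x_data, y: y_data}))

    # Evaluate the trained network on the training inputs
    prediction_value = sess.run(prediction, feed_dict={x: x_data})

# Scatter plot of the noisy data with the fitted curve drawn in red
plt.figure()
plt.scatter(x_data, y_data)
plt.plot(x_data, prediction_value, 'r-', lw=5)
plt.show()
```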
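In equation form, the script above appears to compute the following. This is a sketch in my own notation: $W_1, b_1, W_2, b_2$ stand for `w_L1`, `b_L1`, `w_L2`, `b_L2`, $\sigma$ is the sigmoid, $N = 50$ is the number of samples, and $\eta = 0.2$ is the step size. The update rule assumes the optimizer is used without regularization, in which case it should behave like plain gradient descent.

```latex
\begin{aligned}
h &= \sigma(x W_1 + b_1), & W_1 &\in \mathbb{R}^{1 \times 10},\; b_1 \in \mathbb{R}^{1 \times 10} \\
\hat{y} &= \sigma(h W_2 + b_2), & W_2 &\in \mathbb{R}^{10 \times 1},\; b_2 \in \mathbb{R}^{1 \times 1} \\
\mathcal{L} &= \frac{1}{N} \sum_{i=1}^{N} \left( y_i - \hat{y}_i \right)^2 \\
\theta &\leftarrow \theta - \eta \, \frac{\partial \mathcal{L}}{\partial \theta}, & \theta &\in \{ W_1, b_1, W_2, b_2 \}
\end{aligned}
```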

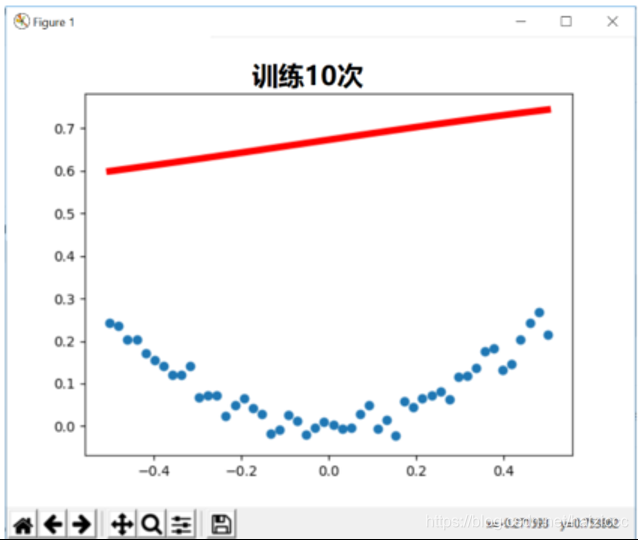

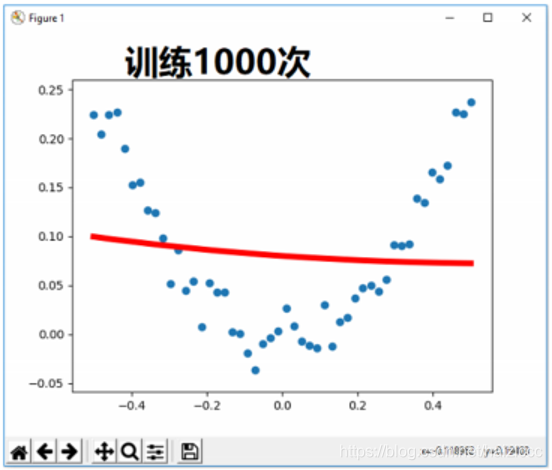

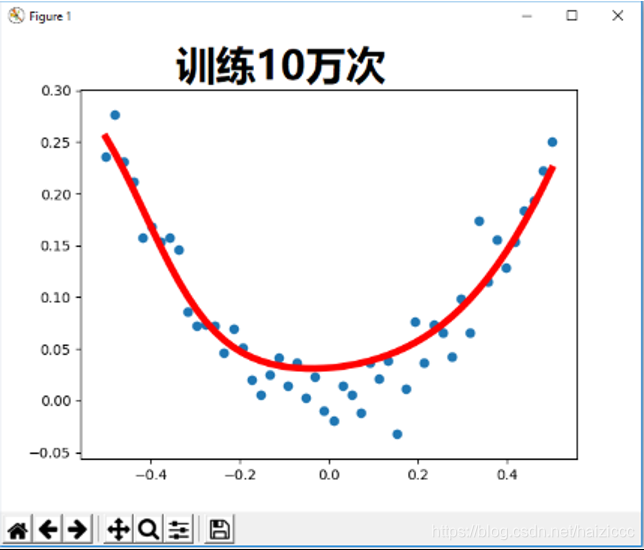

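Changing the step size (learning rate) and the number of training iterations produces different fits. With 100,000 training iterations the loss drops to about 0.0004, and the fitted curve is already close to the ideal one. The step size can also be varied on its own to compare training results across different step sizes.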
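The comparison described above can also be tried without TensorFlow. The following is a minimal NumPy sketch, assuming the same 1-10-1 sigmoid network and mean squared error as the script earlier in this post, with the gradients written out by hand. The names `sigmoid`, `train`, and `results`, the fixed seed, and the particular step sizes and iteration counts are my own illustrative choices, not part of the original experiment, so the numbers it prints will not match the figures above.

```python
import numpy as np


def sigmoid(z):
    """Logistic function, applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))


def train(learning_rate, steps, seed=0):
    """Fit y = x^2 + noise with a 1-10-1 sigmoid network; return the final MSE.

    Hypothetical helper: plain full-batch gradient descent with manual backprop.
    """
    rng = np.random.default_rng(seed)
    x = np.linspace(-0.5, 0.5, 50)[:, np.newaxis]      # 50x1 inputs
    y = np.square(x) + rng.normal(0, 0.02, x.shape)    # noisy targets

    w1 = rng.normal(size=(1, 10))
    b1 = np.zeros((1, 10))
    w2 = rng.normal(size=(10, 1))
    b2 = np.zeros((1, 1))

    n = x.shape[0]
    for _ in range(steps):
        # Forward pass
        h = sigmoid(x @ w1 + b1)                # hidden activations, 50x10
        p = sigmoid(h @ w2 + b2)                # predictions, 50x1

        # Backward pass for loss = mean((y - p)^2)
        d_z2 = (2.0 * (p - y) / n) * p * (1.0 - p)   # gradient at output pre-activation
        d_w2 = h.T @ d_z2
        d_b2 = d_z2.sum(axis=0, keepdims=True)
        d_z1 = (d_z2 @ w2.T) * h * (1.0 - h)         # gradient at hidden pre-activation
        d_w1 = x.T @ d_z1
        d_b1 = d_z1.sum(axis=0, keepdims=True)

        # Gradient descent update
        w1 -= learning_rate * d_w1
        b1 -= learning_rate * d_b1
        w2 -= learning_rate * d_w2
        b2 -= learning_rate * d_b2

    p = sigmoid(sigmoid(x @ w1 + b1) @ w2 + b2)
    return float(np.mean(np.square(y - p)))


# Compare a few step sizes and iteration counts (values chosen for illustration)
results = {}
for lr in (0.05, 0.2, 0.5):
    for steps in (100, 1000):
        results[(lr, steps)] = train(lr, steps)
        print(f"step size={lr:<5} iterations={steps:<5} loss={results[(lr, steps)]:.6f}")
```

Fixing the seed keeps the data and the initial weights identical across runs, so any difference in the printed loss comes only from the step size and the iteration count.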
