Hands-on AI Part 2, Section 3: Mainstream Convolutional Neural Networks (II)

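Hello everyone, and welcome to the Hands-on AI column! In the previous article, "Hands-on AI Part 2, Section 2: Mainstream Convolutional Neural Networks (I)", we studied several mainstream convolutional neural networks: AlexNet, VGGNet, GoogLeNet and ResNet. By now you should have a deeper understanding of how convolutional neural networks work. In this article, we continue with a few other equally representative CNNs and see what makes each of them special!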


As usual, this one is packed with practical content, so remember to like and follow!

I. DenseNet: A Densely Connected Network Architecture

(1) Principle

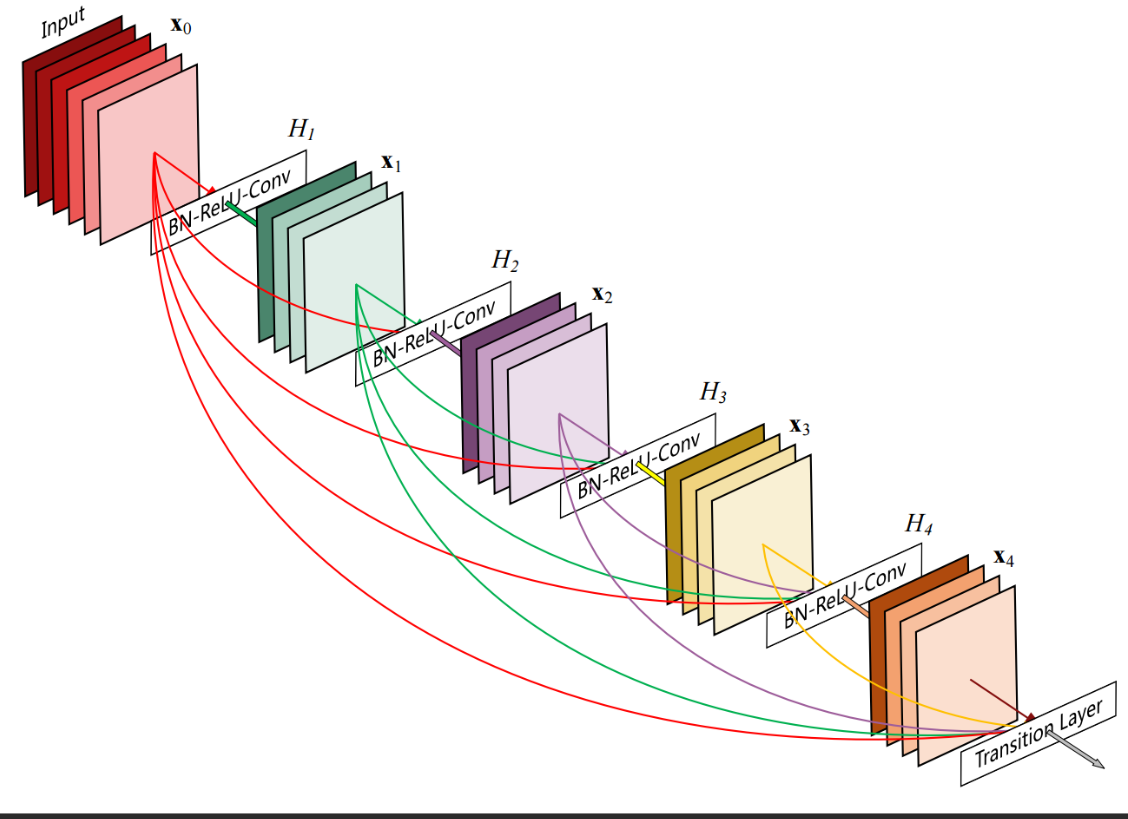

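DenseNet (Densely Connected Convolutional Networks), proposed in 2017, is a convolutional neural network architecture built around one core design idea: dense connectivity. In a traditional CNN, layers are connected in sequence, so each layer's input comes only from the output of the layer directly before it. DenseNet breaks with this convention by connecting every layer directly to all preceding layers in the same dense block. Each layer can therefore access the feature maps produced by all earlier layers, which greatly improves feature reuse, as shown in the figure above.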
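For comparison, the three connection patterns can be written compactly. The notation follows the DenseNet paper: $x_l$ is the output of layer $l$, $x_0$ is the input, $H_l(\cdot)$ is the layer's nonlinear transformation (for example BN, ReLU and convolution), and $[\cdot]$ denotes concatenation along the channel dimension:

$$
\begin{aligned}
\text{Plain CNN:}\quad & x_l = H_l(x_{l-1}) \\
\text{ResNet:}\quad & x_l = H_l(x_{l-1}) + x_{l-1} \\
\text{DenseNet:}\quad & x_l = H_l\big([x_0, x_1, \ldots, x_{l-1}]\big)
\end{aligned}
$$

ResNet's addition keeps the channel count fixed, whereas DenseNet's concatenation makes the channel count grow with every layer. This is why DenseNet keeps each layer's output narrow and periodically shrinks the channel count with transition layers.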

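Concretely, DenseNet is made up of several dense blocks, and the layers inside each dense block are densely connected. Suppose a dense block has L layers. The input of the l-th layer is then the channel-wise concatenation of the block input and the outputs of all l - 1 preceding layers. Because each layer adds only k new feature maps (k is the growth rate, `growth_rate` in the code below), the l-th layer receives k0 + k(l - 1) input channels, where k0 is the number of channels entering the block. This connection pattern gives the network a much richer flow of information, and every layer gets to learn features by "standing on the shoulders of giants".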
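The channel bookkeeping described above can be checked with a small NumPy sketch. This is not DenseNet itself. As a simplifying assumption, each layer H_l is replaced by a random 1×1 channel-mixing matrix followed by ReLU, and the function name `toy_dense_block` is hypothetical. What the sketch does reproduce faithfully is the connectivity: every layer reads the concatenation of all earlier feature maps and contributes `growth_rate` new channels.

```python
import numpy as np


def toy_dense_block(x0, num_layers, growth_rate, seed=0):
    """Toy dense block (hypothetical helper, for illustration only).

    x0 has shape (C0, H, W). Each layer is a stand-in for H_l: a random
    1x1 channel-mixing matrix plus ReLU that outputs growth_rate channels.
    """
    rng = np.random.default_rng(seed)
    features = [x0]  # x_0, x_1, ..., all kept so later layers can reuse them
    for l in range(1, num_layers + 1):
        # Input of layer l: [x_0, x_1, ..., x_{l-1}] concatenated along channels
        inp = np.concatenate(features, axis=0)
        # Random 1x1 "conv" weights: (out_channels, in_channels)
        w = rng.standard_normal((growth_rate, inp.shape[0]))
        # Apply the 1x1 conv at every pixel, then ReLU
        out = np.maximum(np.einsum('oc,chw->ohw', w, inp), 0.0)
        print(f"layer {l}: input channels = {inp.shape[0]}, output channels = {out.shape[0]}")
        features.append(out)
    # Block output: the input plus all new feature maps, stacked along channels
    return np.concatenate(features, axis=0)


x0 = np.ones((64, 8, 8))
y = toy_dense_block(x0, num_layers=6, growth_rate=32)
print(y.shape)  # (256, 8, 8)
```

With 64 input channels and a growth rate of 32, the printed input widths are 64, 96, ..., 224 (that is, 64 + 32(l - 1)), and the block outputs 64 + 6 × 32 = 256 channels.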

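In addition, DenseNet inserts a transition layer between adjacent dense blocks. A transition layer mainly consists of a 1×1 convolution followed by a 2×2 average pooling layer, with batch normalization and ReLU applied first. The 1×1 convolution reduces the number of channels (the code below halves it), and the pooling halves the height and width of the feature maps. Together they keep the computational cost down and the model compact, which also helps reduce the risk of overfitting.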
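To see how dense blocks and transition layers interact, the sketch below traces the channel count and feature-map size through a DenseNet configuration. It is plain Python arithmetic, not a model. It assumes a 224×224 input, the 7×7/stride-2 convolution plus 3×3/stride-2 max-pooling stem used in the code later in this section, and a compression factor of 0.5 at every transition (the `in_channels // 2` choice in that code). The helper name `densenet_shapes` is hypothetical.

```python
def densenet_shapes(block_config, growth_rate=32, num_init_features=64,
                    input_size=224, compression=0.5):
    """Trace (stage name, channels, spatial size) through a DenseNet-style network.

    Assumptions: the stem divides the spatial size by 4 (exact for 224x224
    input), each transition halves the spatial size with 2x2 average pooling,
    and multiplies the channel count by `compression` with a 1x1 conv.
    """
    channels = num_init_features
    size = input_size // 4  # after the 7x7 stride-2 conv and 3x3 stride-2 max pool
    trace = []
    for i, num_layers in enumerate(block_config):
        channels += num_layers * growth_rate  # each layer appends growth_rate channels
        trace.append((f"denseblock{i + 1}", channels, size))
        if i != len(block_config) - 1:  # no transition after the last block
            channels = int(channels * compression)  # 1x1 conv compresses channels
            size //= 2  # 2x2 average pooling, stride 2
            trace.append((f"transition{i + 1}", channels, size))
    return trace


# DenseNet-121 configuration
for name, c, s in densenet_shapes((6, 12, 24, 16)):
    print(f"{name:12s} channels={c:5d}  size={s}x{s}")
```

For DenseNet-121 the trace ends at 1024 channels with a 7×7 feature map, which is exactly the `in_channels` that the final `nn.Linear` classifier receives in the code below. Without the halving in each transition layer, the channel count would keep accumulating from block to block.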

(2) Code Example (using PyTorch)

```python
import torch
import torch.nn as nn


class Bottleneck(nn.Module):
    """DenseNet-BC layer: BN-ReLU-Conv(1x1) followed by BN-ReLU-Conv(3x3)."""

    def __init__(self, in_channels, growth_rate):
        super(Bottleneck, self).__init__()
        # The 1x1 "bottleneck" conv maps the input to 4 * growth_rate channels
        inner_channels = 4 * growth_rate
        self.bn1 = nn.BatchNorm2d(in_channels)
        self.relu1 = nn.ReLU(inplace=True)
        self.conv1 = nn.Conv2d(in_channels, inner_channels, kernel_size=1, bias=False)
        self.bn2 = nn.BatchNorm2d(inner_channels)
        self.relu2 = nn.ReLU(inplace=True)
        # The 3x3 conv produces growth_rate new feature maps
        self.conv2 = nn.Conv2d(inner_channels, growth_rate, kernel_size=3, padding=1, bias=False)

    def forward(self, x):
        out = self.bn1(x)
        out = self.relu1(out)
        out = self.conv1(out)
        out = self.bn2(out)
        out = self.relu2(out)
        out = self.conv2(out)
        # Dense connection: append the new feature maps to the input along the channel dim
        out = torch.cat([x, out], dim=1)
        return out


class DenseBlock(nn.Module):
    def __init__(self, num_layers, in_channels, growth_rate):
        super(DenseBlock, self).__init__()
        layers = []
        for i in range(num_layers):
            # Layer i sees the block input plus the i * growth_rate channels added before it
            layers.append(Bottleneck(in_channels + i * growth_rate, growth_rate))
        self.layers = nn.Sequential(*layers)

    def forward(self, x):
        return self.layers(x)


class TransitionLayer(nn.Module):
    """BN-ReLU-Conv(1x1) to shrink the channels, then 2x2 average pooling to halve H and W."""

    def __init__(self, in_channels, out_channels):
        super(TransitionLayer, self).__init__()
        self.bn = nn.BatchNorm2d(in_channels)
        self.relu = nn.ReLU(inplace=True)
        self.conv = nn.Conv2d(in_channels, out_channels, kernel_size=1, bias=False)
        self.pool = nn.AvgPool2d(kernel_size=2, stride=2)

    def forward(self, x):
        out = self.bn(x)
        out = self.relu(out)
        out = self.conv(out)
        out = self.pool(out)
        return out


class DenseNet(nn.Module):
    def __init__(self, num_init_features, growth_rate, block_config, num_classes=1000):
        super(DenseNet, self).__init__()
        # Stem: 7x7 conv (stride 2) followed by 3x3 max pooling (stride 2)
        self.features = nn.Sequential(
            nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False),
            nn.BatchNorm2d(num_init_features),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )
        in_channels = num_init_features
        for i, num_layers in enumerate(block_config):
            self.features.add_module(f'denseblock{i + 1}', DenseBlock(num_layers, in_channels, growth_rate))
            in_channels += num_layers * growth_rate
            # A transition layer (compression 0.5) follows every dense block except the last
            if i != len(block_config) - 1:
                out_channels = in_channels // 2
                self.features.add_module(f'transition{i + 1}', TransitionLayer(in_channels, out_channels))
                in_channels = out_channels
        # Final BN-ReLU, then global average pooling down to a 1x1 map
        self.features.add_module('bn', nn.BatchNorm2d(in_channels))
        self.features.add_module('relu', nn.ReLU(inplace=True))
        self.features.add_module('avgpool', nn.AdaptiveAvgPool2d((1, 1)))
        self.classifier = nn.Linear(in_channels, num_classes)

    def forward(self, x):
        out = self.features(x)
        out = torch.flatten(out, 1)
        out = self.classifier(out)
        return out


def densenet121():
    return DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 24, 16))


def densenet169():
    return DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 32, 32))


def densenet201():
    return DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 48, 32))


def densenet264():
    # The paper's DenseNet-264 uses growth rate k=32, the same as DenseNet-121/169/201
    return DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 64, 48))


if __name__ == '__main__':
    # Quick shape check on a dummy 224x224 RGB image
    model = densenet121().eval()
    with torch.no_grad():
        logits = model(torch.randn(1, 3, 224, 224))
    print(logits.shape)  # torch.Size([1, 1000])
```
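The numbers in the model names can be related to `block_config` with a little arithmetic. The sketch below assumes a common counting convention in which only convolution and fully connected layers are counted (BatchNorm and pooling are not), and `count_weight_layers` is a hypothetical helper name.

```python
def count_weight_layers(block_config):
    """Count conv + fully connected layers in a DenseNet-BC configuration.

    Counting convention (assumption): 1 stem conv, 2 convs per bottleneck
    layer (1x1 + 3x3), 1 conv per transition layer, and 1 final linear layer.
    """
    stem = 1
    bottleneck_convs = 2 * sum(block_config)
    transition_convs = len(block_config) - 1  # one transition between each pair of blocks
    classifier = 1
    return stem + bottleneck_convs + transition_convs + classifier


for name, cfg in [("densenet121", (6, 12, 24, 16)),
                  ("densenet169", (6, 12, 32, 32)),
                  ("densenet201", (6, 12, 48, 32))]:
    print(name, count_weight_layers(cfg))
```

Under this convention the three configurations give 121, 169 and 201. Applied to DenseNet-264's (6, 12, 64, 48), the same count gives 265, so treat the convention as a rule of thumb rather than an exact definition of the names.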

(3) Advantages and Disadvantages
