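hCaptcha Adversarial Machine Learning: Deep-Learning-Driven Intelligent CAPTCHA Challenges

An in-depth look at adversarial machine learning for hCaptcha-style CAPTCHAs and at building an intelligent challenge system on top of deep learning. The article uses Python to build a complete framework for generating adversarial examples and defending against them, introduces the core concepts systematically, and gives developers practical guidance grounded in the underlying principles.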

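Technical Overview

hCaptcha is a prominent modern CAPTCHA service. Its challenge system is driven by machine learning and gives sites a strong layer of protection against automated abuse. Its adversarial machine learning architecture not only distinguishes human users from automated programs but also keeps improving its protection through continual learning and model optimization.

Applying adversarial machine learning to CAPTCHAs is a frontier direction in AI security research. By studying hCaptcha's adversarial mechanisms in depth, researchers can better track where machine learning security is heading and gain a useful reference for building more robust AI systems. The technique drives the evolution of CAPTCHAs and also lays groundwork for broader AI security applications.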
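
For reference, the two gradient-based attacks implemented in the code below, FGSM and PGD, are commonly written as follows. The notation is an assumption introduced here for illustration: $x$ is an input with pixel values in $[0, 1]$, $y$ its label, $\mathcal{L}$ the classification loss of a model with parameters $\theta$, $\epsilon$ the perturbation budget, and $\alpha$ the PGD step size.

```latex
% FGSM: a single step along the sign of the input gradient
x_{\mathrm{adv}} = \operatorname{clip}_{[0,1]}\bigl(x + \epsilon \,\operatorname{sign}(\nabla_x \mathcal{L}(\theta, x, y))\bigr)

% PGD: start from a random point in the \epsilon-ball, then iterate small
% steps and project back into the ball around the clean input x
x^{(0)} = \operatorname{clip}_{[0,1]}(x + u), \qquad u \sim \mathcal{U}(-\epsilon, \epsilon)

x^{(t+1)} = \operatorname{clip}_{[0,1]}\Bigl(x + \operatorname{clip}_{[-\epsilon,\epsilon]}\bigl(x^{(t)} + \alpha \,\operatorname{sign}(\nabla_x \mathcal{L}(\theta, x^{(t)}, y)) - x\bigr)\Bigr)
```

Both updates increase the loss on the true label, which corresponds to the untargeted setting used by `fgsm_attack` and `pgd_attack`.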

Core Principles and Code Implementation

Adversarial Example Generation and Defense System

import asyncio
import json
import logging
import time
from dataclasses import dataclass
from enum import Enum
from pathlib import Path
from typing import Any, Dict, List, Optional, Tuple

import cv2
import matplotlib.pyplot as plt
import numpy as np
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
import torchvision.transforms as transforms
from PIL import Image, ImageDraw, ImageFont
from torch.utils.data import DataLoader, Dataset
from torchvision.models import efficientnet_b0, resnet50

class AttackType(Enum):
    FGSM = "fgsm"
    PGD = "pgd"
    CWNL2 = "cw_l2"
    DEEPFOOL = "deepfool"
    PATCH = "patch"


@dataclass
class AdversarialConfig:
    """Adversarial attack configuration."""

    attack_type: AttackType
    epsilon: float = 0.03  # L-infinity perturbation budget
    alpha: float = 0.01  # PGD step size
    num_iter: int = 40  # number of PGD iterations
    targeted: bool = False
    target_class: Optional[int] = None
    confidence: float = 0.0

class HCaptchaChallengeGenerator:
    """hCaptcha-style challenge generator."""

    def __init__(self):
        self.device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
        self.challenge_types = {
            'object_detection': ['car', 'bus', 'truck', 'bicycle', 'motorcycle'],
            'animal_recognition': ['cat', 'dog', 'bird', 'fish', 'horse'],
            'text_verification': ['house_number', 'street_sign', 'license_plate'],
            'activity_recognition': ['person_walking', 'person_running', 'person_sitting']
        }

        # Image preprocessing (resize, tensor conversion, ImageNet normalization)
        self.transform = transforms.Compose([
            transforms.Resize((224, 224)),
            transforms.ToTensor(),
            transforms.Normalize(mean=[0.485, 0.456, 0.406],
                                 std=[0.229, 0.224, 0.225])
        ])

    def generate_challenge_set(self, challenge_type: str, num_images: int = 9) -> Dict[str, Any]:
        """Generate a grid of images with binary labels for one challenge."""
        try:
            if challenge_type not in self.challenge_types:
                raise ValueError(f"Unsupported challenge type: {challenge_type}")

            target_objects = self.challenge_types[challenge_type]
            target_object = np.random.choice(target_objects)

            # Build the image grid
            grid_images = []
            grid_labels = []

            # Ensure at least one positive sample (upper bound is exclusive)
            positive_count = np.random.randint(1, max(2, num_images // 3))

            for i in range(num_images):
                if i < positive_count:
                    # Positive sample: contains the target object
                    image = self._generate_positive_sample(target_object)
                    label = 1
                else:
                    # Negative sample: contains a different object of the same category
                    negative_object = np.random.choice(
                        [obj for obj in target_objects if obj != target_object]
                    )
                    image = self._generate_negative_sample(negative_object)
                    label = 0

                grid_images.append(image)
                grid_labels.append(label)

            # Shuffle images and labels with the same permutation
            indices = np.random.permutation(num_images)
            grid_images = [grid_images[i] for i in indices]
            grid_labels = [grid_labels[i] for i in indices]

            return {
                'challenge_type': challenge_type,
                'target_object': target_object,
                'grid_images': grid_images,
                'grid_labels': grid_labels,
                'positive_indices': [i for i, label in enumerate(grid_labels) if label == 1],
                'challenge_id': self._generate_challenge_id()
            }

        except Exception as e:
            logging.error(f"Failed to generate challenge set: {e}")
            return {}

    def _generate_positive_sample(self, target_object: str) -> np.ndarray:
        """Generate a positive sample."""
        # Simplified image generation (in practice, use a GAN or real data).
        # Only 'car', 'bicycle', and 'cat' get a shape; other targets stay as noise.
        image = np.random.randint(50, 200, (224, 224, 3), dtype=np.uint8)

        # Add features that depend on the target object
        if 'car' in target_object:
            # Car body and window
            cv2.rectangle(image, (50, 100), (174, 180), (255, 100, 100), -1)
            cv2.rectangle(image, (60, 110), (164, 140), (200, 200, 200), -1)
            # Wheels
            cv2.circle(image, (70, 180), 15, (0, 0, 0), -1)
            cv2.circle(image, (154, 180), 15, (0, 0, 0), -1)

        elif 'bicycle' in target_object:
            # Two wheels and a simple frame
            cv2.circle(image, (80, 150), 25, (100, 100, 255), 3)
            cv2.circle(image, (144, 150), 25, (100, 100, 255), 3)
            cv2.line(image, (80, 150), (144, 150), (0, 0, 0), 2)
            cv2.line(image, (112, 150), (112, 100), (0, 0, 0), 2)

        elif 'cat' in target_object:
            # Cat head
            cv2.ellipse(image, (112, 140), (40, 30), 0, 0, 360, (150, 75, 0), -1)
            # Ears
            points = np.array([[92, 120], [102, 100], [112, 120]], np.int32)
            cv2.fillPoly(image, [points], (150, 75, 0))
            points = np.array([[112, 120], [122, 100], [132, 120]], np.int32)
            cv2.fillPoly(image, [points], (150, 75, 0))
            # Eyes
            cv2.circle(image, (102, 135), 3, (0, 0, 0), -1)
            cv2.circle(image, (122, 135), 3, (0, 0, 0), -1)

        return image

    def _generate_negative_sample(self, distractor_object: str) -> np.ndarray:
        """Generate a negative sample."""
        # Image that does not contain the target object
        image = np.random.randint(0, 255, (224, 224, 3), dtype=np.uint8)

        # Add a distractor element
        if 'dog' in distractor_object:
            # Dog head (shaped differently from the cat)
            cv2.ellipse(image, (112, 140), (50, 35), 0, 0, 360, (100, 50, 25), -1)
            # Long ears
            cv2.ellipse(image, (90, 130), (15, 25), 30, 0, 360, (100, 50, 25), -1)
            cv2.ellipse(image, (134, 130), (15, 25), -30, 0, 360, (100, 50, 25), -1)

        return image

    def _generate_challenge_id(self) -> str:
        """Generate a challenge ID from the current time and random bytes."""
        import hashlib

        timestamp = str(time.time())
        random_bytes = np.random.bytes(8)
        hash_input = timestamp.encode() + random_bytes
        challenge_id = hashlib.sha256(hash_input).hexdigest()[:16]
        return challenge_id
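
The labeling logic in `generate_challenge_set` can be looked at separately from the image drawing. The following is a minimal sketch using only the standard library; `sample_grid_labels` and its `seed` parameter are hypothetical names introduced here, and the upper bound on positives is an approximation of the original rule, not a copy of it.

```python
import random
from typing import List, Tuple


def sample_grid_labels(num_images: int = 9, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Return (labels, positive_indices) for one challenge grid (hypothetical helper)."""
    rng = random.Random(seed)
    # At least one positive tile, and at most about a third of the grid
    max_positive = max(1, num_images // 3)
    positive_count = rng.randint(1, max_positive)  # both bounds inclusive
    labels = [1] * positive_count + [0] * (num_images - positive_count)
    rng.shuffle(labels)  # place the positives at random positions
    positive_indices = [i for i, label in enumerate(labels) if label == 1]
    return labels, positive_indices


labels, positive_indices = sample_grid_labels(num_images=9, seed=42)
print(labels, positive_indices)
```

Deriving `positive_indices` after the shuffle, as the class above also does, keeps the answer key consistent with the final tile order.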

class AdversarialAttackGenerator:
    """Adversarial attack generator.

    All attacks assume pixel values in [0, 1]. If the classifier expects
    normalized inputs, the normalization has to happen inside the model.
    """

    def __init__(self, model: nn.Module):
        self.model = model
        self.device = next(model.parameters()).device

    def fgsm_attack(self, images: torch.Tensor, labels: torch.Tensor,
                    epsilon: float = 0.03) -> torch.Tensor:
        """Fast Gradient Sign Method (FGSM) attack."""
        # Work on a detached copy so the caller's tensor is not modified
        images = images.clone().detach().to(self.device)
        labels = labels.to(self.device)
        images.requires_grad = True

        # Forward pass
        outputs = self.model(images)
        loss = F.cross_entropy(outputs, labels)

        # Gradient of the loss with respect to the input only
        data_grad = torch.autograd.grad(loss, images)[0]

        # Step along the sign of the gradient
        perturbed_images = images + epsilon * data_grad.sign()

        # Keep pixel values in the valid range
        perturbed_images = torch.clamp(perturbed_images, 0, 1)
        return perturbed_images.detach()

    def pgd_attack(self, images: torch.Tensor, labels: torch.Tensor,
                   epsilon: float = 0.03, alpha: float = 0.01,
                   num_iter: int = 40) -> torch.Tensor:
        """Projected Gradient Descent (PGD) attack."""
        images = images.clone().detach().to(self.device)
        labels = labels.to(self.device)

        # Start from a random point inside the epsilon-ball
        adv_images = images.clone().detach()
        adv_images = adv_images + torch.empty_like(adv_images).uniform_(-epsilon, epsilon)
        adv_images = torch.clamp(adv_images, 0, 1).detach()

        for i in range(num_iter):
            adv_images.requires_grad = True
            outputs = self.model(adv_images)
            loss = F.cross_entropy(outputs, labels)

            # Gradient with respect to the current adversarial input
            grad = torch.autograd.grad(loss, adv_images,
                                       retain_graph=False, create_graph=False)[0]

            # Gradient-sign step, then project back into the epsilon-ball
            adv_images = adv_images.detach() + alpha * grad.sign()
            delta = torch.clamp(adv_images - images, min=-epsilon, max=epsilon)
            adv_images = torch.clamp(images + delta, min=0, max=1).detach()

        return adv_images

    def patch_attack(self, images: torch.Tensor, patch_size: int = 50,
                     target_class: int = 0) -> Tuple[torch.Tensor, torch.Tensor]:
        """Targeted adversarial patch attack."""
        images = images.to(self.device)
        batch_size, channels, height, width = images.shape

        # Random initial patch
        patch = torch.rand(channels, patch_size, patch_size, device=self.device)
        patch.requires_grad = True

        # Random location; +1 because the upper bound is exclusive
        x_offset = np.random.randint(0, width - patch_size + 1)
        y_offset = np.random.randint(0, height - patch_size + 1)

        optimizer = optim.Adam([patch], lr=0.01)
        target_labels = torch.full((batch_size,), target_class,
                                   dtype=torch.long, device=self.device)

        for epoch in range(100):
            optimizer.zero_grad()

            # Paste the patch into a copy of the batch
            patched_images = images.clone()
            patched_images[:, :, y_offset:y_offset + patch_size,
                           x_offset:x_offset + patch_size] = patch

            # Minimizing the loss toward target_class makes the attack targeted
            outputs = self.model(patched_images)
            loss = F.cross_entropy(outputs, target_labels)
            loss.backward()
            optimizer.step()

            # Keep patch values in the valid range
            with torch.no_grad():
                patch.clamp_(0, 1)

        # Build the final adversarial examples
        final_images = images.clone()
        final_images[:, :, y_offset:y_offset + patch_size,
                     x_offset:x_offset + patch_size] = patch.detach()

        return final_images, patch.detach()
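
The FGSM update used by `fgsm_attack` is easy to verify by hand on a tiny model. The sketch below is an illustration under stated assumptions, not part of the framework: it swaps the neural network for a two-feature logistic model whose input gradient has a closed form, and the weights, input, and function names are made up for the example.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(x: List[float], w: List[float], b: float) -> float:
    """Probability of class 1 under a logistic model (toy stand-in for the classifier)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def bce_loss(p: float, y: int) -> float:
    """Binary cross-entropy for one sample."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def fgsm_step(x: List[float], y: int, w: List[float], b: float,
              epsilon: float) -> List[float]:
    """One FGSM step: move each feature by epsilon along the sign of the loss gradient."""
    p = predict(x, w, b)
    # For binary cross-entropy, d(loss)/d(x_i) = (p - y) * w_i
    grad = [(p - y) * wi for wi in w]
    sign = [(g > 0) - (g < 0) for g in grad]
    # Clamp to the valid input range [0, 1]
    return [min(1.0, max(0.0, xi + epsilon * s)) for xi, s in zip(x, sign)]


w, b = [2.0, -1.0], 0.0  # hypothetical model parameters
x, y = [0.2, 0.8], 1     # hypothetical clean input and its true label
x_adv = fgsm_step(x, y, w, b, epsilon=0.1)
loss_clean = bce_loss(predict(x, w, b), y)
loss_adv = bce_loss(predict(x_adv, w, b), y)
print(x_adv, loss_clean, loss_adv)
```

Each feature moves by exactly `epsilon` in the direction that raises the loss, so `loss_adv` comes out larger than `loss_clean` while the perturbation stays within the L-infinity budget.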

class AdversarialDefenseSystem:
    """Adversarial defense system."""

    def __init__(self, model: nn.Module):
        self.model = model
        self.device = next(model.parameters()).device

    def adversarial_training(self, train_loader: DataLoader,
                             attack_config: AdversarialConfig,
                             epochs: int = 10) -> Dict[str, List[float]]:
        """Adversarial training on a mix of clean and adversarial samples."""
        optimizer = optim.Adam(self.model.parameters(), lr=0.001)
        attack_generator = AdversarialAttackGenerator(self.model)

        train_losses = []
        train_accuracies = []

        for epoch in range(epochs):
            self.model.train()
            total_loss = 0.0
            correct = 0
            total = 0

            for batch_idx, (images, labels) in enumerate(train_loader):
                images, labels = images.to(self.device), labels.to(self.device)

                # Generate adversarial examples for this batch
                if attack_config.attack_type == AttackType.FGSM:
                    adv_images = attack_generator.fgsm_attack(
                        images, labels, attack_config.epsilon
                    )
                elif attack_config.attack_type == AttackType.PGD:
                    adv_images = attack_generator.pgd_attack(
                        images, labels, attack_config.epsilon,
                        attack_config.alpha, attack_config.num_iter
                    )
                else:
                    adv_images = images  # fall back to standard training

                # Mixed training: clean samples + adversarial samples
                mixed_images = torch.cat([images, adv_images], dim=0)
                mixed_labels = torch.cat([labels, labels], dim=0)

                optimizer.zero_grad()
                outputs = self.model(mixed_images)
                loss = F.cross_entropy(outputs, mixed_labels)
                loss.backward()
                optimizer.step()

                total_loss += loss.item()
                _, predicted = outputs.max(1)
                total += mixed_labels.size(0)
                correct += predicted.eq(mixed_labels).sum().item()

            epoch_loss = total_loss / len(train_loader)
            epoch_acc = 100. * correct / total

            train_losses.append(epoch_loss)
            train_accuracies.append(epoch_acc)

            print(f'Epoch {epoch}: Loss={epoch_loss:.4f}, Acc={epoch_acc:.2f}%')

        return {'losses': train_losses, 'accuracies': train_accuracies}

    def input_transformation_defense(self, images: torch.Tensor) -> torch.Tensor:
        """Input transformation defense (random noise, flip, and blur)."""
        transformed_images = []

        for image in images:
            # Small Gaussian noise
            noise = torch.randn_like(image) * 0.01
            image_with_noise = image + noise

            # Random horizontal flip (dim 2 is width for a C x H x W tensor)
            if np.random.random() > 0.5:
                image_with_noise = torch.flip(image_with_noise, [2])

            # A small random rotation is omitted in this simplified version

            # Occasional 3x3 box blur as a cheap stand-in for Gaussian blur
            if np.random.random() > 0.7:
                image_with_noise = F.avg_pool2d(
                    image_with_noise.unsqueeze(0), kernel_size=3, stride=1, padding=1
                ).squeeze(0)

            transformed_images.append(image_with_noise)

        return torch.stack(transformed_images)

    def ensemble_defense(self, images: torch.Tensor,
                         models: List[nn.Module]) -> torch.Tensor:
        """Ensemble defense: average the class probabilities of several models."""
        all_outputs = []

        for model in models:
            model.eval()
            with torch.no_grad():
                outputs = model(images)
                all_outputs.append(F.softmax(outputs, dim=1))

        # Average the predictions
        ensemble_output = torch.mean(torch.stack(all_outputs), dim=0)
        return ensemble_output

    def detect_adversarial_examples(self, images: torch.Tensor,
                                    threshold: float = 1e-3) -> torch.Tensor:
        """Flag inputs whose prediction confidence is unstable under small noise.

        The score is a variance of probabilities (at most about 0.28 for 10
        samples), so useful thresholds are small. The default is a placeholder
        that should be calibrated on clean data.
        """
        self.model.eval()
        images = images.to(self.device)
        detection_scores = []

        for image in images:
            image = image.unsqueeze(0)

            # Predictions for 10 slightly perturbed copies of the input
            perturbed_batch = torch.cat(
                [image + torch.randn_like(image) * 0.01 for _ in range(10)], dim=0
            )
            with torch.no_grad():
                perturbed_outputs = self.model(perturbed_batch)
                perturbed_confidences = F.softmax(perturbed_outputs, dim=1).max(dim=1)[0]

            # Adversarial examples tend to be sensitive to small perturbations,
            # so a high variance in confidence is treated as suspicious
            detection_scores.append(torch.var(perturbed_confidences).item())

        detection_tensor = torch.tensor(detection_scores)
        return detection_tensor > threshold
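
The score computed by `detect_adversarial_examples` is the variance of the top-class confidence under small input noise. The following standard-library sketch reproduces that score on a toy model to show its scale. `noise_sensitivity_score` and `toy_confidence` are hypothetical names, and the steep 1-D logistic model is an assumption chosen so that one input sits on the decision boundary and one sits far from it.

```python
import math
import random
import statistics
from typing import Callable, List


def noise_sensitivity_score(confidence_fn: Callable[[List[float]], float],
                            x: List[float], sigma: float = 0.01,
                            trials: int = 10, seed: int = 0) -> float:
    """Sample variance of the top-class confidence under Gaussian input noise."""
    rng = random.Random(seed)
    confidences = []
    for _ in range(trials):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        confidences.append(confidence_fn(noisy))
    # Unbiased sample variance, matching the default of torch.var
    return statistics.variance(confidences)


def toy_confidence(x: List[float]) -> float:
    """Top-class confidence of a steep 1-D logistic model with its boundary at 0.5."""
    p = 1.0 / (1.0 + math.exp(-200.0 * (x[0] - 0.5)))
    return max(p, 1.0 - p)


score_near = noise_sensitivity_score(toy_confidence, [0.5])  # on the boundary
score_far = noise_sensitivity_score(toy_confidence, [0.9])   # far from it
print(score_near, score_far)
```

Because every confidence lies in [0, 1], the sample variance over 10 trials cannot exceed 0.25 * 10 / 9, roughly 0.28. A detection threshold therefore has to sit well below that bound, and in practice it would be chosen from the scores observed on clean inputs.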

class HCaptchaMLSystem:
    """hCaptcha machine learning system."""

    def __init__(self):
        self.challenge_generator = HCaptchaChallengeGenerator()
        self.classifier_model = None
        self.defense_system = None
        self.attack_generator = None

    async def initialize_system(self, model_path: Optional[str] = None):
        """Initialize the classifier, the defense system, and the attack generator."""
        device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

        # ResNet-50 backbone with ImageNet weights; `weights=` replaces the
        # deprecated `pretrained=True` (torchvision >= 0.13)
        self.classifier_model = resnet50(weights="DEFAULT")
        self.classifier_model.fc = nn.Linear(
            self.classifier_model.fc.in_features, 2  # binary: target / non-target
        )

        if model_path:
            self.classifier_model.load_state_dict(
                torch.load(model_path, map_location=device)
            )

        self.classifier_model.to(device)

        # Initialize the defense and attack components
        self.defense_system = AdversarialDefenseSystem(self.classifier_model)
        self.attack_generator = AdversarialAttackGenerator(self.classifier_model)

    async def generate_secure_challenge(self, challenge_type: str) -> Dict[str, Any]:
        """Generate a challenge and apply the input transformation defense."""
        # Generate the base challenge
        challenge = self.challenge_generator.generate_challenge_set(challenge_type)

        if not challenge:
            return {'error': 'Challenge generation failed'}

        # ImageNet statistics used by the generator's Normalize transform
        mean = torch.tensor([0.485, 0.456, 0.406]).view(3, 1, 1)
        std = torch.tensor([0.229, 0.224, 0.225]).view(3, 1, 1)

        # Apply defenses
        enhanced_images = []
        for image in challenge['grid_images']:
            # Convert to a tensor
            image_tensor = self.challenge_generator.transform(
                Image.fromarray(image)
            ).unsqueeze(0)

            # Apply the input transformation defense
            defended_image = self.defense_system.input_transformation_defense(
                image_tensor
            )

            # Undo the normalization and clip before converting back to uint8
            defended = (defended_image.squeeze(0) * std + mean).clamp(0, 1)
            defended_array = (defended.permute(1, 2, 0).numpy() * 255).astype(np.uint8)
            enhanced_images.append(defended_array)

        challenge['grid_images'] = enhanced_images
        challenge['security_level'] = 'high'
        challenge['defense_applied'] = True

        return challenge

    async def evaluate_robustness(self, test_images: torch.Tensor,
                                  test_labels: torch.Tensor) -> Dict[str, Dict[str, float]]:
        """Evaluate model robustness against several attack settings."""
        results = {}

        device = next(self.classifier_model.parameters()).device
        test_images = test_images.to(device)
        test_labels = test_labels.to(device)
        self.classifier_model.eval()

        # Attack settings to test
        attack_configs = [
            AdversarialConfig(AttackType.FGSM, epsilon=0.01),
            AdversarialConfig(AttackType.FGSM, epsilon=0.03),
            AdversarialConfig(AttackType.PGD, epsilon=0.03, num_iter=20),
            AdversarialConfig(AttackType.PGD, epsilon=0.03, num_iter=40)
        ]

        for config in attack_configs:
            # Include the iteration count so the two PGD settings get distinct keys
            attack_name = f"{config.attack_type.value}_eps_{config.epsilon}"
            if config.attack_type == AttackType.PGD:
                attack_name += f"_iter_{config.num_iter}"

            if config.attack_type == AttackType.FGSM:
                adv_images = self.attack_generator.fgsm_attack(
                    test_images, test_labels, config.epsilon
                )
            elif config.attack_type == AttackType.PGD:
                adv_images = self.attack_generator.pgd_attack(
                    test_images, test_labels, config.epsilon,
                    config.alpha, config.num_iter
                )
            else:
                continue  # attack type not implemented here

            # Compare predictions on clean and adversarial inputs
            with torch.no_grad():
                original_outputs = self.classifier_model(test_images)
                adv_outputs = self.classifier_model(adv_images)

                original_pred = original_outputs.argmax(dim=1)
                adv_pred = adv_outputs.argmax(dim=1)

                # Attack success rate: fraction of predictions that changed
                attack_success = (original_pred != adv_pred).float().mean().item()

                # Accuracy on clean inputs
                clean_accuracy = (original_pred == test_labels).float().mean().item()

                # Accuracy on adversarial inputs
                adv_accuracy = (adv_pred == test_labels).float().mean().item()

            results[attack_name] = {
                'attack_success_rate': attack_success,
                'clean_accuracy': clean_accuracy,
                'adversarial_accuracy': adv_accuracy,
                'robustness_score': 1.0 - attack_success
            }

        return results
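
The four numbers reported by `evaluate_robustness` depend only on the labels and the two sets of predictions, so they can be checked on a handful of values without a model. This is a minimal sketch with plain Python lists; `robustness_metrics` is a hypothetical helper and the sample predictions are made up.

```python
from typing import Dict, Sequence


def robustness_metrics(labels: Sequence[int], clean_pred: Sequence[int],
                       adv_pred: Sequence[int]) -> Dict[str, float]:
    """Compute the same four metrics as evaluate_robustness from plain lists."""
    n = len(labels)
    flipped = sum(c != a for c, a in zip(clean_pred, adv_pred))
    clean_correct = sum(c == y for c, y in zip(clean_pred, labels))
    adv_correct = sum(a == y for a, y in zip(adv_pred, labels))
    attack_success = flipped / n  # fraction of predictions the attack changed
    return {
        'attack_success_rate': attack_success,
        'clean_accuracy': clean_correct / n,
        'adversarial_accuracy': adv_correct / n,
        'robustness_score': 1.0 - attack_success,
    }


metrics = robustness_metrics(
    labels=[1, 0, 1, 1],
    clean_pred=[1, 0, 1, 0],
    adv_pred=[0, 0, 1, 0],
)
print(metrics)
# {'attack_success_rate': 0.25, 'clean_accuracy': 0.75,
#  'adversarial_accuracy': 0.5, 'robustness_score': 0.75}
```

Note that the success rate counts any changed prediction, including changes on samples the model already got wrong, which is why it is worth reading together with the adversarial accuracy.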

Professional Solution Integration

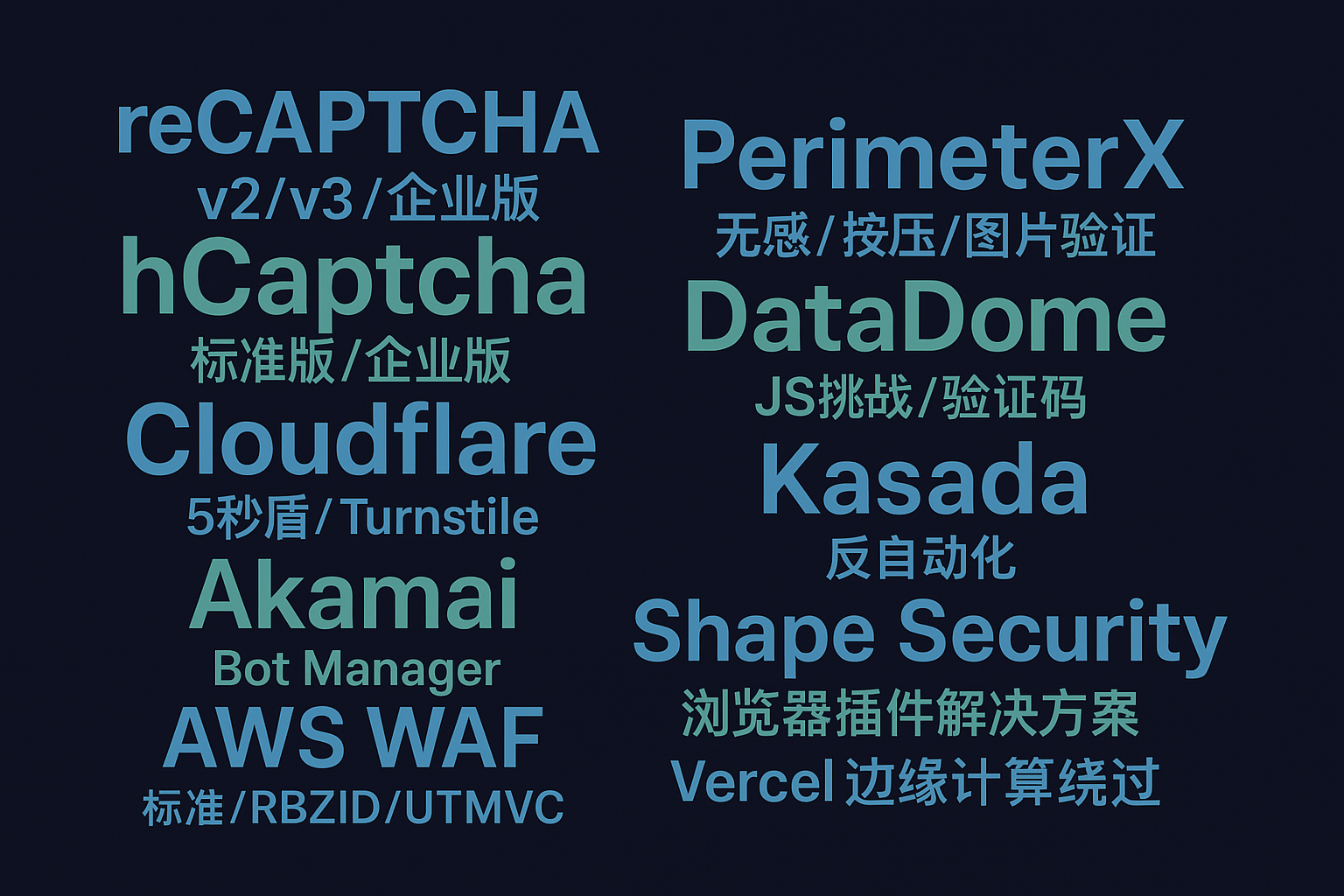

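In machine learning security, research on adversarial examples is important for improving the robustness of AI systems. "hCaptcha Machine Learning Adversarial - Professional AI Security Technical Services" provides complete technical support for adversarial example generation and defense, helping research institutions and companies build safer and more reliable machine learning systems.

For teams that need in-depth AI security research and CAPTCHA technology development, "AI-Driven CAPTCHA Recognition - Supports 18 Mainstream CAPTCHA Types" has extensive machine learning security experience and can provide end-to-end technical solutions, from adversarial attack analysis to defense strategy optimization.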

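Conclusion

hCaptcha's adversarial machine learning techniques reflect the current state of the art in AI security. Combining adversarial example generation, defense mechanisms, and robustness evaluation provides important technical support for building more secure CAPTCHA systems. The approach advances the CAPTCHA field and also lays groundwork for broader AI security applications.

As deep learning continues to develop, adversarial machine learning will play an increasingly important role in critical areas such as network security, autonomous driving, and medical diagnosis. Future research directions include more efficient adversarial attacks, stronger defense mechanisms, more accurate robustness metrics, and more practical strategies for secure deployment.

Keywords: hCaptcha adversarial techniques, machine learning security, adversarial example generation, deep learning defense, AI robustness evaluation, CAPTCHA security, neural network attacks, intelligent security systems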
