IDEA + Scala + Spark Program Development Workflow


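1. Create a new Java project

2. Set up the Scala SDK

File -> Project Structure -> Libraries -> +, then add the Scala SDK. If no Scala SDK has been configured yet, point IDEA at the directory where Scala is installed on your system.

3. Import the Spark libraries

File -> Project Structure -> Libraries -> +, then import the Spark jar files. You can give the whole set of jars a single name here, such as spark-lib.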

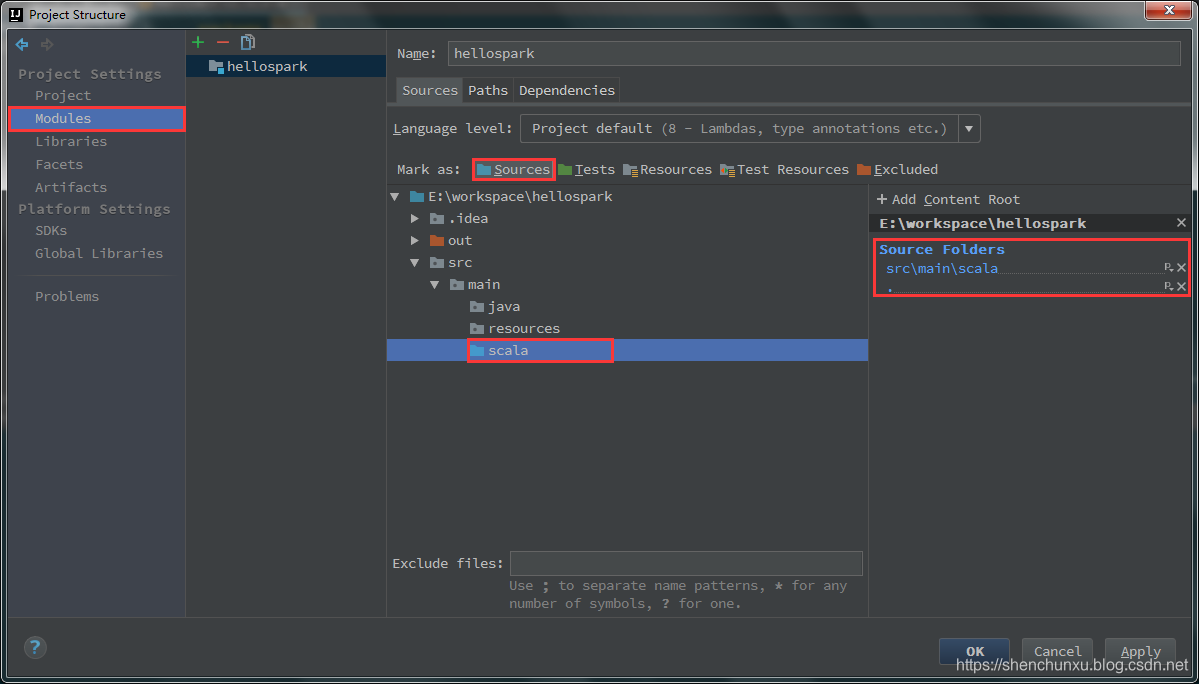
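As an alternative to adding the jars by hand, the same dependency could be declared in a build file. This is only a minimal sketch, assuming the project is built with sbt (which this post does not use) and targets the Spark 2.3.0 distribution that appears in the test program's file path. The project name is hypothetical.

```scala
// build.sbt (hypothetical; the post itself imports the jars manually)
name := "idea-spark-demo"            // assumed project name
scalaVersion := "2.11.12"            // Spark 2.3.x is built against Scala 2.11
libraryDependencies += "org.apache.spark" %% "spark-core" % "2.3.0"
```

With a build file like this, IDEA would resolve the Spark jars itself instead of relying on a manually named library such as spark-lib.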

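4. Set up the Source folder

File -> Project Structure -> Modules -> Sources -> src

Select src, right-click, choose New Folder, and name it main. Under main, create three folders: java, resources, and scala. Finally, right-click the scala folder and mark it as Sources.

src\main\scala then appears as a source folder under + Add Content Root on the right side of the panel, which lets you confirm that the file structure is correct.

Note that if the source folder is not set, or the file structure is set up incorrectly, running the Spark program later will fail:

- No Source folder set: Error: Could not find or load main class scala.HelloWorld

- Both main and main/scala marked as Sources: Error=HelloWorld is already defined as object HelloWorld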
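The folder layout from step 4 can also be created or checked from code. The following is a minimal sketch using only the JDK's `java.nio.file` API; `LayoutCheck` and `ensureLayout` are hypothetical names, and the project root is assumed to be passed as the first argument (defaulting to the current directory).

```scala
import java.nio.file.{Files, Path, Paths}

object LayoutCheck {
  // Folder names taken from step 4, relative to the project root.
  val expected: Seq[String] =
    Seq("src/main/java", "src/main/resources", "src/main/scala")

  /** Creates any missing folders under root and returns the resulting paths. */
  def ensureLayout(root: Path): Seq[Path] =
    expected.map(rel => Files.createDirectories(root.resolve(rel)))

  def main(args: Array[String]): Unit = {
    val root = Paths.get(if (args.nonEmpty) args(0) else ".")
    ensureLayout(root).foreach(p => println(s"ok: $p"))
  }
}
```

This only creates the directories. Marking src/main/scala (and only that folder) as Sources still has to be done in Project Structure, since that setting is what the two errors above depend on.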

5. Write a Spark test program

Right-click the scala folder -> New -> Scala Class -> choose Object

The test code is as follows:

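```scala
package scala

import org.apache.spark.{SparkConf, SparkContext}

object HelloWorld {
  def main(args: Array[String]): Unit = {
    // Path to the comma-separated input file
    val logFile = "E:\\software\\spark-2.3.0-bin-hadoop2.7\\helloSpark.txt"
    // Run Spark locally with a single worker thread
    val conf = new SparkConf().setAppName("wordcount").setMaster("local")
    val sc = new SparkContext(conf)
    val rdd = sc.textFile(logFile)
    // Split each line on commas, pair every word with 1, then sum the counts per word
    val counts = rdd.flatMap(line => line.split(",")).map(x => (x, 1)).reduceByKey((x, y) => x + y)
    counts.foreach(println)
    sc.stop()
  }
}
```

Press Ctrl+Shift+F10 to run the program. The log output is as follows.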

```
2019-08-05 22:18:32 INFO Executor:54 - Running task 0.0 in stage 1.0 (TID 1)
2019-08-05 22:18:32 INFO ShuffleBlockFetcherIterator:54 - Getting 1 non-empty blocks out of 1 blocks
2019-08-05 22:18:32 INFO ShuffleBlockFetcherIterator:54 - Started 0 remote fetches in 6 ms
(scala,1)
(spark,2)
(hello,3)
(sparkui,1)
(java,1)
2019-08-05 22:18:32 INFO Executor:54 - Finished task 0.0 in stage 1.0 (TID 1). 1138 bytes result sent to driver
2019-08-05 22:18:32 INFO TaskSetManager:54 - Finished task 0.0 in stage 1.0 (TID 1) in 56 ms on localhost (executor driver) (1/1)
2019-08-05 22:18:32 INFO TaskSchedulerImpl:54 - Removed TaskSet 1.0, whose tasks have all completed, from pool
2019-08-05 22:18:32 INFO DAGScheduler:54 - ResultStage 1 (main at <unknown>:0) finished in 0.072 s
2019-08-05 22:18:32 INFO DAGScheduler:54 - Job 0 finished: main at <unknown>:0, took 0.859162 s
2019-08-05 22:18:32 INFO AbstractConnector:318 - Stopped Spark@1f4c2977{HTTP/1.1,[http/1.1]}{0.0.0.0:4040}
2019-08-05 22:18:32 INFO SparkUI:54 - Stopped Spark web UI at http://10.119.9.164:4040
```
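To see what the flatMap / map / reduceByKey pipeline computes without a Spark installation, the same steps can be sketched on plain Scala collections. The sample lines below are an assumption: the contents of helloSpark.txt are not shown in this post, so they were chosen only so that the totals match the counts printed in the log. `WordCountSketch` and `countWords` are hypothetical names, and `groupBy` stands in for the shuffle that `reduceByKey` performs in Spark.

```scala
object WordCountSketch {
  // Hypothetical input; the author's helloSpark.txt is not shown in the post.
  val sampleLines: Seq[String] =
    Seq("hello,spark", "hello,scala,spark", "hello,java,sparkui")

  /** Split on commas, pair each word with 1, then sum the 1s per word. */
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(line => line.split(","))     // one element per word
      .map(word => (word, 1))               // (word, 1) pairs
      .groupBy { case (word, _) => word }   // group pairs by word (local stand-in for the shuffle)
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }

  def main(args: Array[String]): Unit =
    countWords(sampleLines).foreach(println)
}
```

As with the Spark version, the order in which the pairs are printed is not guaranteed.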
