OpenCV Project in Practice: Answer Sheet Recognition

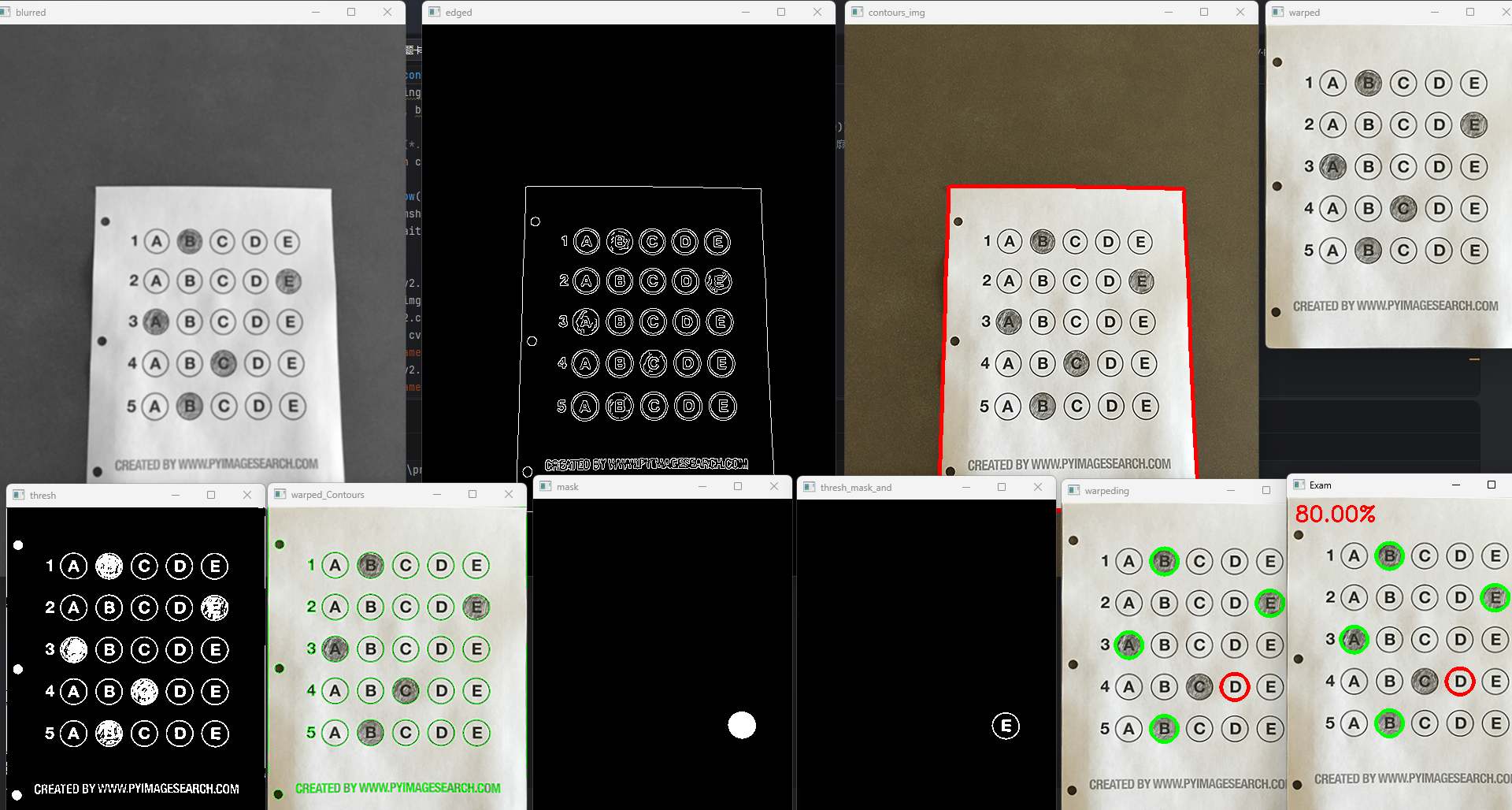


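Given the answer sheet shown in the figure, the task is to recognize the marked answers and compare them against the correct answers (B, E, A, D, B).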

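To make the comparison possible, each question index is mapped to the index of its correct option (0 = A, 1 = B, 2 = C, 3 = D, 4 = E):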

ANSWER_KEY = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}
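As a small illustration of where these numbers come from, the same dictionary can be derived from the answer letters. This is only a sketch: the names `letters` and `OPTIONS` are introduced here for the example and do not appear in the original code.

```python
# Derive the answer key from the letter answers given above.
OPTIONS = "ABCDE"   # option letters in left-to-right order (assumed layout)
letters = "BEADB"   # correct answers for questions 0..4

# Question index -> index of the correct option
ANSWER_KEY = {q: OPTIONS.index(ch) for q, ch in enumerate(letters)}
print(ANSWER_KEY)
```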

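Looking at the image, the sheet first needs a perspective transform. After that, all the option bubbles are extracted and sorted, and finally the marked answers are determined.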

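The workflow in detail:

1. Read the image and preprocess it
2. Find the largest outer border of the answer sheet
3. Apply the perspective transform
4. Extract all the option bubbles
5. Sort the contours (top to bottom, then left to right)
6. Determine which option is marked for each question (here, by counting white pixels)
7. Compare against the answer key and compute the score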

import numpy as np
import cv2

# Correct answers: question index -> index of the correct option (0 = A ... 4 = E)
ANSWER_KEY = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}

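def order_points(pts):
    # Holds the corners in sorted order:
    # index 0 = top-left, 1 = top-right, 2 = bottom-right, 3 = bottom-left
    rect = np.zeros((4, 2), dtype="float32")
    # The top-left corner has the smallest x + y, the bottom-right the largest
    s = pts.sum(axis=1)
    rect[0] = pts[np.argmin(s)]
    rect[2] = pts[np.argmax(s)]
    # np.diff yields y - x: smallest for the top-right, largest for the bottom-left
    diff = np.diff(pts, axis=1)
    rect[1] = pts[np.argmin(diff)]
    rect[3] = pts[np.argmax(diff)]
    return rect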
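To see how the sum and difference rules pick out each corner, here is a small self-contained check on four shuffled points. The coordinates are made up for illustration, and the function is repeated so that the snippet runs on its own.

```python
import numpy as np

def order_points(pts):
    # Same logic as above: sort corners as tl, tr, br, bl
    rect = np.zeros((4, 2), dtype="float32")
    s = pts.sum(axis=1)             # x + y
    rect[0] = pts[np.argmin(s)]     # top-left
    rect[2] = pts[np.argmax(s)]     # bottom-right
    diff = np.diff(pts, axis=1)     # y - x
    rect[1] = pts[np.argmin(diff)]  # top-right
    rect[3] = pts[np.argmax(diff)]  # bottom-left
    return rect

# Hypothetical corners of a slightly skewed sheet, in arbitrary order
pts = np.array([[110, 30], [5, 130], [10, 20], [120, 140]], dtype="float32")
ordered = order_points(pts)
print(ordered)
```

Note that this heuristic assumes the sheet is roughly upright. If the quadrilateral is rotated by about 45 degrees, two corners can have nearly equal sums or differences, and the ordering may no longer be reliable.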

def four_point_transform(image, pts):
    # Order the input corner points
    rect = order_points(pts)
    tl, tr, br, bl = rect
    # Compute the width and height of the image after the perspective transform
    widthA = np.sqrt((tr[0] - tl[0]) ** 2 + (tr[1] - tl[1]) ** 2)
    widthB = np.sqrt((br[0] - bl[0]) ** 2 + (br[1] - bl[1]) ** 2)
    maxwidth = max(int(widthA), int(widthB))
    heightA = np.sqrt((tl[0] - bl[0]) ** 2 + (tl[1] - bl[1]) ** 2)
    heightB = np.sqrt((tr[0] - br[0]) ** 2 + (tr[1] - br[1]) ** 2)
    maxheight = max(int(heightA), int(heightB))
    # Corner positions after the transform
    dst = np.array([[0, 0], [maxwidth - 1, 0], [maxwidth - 1, maxheight - 1], [0, maxheight - 1]], dtype="float32")
    # cv2.getPerspectiveTransform(src, dst[, solveMethod]) -> MP
    #   computes the transform that maps one quadrilateral onto the other
    #   src: corner coordinates of the quadrilateral before the transform
    #   dst: corner coordinates of the quadrilateral after the transform
    # cv2.warpPerspective(src, MP, dsize[, dst[, flags[, borderMode[, borderValue]]]]) -> dst
    #   src: source image
    #   MP: perspective transform matrix, 3 rows by 3 columns
    #   dsize: size of the output image, a tuple (width, height)
    M = cv2.getPerspectiveTransform(rect, dst)
    warped = cv2.warpPerspective(image, M, (maxwidth, maxheight))
    return warped
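To make the role of the 3x3 matrix more concrete, the following NumPy-only sketch solves for a matrix that maps four source corners onto the destination rectangle and then checks the mapping. It illustrates the underlying math and is not OpenCV's implementation. The helper names `perspective_matrix` and `apply_perspective` and the corner coordinates are assumptions made for this example.

```python
import numpy as np

def perspective_matrix(src, dst):
    """Solve for the 3x3 matrix H (with H[2, 2] fixed to 1) that maps src to dst."""
    A, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h11*x + h12*y + h13) / (h31*x + h32*y + 1), and likewise for v
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.extend([u, v])
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)

def apply_perspective(H, pts):
    """Apply H to an (N, 2) array of points using homogeneous coordinates."""
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return homog[:, :2] / homog[:, 2:3]

# Hypothetical sheet corners, already ordered tl, tr, br, bl
src = np.array([[10, 20], [110, 30], [120, 140], [5, 130]], dtype=float)
tl, tr, br, bl = src

# Output size: the longer of each pair of opposite edges, truncated to int
max_width = max(int(np.linalg.norm(tr - tl)), int(np.linalg.norm(br - bl)))
max_height = max(int(np.linalg.norm(tl - bl)), int(np.linalg.norm(tr - br)))
dst = np.array([[0, 0], [max_width - 1, 0],
                [max_width - 1, max_height - 1], [0, max_height - 1]], dtype=float)

H = perspective_matrix(src, dst)
mapped = apply_perspective(H, src)  # each source corner should land on its dst corner
print(max_width, max_height)
print(np.round(mapped, 3))
```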

def sort_contours(cnts, method="left-to-right"):
    reverse = False
    i = 0  # index into the bounding box (x, y, w, h): 0 sorts by x, 1 sorts by y
    if method == 'right-to-left' or method == 'bottom-to-top':
        reverse = True
    if method == 'top-to-bottom' or method == 'bottom-to-top':
        i = 1
    boundingBoxes = [cv2.boundingRect(c) for c in cnts]
    (cnts, boundingBoxes) = zip(*sorted(zip(cnts, boundingBoxes),
                                        key=lambda b: b[1][i], reverse=reverse))
    # zip(*...) unpacks the sorted list of (contour, box) pairs and regroups it
    # into two tuples: one with all the contours, one with all the bounding boxes
    return cnts, boundingBoxes
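The script later uses this function twice: once to sort all bubbles from top to bottom, and then, for each group of five, from left to right. The sketch below shows the same two-pass idea on plain `(x, y, w, h)` tuples so it can be run without OpenCV. The function name `sort_into_rows` and the box values are hypothetical, and three bubbles per row are used only to keep the example short.

```python
def sort_into_rows(boxes, per_row):
    """Sort boxes top to bottom, cut them into rows, then sort each row left to right."""
    by_y = sorted(boxes, key=lambda b: b[1])  # pass 1: sort by y
    rows = []
    for start in range(0, len(by_y), per_row):
        row = sorted(by_y[start:start + per_row], key=lambda b: b[0])  # pass 2: sort by x
        rows.append(row)
    return rows

# Hypothetical bounding boxes for 2 questions with 3 options each.
# The y values within a row differ by a pixel or two, as they would on a real scan.
boxes = [(120, 52, 20, 20), (20, 10, 20, 20), (70, 50, 20, 20),
         (70, 12, 20, 20), (20, 51, 20, 20), (120, 11, 20, 20)]

rows = sort_into_rows(boxes, per_row=3)
for row in rows:
    print(row)
```

The second pass matters because bubbles in the same row rarely share exactly the same y coordinate, so sorting by y alone leaves the order within a row arbitrary. The approach also assumes that every row contributes exactly `per_row` contours; a missed or extra contour would shift all later rows.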

def cv_show(name, img):
    cv2.imshow(name, img)
    cv2.waitKey(0)

# Preprocessing
image = cv2.imread('../data/images/test_01.png')
if image is None:  # cv2.imread returns None when the file cannot be read
    raise FileNotFoundError("could not read '../data/images/test_01.png'")
contours_img = image.copy()
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
blurred = cv2.GaussianBlur(gray, ksize=(5, 5), sigmaX=0)
cv_show(name='blurred', img=blurred)
edged = cv2.Canny(blurred, threshold1=75, threshold2=200)
cv_show(name='edged', img=edged)

# Contour detection
# [-2] selects the contour list under both the OpenCV 3 and OpenCV 4 return conventions
cnts = cv2.findContours(edged.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]
cv2.drawContours(contours_img, cnts, -1, color=(0, 0, 255), thickness=3)
cv_show(name='contours_img', img=contours_img)

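docCnt = None

# Sort the contours by area, largest first, in preparation for the perspective transform
cnts = sorted(cnts, key=cv2.contourArea, reverse=True)
for c in cnts:  # iterate over every contour
    peri = cv2.arcLength(c, closed=True)
    approx = cv2.approxPolyDP(c, 0.02 * peri, closed=True)  # polygon approximation
    if len(approx) == 4:  # the first (largest) quadrilateral is taken as the sheet
        docCnt = approx
        break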

# Apply the perspective transform
if docCnt is None:
    raise RuntimeError("no four-cornered contour found for the answer sheet")
warped_t = four_point_transform(image, docCnt.reshape(4, 2))
warped_new = warped_t.copy()
cv_show(name='warped', img=warped_t)
warped = cv2.cvtColor(warped_t, cv2.COLOR_BGR2GRAY)

# Thresholding: inverted binary with Otsu's method, so pencil marks become white
thresh = cv2.threshold(warped, 0, 255, cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1]
cv_show(name='thresh', img=thresh)
thresh_Contours = thresh.copy()

# Find the contour of every bubble
cnts = cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]
warped_Contours = cv2.drawContours(warped_t, cnts, -1, color=(0, 255, 0), thickness=1)
cv_show(name='warped_Contours', img=warped_Contours)

questionCnts = []
for c in cnts:  # iterate over the contours and check aspect ratio and size
    (x, y, w, h) = cv2.boundingRect(c)
    ar = w / float(h)
    # These criteria depend on the actual image; adjust them as needed
    if w >= 20 and h >= 20 and 0.9 <= ar <= 1.1:
        questionCnts.append(c)
print(len(questionCnts))

###########################################################

# Sort the bubbles from top to bottom
questionCnts = sort_contours(questionCnts, method="top-to-bottom")[0]
correct = 0

# Each row has 5 options
for (q, i) in enumerate(np.arange(0, len(questionCnts), 5)):
    cnts = sort_contours(questionCnts[i:i + 5])[0]  # sort this row left to right
    bubbled = None
    # Iterate over every option in the row
    for (j, c) in enumerate(cnts):
        # Use a mask to evaluate this option
        mask = np.zeros(thresh.shape, dtype="uint8")
        cv2.drawContours(mask, [c], -1, 255, -1)  # thickness -1 fills the contour
        cv_show(name='mask', img=mask)
        # Decide whether this option was chosen by counting non-zero pixels.
        # The bitwise AND with the mask keeps only the pixels inside the bubble.
        thresh_mask_and = cv2.bitwise_and(thresh, thresh, mask=mask)
        cv_show(name='thresh_mask_and', img=thresh_mask_and)
        total = cv2.countNonZero(thresh_mask_and)  # number of non-zero pixels
        # Keep the option with the most non-zero pixels seen so far
        if bubbled is None or total > bubbled[0]:
            bubbled = (total, j)
    # Compare with the correct answer
    color = (0, 0, 255)
    k = ANSWER_KEY[q]
    if k == bubbled[1]:  # the marked option is correct
        color = (0, 255, 0)
        correct += 1
    # Outline the correct option: green if answered correctly, red otherwise
    cv2.drawContours(warped_new, [cnts[k]], -1, color, thickness=3)
    cv_show('warpeding', warped_new)

score = (correct / 5.0) * 100
print("[INFO] score: {:.2f}%".format(score))
cv2.putText(warped_new, "{:.2f}%".format(score), (10, 30), cv2.FONT_HERSHEY_SIMPLEX, 0.9, (0, 0, 255), 2)
cv2.imshow(winname="Original", mat=image)
cv2.imshow(winname="Exam", mat=warped_new)
cv2.waitKey(0)
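The fill test and the scoring can also be tried without a real scan. The sketch below builds a small synthetic thresholded sheet in NumPy, counts the white pixels inside each bubble region, and picks the fullest one per question. It is a simplified stand-in, not the script above: square regions replace the contour masks, `np.count_nonzero` replaces `cv2.countNonZero`, and the names `make_sheet`, `grade` and `marked` are hypothetical.

```python
import numpy as np

ANSWER_KEY = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}
marked = [1, 4, 0, 2, 1]  # hypothetical student answers; question 3 is wrong

def make_sheet(marked, n_options=5, cell=30):
    """Synthetic thresholded sheet: white outlines for every bubble, solid for marked ones."""
    sheet = np.zeros((len(marked) * cell, n_options * cell), dtype=np.uint8)
    for q, m in enumerate(marked):
        for j in range(n_options):
            y0, x0 = q * cell + 5, j * cell + 5
            sheet[y0:y0 + 20, x0:x0 + 20] = 255  # solid 20x20 bubble
            if j != m:
                sheet[y0 + 2:y0 + 18, x0 + 2:x0 + 18] = 0  # hollow out unmarked bubbles
    return sheet

def grade(sheet, answer_key, n_options=5, cell=30):
    """Return (score in percent, list of detected option indices)."""
    detected = []
    for q in range(len(answer_key)):
        counts = []
        for j in range(n_options):
            mask = np.zeros(sheet.shape, dtype=bool)
            mask[q * cell + 5:q * cell + 25, j * cell + 5:j * cell + 25] = True
            counts.append(np.count_nonzero(sheet[mask]))  # white pixels inside this bubble
        detected.append(int(np.argmax(counts)))  # fullest bubble wins
    correct = sum(1 for q, d in enumerate(detected) if d == answer_key[q])
    return 100 * correct / len(answer_key), detected

sheet = make_sheet(marked)
score, detected = grade(sheet, ANSWER_KEY)
print(detected, "{:.2f}%".format(score))
```

One consequence of taking the maximum is that some option is always selected, even when a row is left blank or has several bubbles filled in. A minimum pixel count would be one way to detect those cases, although the original script does not do this.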
