【Python语音识别系列】一文教你实现双声道录音文件语音识别(案例+源码)

一文教你实现双声道录音文件语音识别(案例+源码)

·

这是我的第457篇原创文章。

一、引言

双声道录音文件的语音识别,一般用于区分两个说话人(如客服 vs 客户),典型处理流程如下:

✅ 整体流程

🎧 双声道 WAV 文件 → 🎙️ 声道分离 → 🧠 逐声道语音识别 → 📋 对话重建(按时间排序)

二、实现过程

2.1 定义模型

核心代码:

model = AutoModel(

model="./workspace/official_models/speech_seaco_paraformer_large_asr_nat-zh-cn-16k-common-vocab8404-pytorch",

model_revision="v2.0.4",

vad_model="./workspace/official_models/speech_fsmn_vad_zh-cn-16k-common-pytorch",

vad_model_revision="v2.0.4",

punc_model="./workspace/official_models/punc_ct-transformer_zh-cn-common-vocab272727-pytorch",

punc_model_revision="v2.0.4",

spk_model = "./workspace/official_models/speech_campplus_sv_zh-cn_16k-common",

spk_model_revision="v2.0.2",

disable_update=True)2.2 声道分离

读取双声道音频,分离为两个单声道 numpy 数组

def separate_channels(wav_path: str):

"""读取双声道音频,分离为两个单声道 numpy 数组"""

audio, sample_rate = sf.read(wav_path) # shape: [samples, channels]

if audio.ndim != 2 or audio.shape[1] != 2:

raise ValueError("音频不是双声道格式!")

return audio[:, 0], audio[:, 1], sample_rate2.3 语音识别

核心代码:

def recognize_with_model(audio: np.ndarray, sample_rate: int) -> List[Dict]:

"""使用 FunASR 识别语音"""

result = model.generate(

input=audio,

input_fs=sample_rate,

hotword=None,

return_dict=True

)

return result[0]["sentence_info"]2.4 对话合并

核心代码:

def merge_dialogues(cust_segments, agent_segments) -> List[Dict]:

"""按起止时间戳排序合并对话"""

all_segs = [

{"role": "客户", **seg} for seg in cust_segments

] + [

{"role": "客服", **seg} for seg in agent_segments

]

dialogue_data = sorted(all_segs, key=lambda x: x["start"])

return dialogue_data2.5 完成处理逻辑

将上面的逻辑进行串联:

def process_bichannel_wav(wav_path: str):

print(f"处理文件:{wav_path}")

# ====================声道分离================

cust_audio, agent_audio, sr = separate_channels(wav_path)

# ====================语音识别================

result_cust = recognize_with_model(cust_audio, sr)

result_agent = recognize_with_model(agent_audio, sr)

# ====================组装对话================

dialogue = merge_dialogues(result_cust, result_agent)

# ====================打印最终合并结果================

for turn in dialogue:

start_sec = turn["start"] / 1000

end_sec = turn["end"] / 1000

print(f"[{start_sec:.2f}s - {end_sec:.2f}s] {turn['role']}:{turn['text']}")

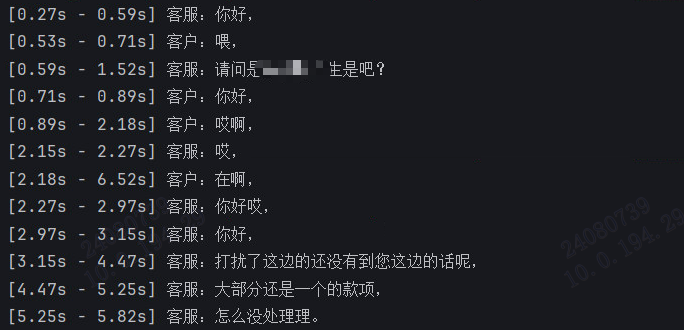

return dialogue2.6 脚本测试

代码:

if __name__ == "__main__":

# 传入一个双声道的客服录音

wav_file = "test.wav"

process_bichannel_wav(wav_file)结果:

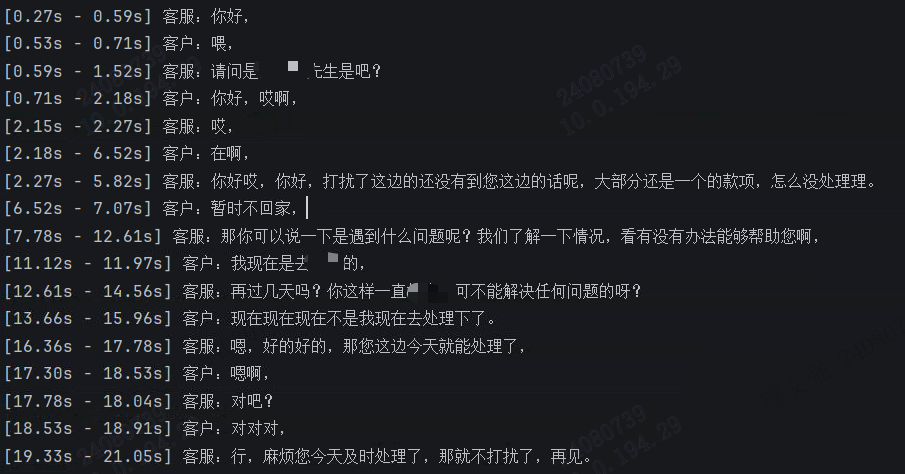

改进:合并连续相同角色的对话,使得对话记录更加简洁。对话合并的修改逻辑如下:

def merge_dialogues(cust_segments, agent_segments) -> List[Dict]:

"""按起止时间戳排序合并对话"""

all_segs = [

{"role": "客户", **seg} for seg in cust_segments

] + [

{"role": "客服", **seg} for seg in agent_segments

]

dialogue_data = sorted(all_segs, key=lambda x: x["start"])

merged_data = []

for role, group in itertools.groupby(dialogue_data, key=lambda x: x['role']):

group_list = list(group)

merged_entry = {

'role': role,

'text': ''.join(entry['text'] for entry in group_list),

'start': group_list[0]['start'],

'end': group_list[-1]['end'],

'timestamp': [ts for entry in group_list for ts in entry['timestamp']],

'spk': group_list[0]['spk']

}

merged_data.append(merged_entry)

return merged_data结果:

作者简介:

读研期间发表6篇SCI数据挖掘相关论文,现在某研究院从事数据算法相关科研工作,结合自身科研实践经历不定期分享关于Python、机器学习、深度学习、人工智能系列基础知识与应用案例。致力于只做原创,以最简单的方式理解和学习,关注我一起交流成长。需要数据集和源码的小伙伴可以关注底部公众号添加作者微信。

更多推荐

已为社区贡献26条内容

已为社区贡献26条内容

所有评论(0)